In today’s data centers, where AI, high-performance computing, and cloud-based operations demand ever-increasing bandwidth, 400G and 800G optical technologies have become critical enablers of scalability. However, selecting the right optical solution for any use case is a delicate balancing act between cost, power consumption, and the required reach. From modulation technologies like PAM4 to coherent pluggables for metro and long-haul links, network engineers and IT planners face an evolving landscape of options. This article unpacks the physical-layer standards, architectural patterns, energy and cost models, and forward-looking migration strategies to empower IT decision-makers. Each chapter sheds light on key aspects of selecting the right optics across deployment scales, helping teams streamline costs while ensuring performance, reliability, and operational efficiency in dense network environments.

How Physical-Layer Standards and Modulation Shape Cost, Power, and Reach in 400G and 800G Optics

Physical-layer choices determine almost every tradeoff that follows in 400G and 800G optics selection. Reach targets, fiber type, lane speed, connector style, and modulation method all influence module cost, thermal load, and operational flexibility. That is why standards matter so much. They do more than define interoperability. They also define the economic and power envelope of each optical class.

At the shortest distances, the standards favor intensity-modulated direct-detect (IM/DD) optics using PAM4 across multiple lanes. For multimode fiber, SR variants typically serve the 50 to 100 meter range and remain attractive because the optics are comparatively simple. Yet that simplicity is not universal. An 800G SR8 module drives eight 100G PAM4 lanes where 400G SR8 drives eight 50G lanes, so power rises meaningfully. For single-mode fiber inside the data center, the DR and FR families become more important. DR uses parallel fibers and usually targets 500 meters. FR uses wavelength multiplexing over duplex fiber and stretches to 2 kilometers. The practical result: DR often lowers optical complexity and supports efficient breakouts, while FR reduces fiber count and cabling friction.

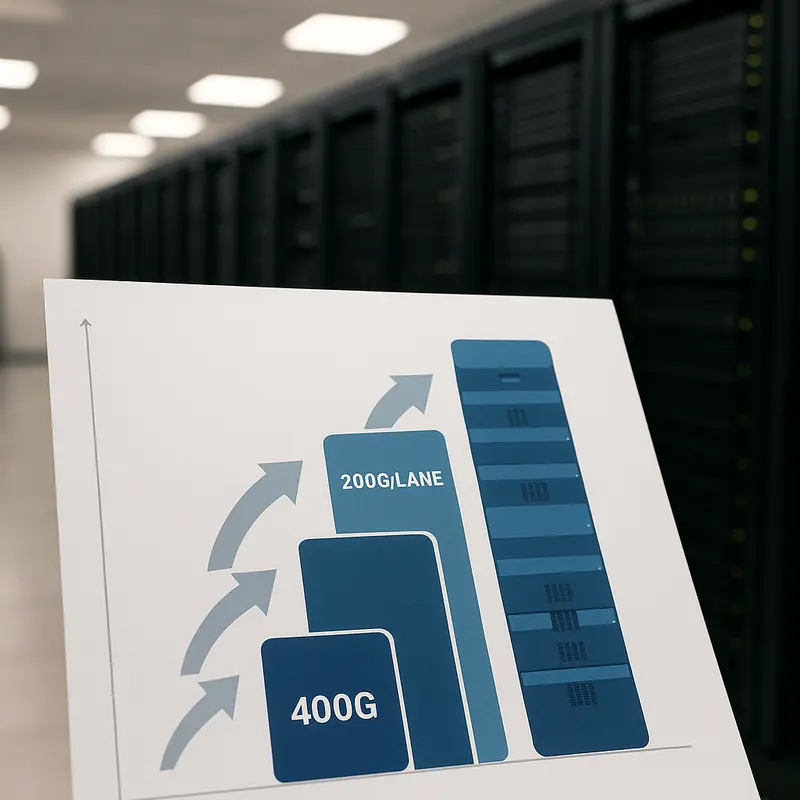

Those standards map directly to lane architecture. Mature 400G designs commonly rely on 8 x 50G or 4 x 100G PAM4 signaling. Current 800G deployments largely build on 8 x 100G PAM4, while 4 x 200G approaches are still maturing. That distinction matters because higher per-lane speed raises DSP demands, optical loss sensitivity, and thermal pressure. Early 200G-per-lane modules can improve port efficiency, but they usually arrive with a power and price premium before volumes and yields improve. In dense switches, a few extra watts per port can become a chassis-level limitation rather than a small specification detail.
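The lane arithmetic above can be made concrete with a short Python sketch. It assumes the IEEE 802.3 per-lane line rates of 53.125, 106.25, and 212.5 Gb/s for the nominal 50G, 100G, and 200G PAM4 classes, and that PAM4 carries 2 bits per symbol; treat the table as illustrative, not as a datasheet.

```python
# Sketch: aggregate rate and PAM4 symbol rate for common lane layouts.
# Assumed per-lane line rates (Gb/s, including FEC overhead) for the
# nominal 50G, 100G, and 200G PAM4 lane classes.
LANE_LINE_RATE_GBPS = {50: 53.125, 100: 106.25, 200: 212.5}
PAM4_BITS_PER_SYMBOL = 2  # four amplitude levels carry 2 bits per symbol


def describe(lanes: int, nominal_gbps: int) -> dict:
    """Return nominal aggregate rate, per-lane line rate, and PAM4 baud rate."""
    line_rate = LANE_LINE_RATE_GBPS[nominal_gbps]
    return {
        "layout": f"{lanes} x {nominal_gbps}G",
        "aggregate_gbps": lanes * nominal_gbps,
        "lane_line_rate_gbps": line_rate,
        "symbol_rate_gbaud": line_rate / PAM4_BITS_PER_SYMBOL,
    }


for lanes, speed in [(8, 50), (4, 100), (8, 100), (4, 200)]:
    info = describe(lanes, speed)
    print(f"{info['layout']:>9}: {info['aggregate_gbps']}G aggregate, "
          f"{info['symbol_rate_gbaud']} GBd per lane")
```

Doubling the lane rate at a fixed lane count doubles the symbol rate that the DSP, drivers, and optics must handle, which is where the extra power and tighter margins of early 200G-per-lane modules come from.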

Forward error correction is part of this same physical-layer equation. Common Reed-Solomon schemes allow PAM4 links to operate at realistic pre-FEC error rates, then recover clean post-FEC performance. Without that margin, many 400G and 800G links would not be deployable at useful reaches. But stronger signal conditioning also means more electronics, more heat, and sometimes more latency. This is where retimed optics and linear-drive approaches diverge. Retimed modules generally offer broader interoperability and safer margins. Linear-drive designs can cut module power and latency, but they shift more burden onto host SerDes quality, board design, and validation discipline.
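To show why that FEC margin matters, the sketch below works through the RS(544,514) code (often called KP4 FEC) used by IEEE 802.3 PAM4 Ethernet PHYs: 10-bit symbols and up to 15 correctable symbol errors per codeword. The 2.4e-4 pre-FEC BER is an assumed example input, and the post-FEC estimate assumes independent bit errors, which real links with burst errors do not strictly satisfy, so read it as an optimistic illustration rather than a qualification method.

```python
import math

# RS(544,514) over 10-bit symbols (commonly called KP4 FEC).
N, K, SYMBOL_BITS = 544, 514, 10
T = (N - K) // 2  # correctable symbol errors per codeword


def line_rate_gbps(payload_gbps: float) -> float:
    """Payload rate after 256b/257b transcoding plus RS(544,514) parity."""
    return payload_gbps * 257 / 256 * N / K


def codeword_failure_prob(pre_fec_ber: float) -> float:
    """P(more than T symbol errors in one codeword), assuming independent bit errors."""
    p_sym = 1 - (1 - pre_fec_ber) ** SYMBOL_BITS  # symbol is bad if any bit is bad
    return sum(math.comb(N, i) * p_sym**i * (1 - p_sym) ** (N - i)
               for i in range(T + 1, N + 1))


print(f"parity overhead: {N / K - 1:.2%}")
print(f"correctable symbols per codeword: {T}")
print(f"lane rate for 100G payload: {line_rate_gbps(100):.2f} Gb/s")  # 106.25
print(f"codeword failure at BER 2.4e-4: {codeword_failure_prob(2.4e-4):.1e}")
```

The same parity that lets a noisy PAM4 lane deliver clean frames also adds about 6% to the line rate and requires decoder logic, which is part of the power and latency cost described above.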

Once reach extends beyond 10 kilometers, IM/DD optics become less attractive and coherent standards take over. Coherent pluggables add far more power and cost, but they buy dispersion tolerance, DWDM operation, and metro-scale reach that direct-detect optics cannot match cleanly. For a closer comparison of mainstream single-mode options, see this guide to 400G DR4, FR4, and LR4 transceivers. That progression from SR to DR, FR, LR, and then coherent is the physical-layer logic behind the architecture choices explored next.

Matching 400G and 800G Optics to Real Network Architectures

The practical balance of cost, power, and reach starts with where the link lives in the network. The same 400G or 800G port can be cheap and efficient in one topology, yet wasteful in another. That is why optics selection should follow architecture patterns first, then module specifications.

Inside a rack, or across a short row, the priority is usually low cost and low power. If distance is only a few meters, direct attach or active copper often wins. Once optical reach is required, very short multimode links remain attractive because transceiver pricing is generally favorable and power stays modest. That makes short-reach parallel optics a strong fit for dense east-west traffic, especially where existing multimode cabling is already installed. The tradeoff is limited reach and more dependence on MPO-based cabling discipline.

As links stretch toward end-of-row, leaf-to-spine, or pod-level fabrics, single-mode designs become more compelling. In this range, the choice often narrows to parallel single-mode for 500 m-class links or duplex wavelength-multiplexed optics for 2 km-class links. Parallel single-mode is often the best architectural match when breakout matters. A single high-speed port can fan out efficiently to several lower-speed server, accelerator, or storage endpoints. That pattern is especially important in AI clusters and distributed compute fabrics, where switch radix must be preserved and endpoint speeds do not always match uplink speeds. For that reason, many operators treat DR optics as the default building block for scalable fabrics, especially in GPU-cluster optical interconnects.

Duplex optics become more attractive when the network values operational simplicity over breakout density. FR-class links reduce fiber count, align well with structured single-mode cabling, and simplify patching and moves. They often cost more than DR alternatives, but that premium can be justified when labor, polarity errors, and trunk complexity are considered. This is why many leaf-to-spine and building-scale links settle on FR optics even when DR could meet the raw reach requirement.

Beyond the data hall, the architecture changes again. Campus interconnects and short metro links reward duplex optics up to the point where chromatic dispersion, loss budgets, and operational margin begin to favor coherent transport. At that boundary, the decision is no longer only about reach. It becomes a question of switch thermals, router slot power, latency tolerance, and whether the design benefits from DWDM flexibility.

Seen this way, architecture patterns create a natural optics ladder: short links favor the cheapest and coolest options, fan-out fabrics favor parallel single-mode, structured duplex plants favor FR and LR classes, and DCI pushes toward coherent pluggables. The next step is to quantify how those architectural choices affect long-term energy and cooling costs.
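The ladder can be expressed as a first-pass selection rule. This Python sketch is hypothetical: the function name and inputs are illustrative, and the reach thresholds (a few meters for copper, about 100 m for multimode SR, 500 m for DR, 2 km for FR, 10 km for LR) mirror the typical classes described above rather than any vendor's specifications.

```python
def suggest_optic_class(distance_m: float, fiber: str,
                        needs_breakout: bool = False,
                        duplex_plant: bool = False,
                        copper_max_m: float = 3.0) -> str:
    """Return a rough optics class for one link (hypothetical rule of thumb).

    fiber: "mmf" or "smf", describing the installed or planned plant.
    duplex_plant: True if structured duplex SMF cabling is already in place.
    Thresholds are typical class reaches, not guaranteed specs.
    """
    if fiber not in ("mmf", "smf"):
        raise ValueError("fiber must be 'mmf' or 'smf'")
    if distance_m <= copper_max_m:
        return "DAC/ACC copper"
    if fiber == "mmf":
        # Parallel multimode SR classes top out around 100 m on OM4.
        return "SR (parallel multimode)" if distance_m <= 100 else "beyond SR reach: use SMF"
    # Breakout favors parallel DR; an existing duplex plant favors FR.
    if distance_m <= 500 and (needs_breakout or not duplex_plant):
        return "DR (parallel single-mode)"
    if distance_m <= 2_000:
        return "FR (duplex WDM)"
    if distance_m <= 10_000:
        return "LR (duplex WDM)"
    return "coherent pluggable"


print(suggest_optic_class(300, "smf", needs_breakout=True))
print(suggest_optic_class(300, "smf", duplex_plant=True))
```

The two example calls show the architectural point: the same 300 m link maps to DR when breakout matters and to FR when duplex cabling is already the plant standard.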

Modeling Total Cost of Ownership: How Power and Reach Shape 400G and 800G Optics Economics

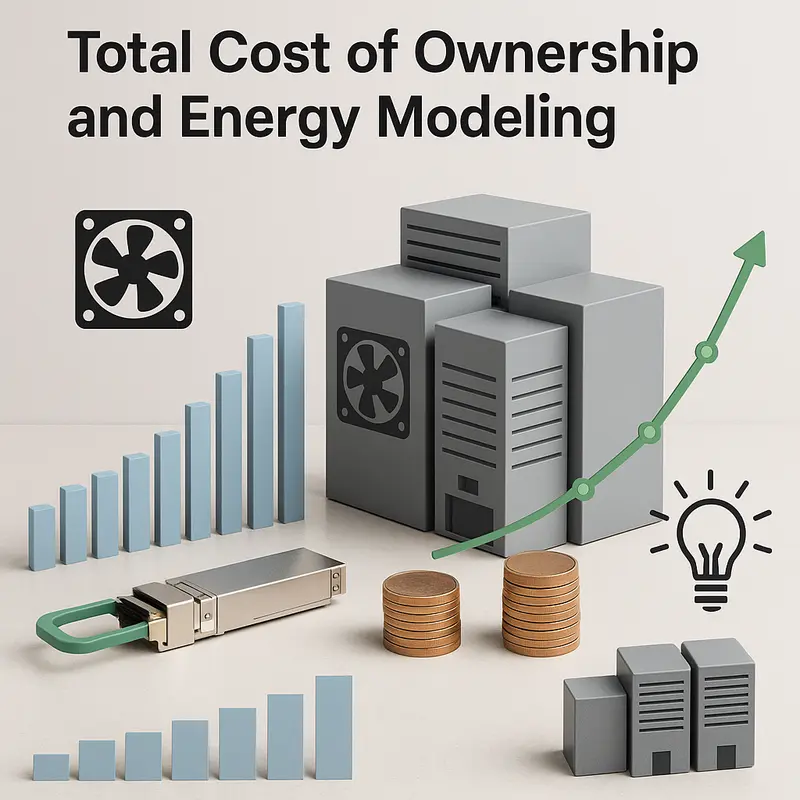

The architecture choice only becomes real when it is translated into a three- to five-year cost model. For 400G and 800G optics, purchase price is only the visible layer. The more important comparison is cost per delivered link over time, shaped by module power, cooling overhead, cabling, sparing strategy, and the operational burden created by each reach option.

A simple ranking often holds at the module level: short-reach multimode options usually start with the lowest transceiver price, parallel single-mode options sit in the middle, duplex wavelength-multiplexed optics cost more, and coherent pluggables remain the premium choice. But that ranking can flip once the fiber plant is included. An apparently cheaper short-reach option may require more fibers, more patching complexity, and more careful polarity control. A higher-priced duplex single-mode module can lower installation labor, reduce troubleshooting time, and delay future recabling.

Power is where TCO becomes more revealing. A useful rule: 1 W drawn continuously for a year is 8.76 kWh, which costs about $0.876 at $0.10/kWh before cooling. In a dense switch, a 4 W delta across 64 ports becomes 256 W of additional IT load. With a PUE of 1.4, facility demand rises to roughly 358 W, or about 3,140 kWh and $314 per year. Across one chassis, that may look manageable. Across hundreds of switches, it becomes a meaningful annual operating expense and a real thermal design constraint.
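The power arithmetic in this paragraph can be reproduced in a few lines of Python. The inputs are the example values from the text (4 W per port, 64 ports, PUE 1.4, $0.10/kWh); the function name is illustrative.

```python
HOURS_PER_YEAR = 8_760


def annual_power_cost(extra_watts_per_port: float, ports: int,
                      pue: float, usd_per_kwh: float) -> tuple[float, float]:
    """Return (facility watts, annual USD) for a per-port power delta."""
    it_watts = extra_watts_per_port * ports
    facility_watts = it_watts * pue  # PUE scales IT load to total facility load
    kwh_per_year = facility_watts * HOURS_PER_YEAR / 1_000
    return facility_watts, kwh_per_year * usd_per_kwh


watts, cost = annual_power_cost(4, 64, pue=1.4, usd_per_kwh=0.10)
# With these inputs: 358.4 W and roughly $314 per year per switch.
print(f"{watts:.1f} W facility load, about ${cost:,.0f} per year per switch")
```

Multiplying by switch count gives the fleet figure: at a hypothetical 500 switches, the same 4 W delta costs about $157,000 per year in energy alone.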

This is why optics with similar reach should never be compared on capex alone. A 400G FR-class module may cost more than a DR-class alternative, yet save money if it reuses duplex single-mode cabling and simplifies moves, adds, and changes. At 800G, the same logic becomes stronger. Modules in the mid-teens of watts are often easy to accommodate. Push into high-teens or low-twenties watts, and airflow design, faceplate density, and heat sink selection start affecting platform efficiency and deployment flexibility. That is especially important in AI fabrics and dense spine layers, as discussed in 800G deployment in data centers.

The most practical TCO model should include five inputs: module acquisition cost, structured cabling cost, annualized power with PUE, expected failure and sparing rates, and migration value. That final factor is often missed. A link choice that supports breakout, preserves duplex fiber, or aligns with the next lane-rate transition can protect future budgets. By contrast, the cheapest optics on day one may create stranded cabling or force earlier replacement.
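Those five inputs fit naturally into a small per-link model. The Python sketch below is a simplified illustration under stated assumptions: energy uses a flat PUE and tariff, failures are priced at a fixed replacement cost, and migration value is entered as a credit. Every price, wattage, and rate in the example is invented for illustration, not a market figure.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8_760


@dataclass
class LinkOption:
    name: str
    module_cost: float          # USD per link, both ends
    cabling_cost: float         # USD per link, installed
    watts: float                # module power per link, both ends
    annual_failure_rate: float  # expected failures per link per year
    replacement_cost: float     # USD per failure, module plus labor
    migration_credit: float     # USD of value retained at the next upgrade


def tco(opt: LinkOption, years: int = 5, pue: float = 1.4,
        usd_per_kwh: float = 0.10) -> float:
    """Total cost per link over the horizon, net of migration credit."""
    energy = opt.watts * pue * HOURS_PER_YEAR / 1_000 * usd_per_kwh * years
    failures = opt.annual_failure_rate * years * opt.replacement_cost
    return (opt.module_cost + opt.cabling_cost + energy + failures
            - opt.migration_credit)


# Invented example inputs: DR-class on new parallel cabling versus
# FR-class reusing an existing duplex plant.
dr = LinkOption("400G DR4-class", 600, 400, 20, 0.02, 500, 0)
fr = LinkOption("400G FR4-class", 800, 150, 24, 0.02, 600, 200)
for opt in (dr, fr):
    print(f"{opt.name}: ${tco(opt):,.0f} over 5 years")
```

With these invented inputs the FR-class option comes out cheaper despite its higher module price, because cheaper duplex cabling and the migration credit outweigh its extra watts; different inputs can easily reverse the result, which is the reason to model rather than assume.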

Seen this way, reach is not just a distance specification. It is an economic trigger. The best 400G or 800G optic is the one that meets reach with the lowest combined cost of optics, watts, cooling, and operational friction, while still leaving room for the next upgrade cycle.

Choosing the Right Form Factor: Thermal Limits, Reliability, and Real-World Tradeoffs in 400G and 800G Optics

As cost models narrow the field, the next filter is physical reality: can the switch, router, and rack actually support the optic you want to deploy? In 400G and 800G environments, form factor is not just a packaging choice. It shapes power headroom, airflow behavior, port density, serviceability, and even which reach classes are practical. A dense 800G platform may look ideal on paper, yet the wrong module mix can create thermal hotspots, force port derating, or limit future migration to longer-reach optics.

This is why form factor decisions should be tied directly to reach and power targets. Lower-power IM/DD links for short and medium reach usually fit comfortably in the mainstream high-density pluggable formats. That is often true for 400G SR8, DR4, and many FR4 deployments, as well as 800G SR8 and some DR8 designs. The picture changes as optical complexity rises. Duplex 2 km and 10 km modules, early 200G-per-lane designs, and especially coherent pluggables place much heavier demands on thermal design. In those cases, larger thermal envelopes and better faceplate cooling become strategic, not optional.

The practical question is not whether one form factor is universally better. It is whether the platform can sustain the module’s real operating power at expected inlet temperatures. A module rated acceptably in the lab may behave differently in a fully populated chassis under summer conditions. Every extra watt adds not only device heat, but also cooling burden across the system. That makes a 2 to 4 watt difference per port important in high-density fabrics. It also explains why operators often reserve the highest-power optics for platforms with more thermal margin, while using lower-power direct-detect modules wherever reach permits.
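A first-pass thermal screen can compare a planned module mix against a platform's per-port power allowance. The sketch assumes a hypothetical 18 W per-port limit and a linear derating of 0.25 W per degree above a 25 °C reference inlet; both are placeholders, and real limits come from the switch vendor's thermal specification.

```python
def thermal_check(module_watts: list[float], port_limit_w: float,
                  inlet_c: float, ref_inlet_c: float = 25.0,
                  derate_w_per_c: float = 0.25) -> list[int]:
    """Return indices of ports whose module exceeds the derated per-port limit.

    Derating model (hypothetical): the allowed power drops linearly by
    derate_w_per_c for every degree of inlet temperature above ref_inlet_c.
    """
    allowed = port_limit_w - max(0.0, inlet_c - ref_inlet_c) * derate_w_per_c
    return [i for i, w in enumerate(module_watts) if w > allowed]


# Hypothetical mix: 28 modules at 14 W plus 4 higher-power modules at 18 W.
ports = [14.0] * 28 + [18.0] * 4
print(thermal_check(ports, port_limit_w=18.0, inlet_c=25.0))  # []
print(thermal_check(ports, port_limit_w=18.0, inlet_c=35.0))  # [28, 29, 30, 31]
```

The same mix passes at a cool inlet and flags the four high-power ports on a hot day, which is the lab-versus-summer gap described above.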

Reliability follows the same logic. Optics fail less often when they operate with margin. High case temperature increases laser stress, raises bias current demands, and can narrow performance tolerance over time. More complex optics also add more components that must remain aligned and stable across temperature swings. This does not make advanced modules unsuitable. It means qualification, interoperability testing, and telemetry matter more as power climbs. DOM and CMIS-accessible diagnostics help teams track receive power drift, temperature excursions, and bias trends before a link degrades. For teams planning mixed-vendor environments, disciplined validation is equally critical, especially for breakouts, FEC behavior, and host compatibility; this is where a guide to reducing compatibility risks with optical modules is directly relevant.
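The telemetry point lends itself to a simple trend check. The Python sketch below assumes periodic DOM/CMIS readings (laser bias in mA, receive power in dBm) have already been collected by whatever tooling the platform provides; it does not read hardware. It fits a least-squares slope to each series and flags drift beyond hypothetical thresholds.

```python
def slope(xs: list[float], ys: list[float]) -> float:
    """Ordinary least-squares slope of ys against xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def drift_alerts(days: list[float], bias_ma: list[float], rx_dbm: list[float],
                 max_bias_slope: float = 0.05,
                 max_rx_drop_db: float = 1.0) -> list[str]:
    """Flag rising laser bias and falling receive power (thresholds hypothetical)."""
    alerts = []
    bias_slope = slope(days, bias_ma)  # mA per day
    if bias_slope > max_bias_slope:
        alerts.append(f"bias rising {bias_slope:.3f} mA/day")
    rx_drop = -slope(days, rx_dbm) * (days[-1] - days[0])  # fitted dB change
    if rx_drop > max_rx_drop_db:
        alerts.append(f"rx power down {rx_drop:.2f} dB over window")
    return alerts


# Hypothetical weekly readings from one module.
days = [0, 7, 14, 21, 28]
bias = [6.0, 6.5, 7.1, 7.6, 8.2]
rx = [-2.0, -2.3, -2.7, -3.0, -3.4]
print(drift_alerts(days, bias, rx))
```

Fitting a slope rather than comparing single readings keeps one noisy sample from triggering an alert while still catching steady degradation well before a link drops.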

Connector choice also affects operational reliability. Parallel optics can be efficient, but they demand cleaner polarity control and stricter cable management. Duplex links simplify moves, adds, and changes. That tradeoff often becomes decisive when scaling from a technically valid design to one that remains supportable over years of expansion.

Future-Proofing 400G and 800G Optics: Migration Paths and Risk Controls That Protect Cost, Power, and Reach

The hardest part of optics selection is not choosing what works now. It is choosing what will still look sensible after the next switch refresh, the next rack density jump, and the next change in application traffic. A sound roadmap starts by separating stable choices from transitional choices. Today, 100G-per-lane ecosystems are the stable core. That makes 400G DR4 and FR4, plus 800G SR8, DR8, and 2×FR4, the safest volume deployments. They are well understood, broadly interoperable, and easier to cost-model over three to five years. By contrast, 200G-per-lane 800G FR4 and LR4 promise better fiber efficiency, but early generations still carry maturity risk in power, thermals, and price.
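The fiber-efficiency argument is easy to quantify. The sketch below counts fibers per 800G link for the options named above, assuming parallel variants use one fiber per lane in each direction (SR8 and DR8: 16 fibers), 2×FR4 uses two duplex pairs, and a 200G-per-lane FR4 uses a single duplex pair. The 1,024-link fabric size is a hypothetical example.

```python
# Fibers per link: parallel optics use one fiber per lane per direction;
# WDM duplex optics use one Tx/Rx pair per multiplexed wavelength group.
FIBERS_PER_LINK = {
    "800G SR8 (8 x 100G, MMF)": 8 * 2,
    "800G DR8 (8 x 100G, SMF)": 8 * 2,
    "800G 2xFR4 (2 duplex pairs)": 2 * 2,
    "800G FR4 (4 x 200G, 1 duplex pair)": 1 * 2,
}


def fibers_for_fabric(links: int) -> dict[str, int]:
    """Total fiber strands needed for a given number of point-to-point links."""
    return {name: per_link * links for name, per_link in FIBERS_PER_LINK.items()}


for name, strands in fibers_for_fabric(1_024).items():
    print(f"{name:<36} {strands:>6} fibers")
```

The eightfold gap between DR8 and single-pair FR4 is the fiber-efficiency payoff the 200G-per-lane generation promises, to be weighed against its current maturity risk.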

That does not mean waiting is always wise. It means staging adoption. A practical migration plan uses mature optics for immediate scale, then reserves space, thermal headroom, and cabling options for later upgrades. In many data centers, that favors DR8 or 2×FR4 now, especially when breakout flexibility or duplex simplicity matters more than minimizing wavelength count. If the environment is being built around AI fabrics or dense east-west traffic, planning around SMF and higher-density patching usually gives a cleaner path from 400G to 800G and eventually beyond. The same logic appears in many 800G deployment patterns for data centers: avoid locking the plant to a single generation of optics when switch and server roadmaps are moving faster than cabling lifecycles.

Risk mitigation, then, becomes an engineering discipline rather than a procurement afterthought. The first control is standards alignment. Match host lane rates, FEC expectations, connector strategy, and breakout needs before comparing module quotes. The second is thermal margin. A link that works in a lab may fail in a full chassis at higher inlet temperatures. The third is interoperability testing across realistic patch-panel loss, not just direct connections. This matters more as designs push lower power through linear-drive approaches or early 200G-per-lane optics, where signal integrity budgets tighten.
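The patch-panel point can be checked arithmetically before any lab testing. This sketch sums fiber attenuation and mated-connection losses along a channel and compares the total with a channel insertion-loss budget. The 0.4 dB/km attenuation, 0.5 dB per connection, and 3.0 dB budget are assumed placeholder values, and each cross-connect is assumed to add two mated connections; take real figures from the applicable IEEE 802.3 clause or the module datasheet.

```python
def channel_loss_db(length_km: float, connections: int,
                    fiber_db_per_km: float = 0.4,
                    loss_per_connection_db: float = 0.5) -> float:
    """Estimated insertion loss: fiber attenuation plus mated connections."""
    return length_km * fiber_db_per_km + connections * loss_per_connection_db


def margin_db(budget_db: float, length_km: float, connections: int) -> float:
    """Remaining margin; a negative value means the channel exceeds the budget."""
    return budget_db - channel_loss_db(length_km, connections)


# Same 400 m run: direct (2 connections) versus through two
# cross-connects (assumed 4 extra mated connections).
print(f"direct: {margin_db(3.0, 0.4, 2):.2f} dB margin")
print(f"via cross-connects: {margin_db(3.0, 0.4, 6):.2f} dB margin")
```

A run that passes comfortably on a direct connection can fall below budget once realistic patching is added, which is why testing should use the intended patch-panel topology.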

Supply-chain resilience also belongs in the roadmap. Advanced lasers, DSPs, and coherent components can face uneven availability. Dual-sourcing, qualified alternatives, and sparing policies reduce that exposure. For longer reach, coherent adoption should be timed to platform capability, since power envelopes and airflow limits can determine whether a migration is elegant or disruptive.
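Sparing policy can be sized with a standard Poisson approximation: expected failures during the replenishment lead time set the mean, and the spare count is the smallest stock that covers demand at a chosen service level. The installed base, annualized failure rate, lead time, and 99% target below are hypothetical inputs, and the Poisson arrival assumption is a common simplification rather than a property of any particular module.

```python
import math


def spares_needed(installed: int, annual_failure_rate: float,
                  lead_time_days: float, service_level: float = 0.99) -> int:
    """Smallest s with P(failures during lead time <= s) >= service_level.

    Assumes failures arrive as a Poisson process.
    """
    if not 0 < service_level < 1:
        raise ValueError("service_level must be between 0 and 1")
    mean = installed * annual_failure_rate * lead_time_days / 365
    s = 0
    term = math.exp(-mean)  # P(exactly s failures), starting at s = 0
    cumulative = term
    while cumulative < service_level:
        s += 1
        term *= mean / s  # Poisson recurrence: P(s) = P(s-1) * mean / s
        cumulative += term
    return s


# Hypothetical: 2,000 modules, 1% AFR, 60-day lead time for a constrained part.
print(spares_needed(2_000, 0.01, 60))
```

Rerunning with a longer lead time shows how supply constraints on lasers or DSPs translate directly into more capital tied up in spares.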

The best roadmap is rarely the most aggressive. It is the one that delivers needed reach today, preserves power and cooling margins tomorrow, and keeps upgrade risk low when economics shift in favor of the next optics class.

Final thoughts

As data center demand for high-speed connectivity grows, striking the right balance between cost, power, and reach is crucial for sustaining scalability and efficiency. Standards-based optics, use cases mapped to deployment patterns, and realistic TCO models together support sound decisions in 400G and 800G optical adoption. With rapid innovation in 200G-per-lane technologies and coherent pluggables, careful planning today positions organizations for tomorrow’s requirements. The strategies outlined here provide a roadmap for optics selections that maximize throughput while managing thermal, capex, and opex constraints.

Talk to ABPTEL about high-speed optics, MTP/MPO cabling, and data center interconnect solutions.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides high-speed optical transceivers, MTP/MPO cabling systems, DAC and AOC cables, PoE switches, FTTA solutions, and fiber tools for data center, AI, telecom, and network infrastructure projects.

Talk to ABPTEL

Looking for the right optical hardware for your AI data center, GPU cluster, or FTTA project? ABPTEL ships from Shenzhen with OEM/ODM support, fast lead times, and engineering-level pre-sales advice.

- 🔥 400G & 800G OSFP / QSFP-DD Transceivers — for AI training fabrics and hyperscale spine-leaf

- 📡 MPO / MTP High-Density Cabling — 12 / 24 / 32-fiber for high-density data centers

- ⚡ AOC & DAC Cables — short-reach GPU interconnects, OEM compatible

- 🧩 SFP / SFP+ / SFP28 / QSFP28 Modules — 1G to 100G optical transceivers

- 📋 Data Center Cabling Solutions — end-to-end design guide

- ❓ Read our FAQ — compatibility, polarity, lead time, MOQ

💬 Get a quote in 12 hours: Contact Candy · WhatsApp +86 188 1445 5697 · candy@abptel.com