Latency is no longer an afterthought in AI data center design. As machine learning workloads scale across thousands of GPUs, even microsecond-level delays ripple through cluster performance, affecting training throughput, inference speed, and overall efficiency. Every layer of the optical interconnect stack—be it transceivers, switching, protocols, or topologies—plays a role in ensuring low latency. This article unpacks the multifaceted importance of minimizing latency, from the physics of signal propagation to the intricate dance of network collectives, while offering practical insights on balancing power, cost, and reliability. Each chapter will guide you through how latency impacts AI workloads, network architecture, and ROI, ultimately preparing you to design networks that meet the rigorous demands of next-gen AI. Let’s explore why securing low latency isn’t just a technical advantage—it’s a business imperative.

From Fiber to FEC: The Physical-Layer Decisions That Shape AI Interconnect Latency

Low latency in AI data centers begins below the network stack, at the physical layer where every nanosecond is either preserved or surrendered. Before topology, congestion control, or collective algorithms can help, the link itself sets the baseline. That baseline is built from propagation delay, serialization time, transceiver processing, forward error correction, retimers, and the electrical path between switch silicon and optics. In a distributed training cluster, those small delays compound across hops, across collective steps, and across thousands of synchronized accelerators.

Propagation delay is the cleanest part of the budget. Light in fiber travels at roughly 5 ns per meter, so a 100-meter run adds about 0.5 microseconds. That seems minor until the path crosses rows, pods, and multiple switching stages. Serialization is even smaller for tiny packets on high-speed links, yet control traffic and collective metadata still feel it. At 400G and 800G, serialization shrinks sharply, which helps, but faster line rates alone do not solve latency if transceiver pipelines and FEC grow more complex.

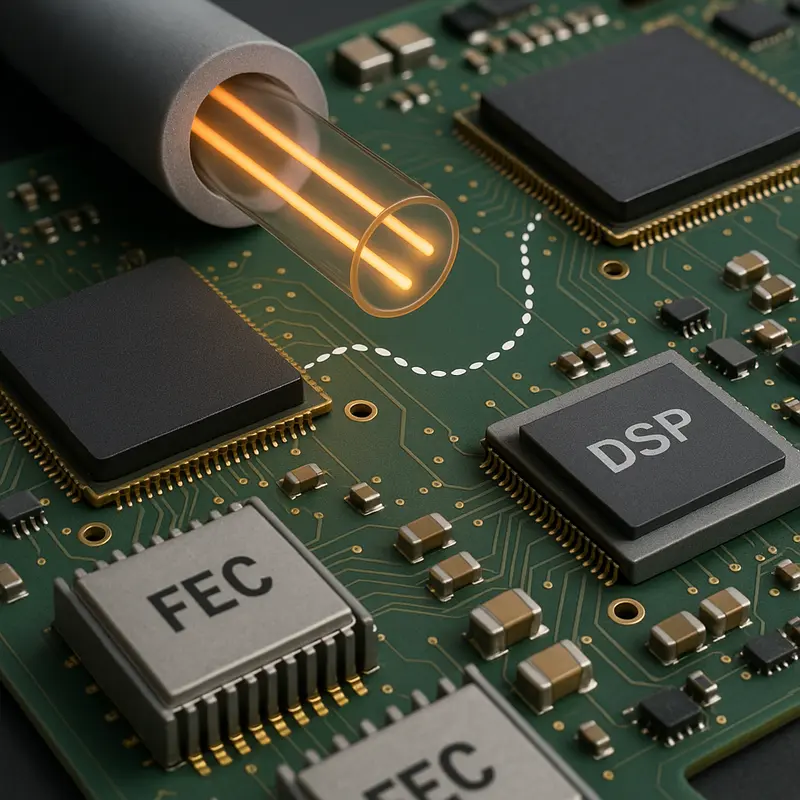

That is why optical design choices matter so much. Short copper links inside a rack add very little delay, but their reach is limited. Once traffic leaves the rack, optics become mandatory, and module architecture starts to dominate the physical-layer tradeoff. DSP-heavy PAM4 optics support dense, high-speed links, yet they introduce processing delay through equalization, clock recovery, and FEC. Those stages often add tens to hundreds of nanoseconds in each direction. Linear-drive optics reduce that burden by stripping out much of the onboard signal processing. The reward is lower latency and lower power, but only if the electrical channel is clean enough to tolerate tighter margins.

FEC sits at the center of this compromise. AI fabrics cannot afford frequent errors, because retransmissions destroy tail latency. Yet stronger coding means deeper processing and more delay. On short, well-controlled links, low-latency FEC profiles can preserve signal integrity without turning every packet into a longer pipeline event. The same logic applies to retimers and long board traces. Each extra element may improve reach or robustness, but it also adds delay and jitter. Designs that shorten electrical paths, or move optics closer to the switch, reduce both.

This is also where link budgets stop being abstract engineering math. In AI clusters, a cleaner optical path can translate directly into higher accelerator utilization. Lower-loss channels allow simpler modules, lighter FEC, and fewer signal-conditioning stages. That is the practical bridge between photonic design and training efficiency. For readers interested in how fiber choices affect transmission performance more broadly, how OPGW cable enhances data transmission offers useful context. From here, the next layer of latency comes from how those physical links are assembled into switching fabrics, where hop count, forwarding behavior, and transport design decide whether nanoseconds stay small or become queue-driven microseconds.

Designing AI Optical Fabrics for Speed: How Topology, Switching, and Protocols Shape Latency

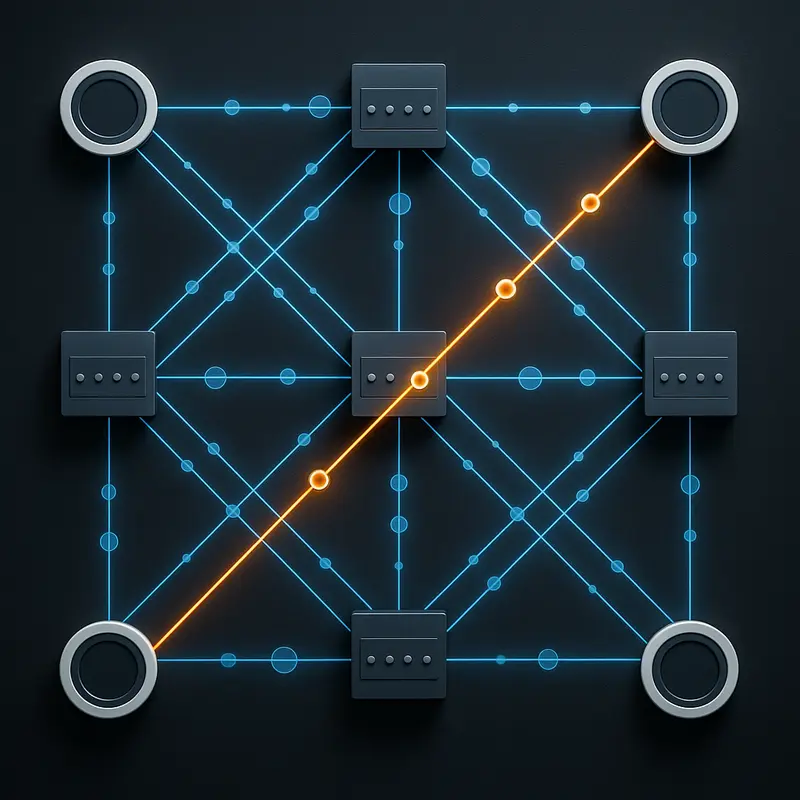

The physical link sets the floor for latency, but fabric design decides whether that floor is preserved or wasted. In AI clusters, that decision is rarely abstract. A synchronous training job waits for the slowest participant, so every extra hop, queue, and protocol transition can turn into idle accelerators. This is why topology, switching behavior, and transport choices matter as much as optical reach or module design.

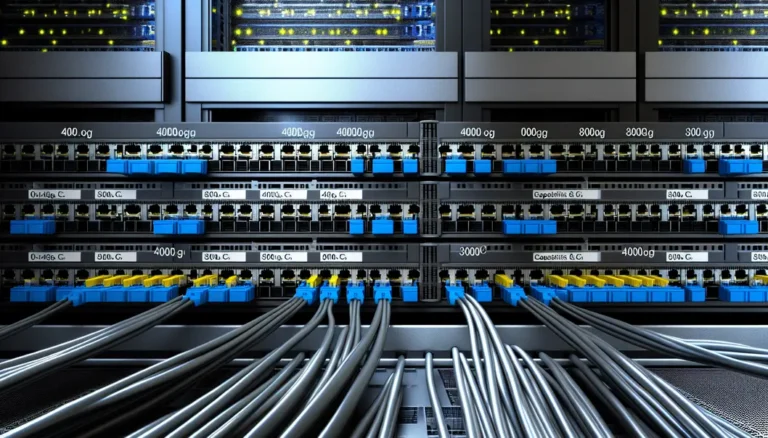

A well-built AI fabric starts by limiting hop count. Clos and fat-tree designs remain popular because they offer predictable east-west paths and bounded latency. In practice, most flows cross three to five switching stages. That sounds small, yet each stage adds silicon delay, arbitration time, and potential queueing. Multiply that by collective patterns such as all-reduce or all-to-all, and small per-hop penalties become measurable step-time losses. Higher-radix designs help because they flatten the network. Fewer tiers mean fewer serialization events, fewer buffer touches, and fewer chances for congestion to build.

Switching mode matters too. Cut-through forwarding keeps packets moving after only the header is examined, which trims latency for the small messages that coordinate distributed compute. Store-and-forward can protect integrity, but it adds delay that AI control traffic often cannot afford. Deep buffers offer another trade-off. They can absorb bursts, yet they also increase minimum memory access time and make congestion harder to detect early. For AI workloads, shallow and intelligently managed buffering is often better than simply adding more packet memory.

Protocol design then determines how much software stands between one accelerator and another. Kernel-bypass transports and remote direct memory access remove copies and reduce host intervention, keeping one-way latency in the microsecond class. That matters most for collective metadata, synchronization messages, and small shards that gate the next compute phase. A general-purpose stack may still deliver bandwidth, but it often adds too much jitter for tightly synchronized training or multi-stage inference.

The hardest problem is not average latency but tail latency. Incast, all-to-all bursts, and poorly placed jobs can create microbursts that add tens or even hundreds of microseconds. At that point, optical efficiency is no longer the bottleneck; queueing is. Congestion control must therefore be proactive, using explicit signals and pacing rather than broad pause mechanisms that spread delay across unrelated flows. This is also where telemetry becomes operationally critical. Fine-grained visibility into hot spots, buffer occupancy, and path imbalance allows operators to correct the fabric before p99 latency harms throughput. Even broader network planning principles, such as understanding how a switch directs and prioritizes traffic, reinforce the same lesson: the fastest optical interconnect is only as effective as the network architecture wrapped around it.

Synchronizing at Scale: Why Low-Latency Optical Interconnects Define AI Training and Inference Performance

In large AI clusters, distributed training succeeds or stalls on synchronization speed. Compute may happen on thousands of accelerators at once, but progress still waits for the slowest exchange. That is why low latency matters so much in optical interconnect design. Every collective operation creates a barrier. Every barrier exposes network delay. When those delays repeat across an entire run, microseconds turn into idle GPUs, lower throughput, and longer job completion times.

This effect is strongest in synchronous training. Data-parallel and model-parallel jobs depend on all-reduce, all-gather, reduce-scatter, and all-to-all traffic. For small and medium messages, latency often matters more than raw bandwidth. A ring collective, for example, pays a per-step latency cost across many stages. Add a few hundred nanoseconds to each step, then multiply that by node count, collectives per iteration, and iterations per training run. The result is no longer trivial. It becomes a meaningful loss in utilization and training efficiency.

Optical interconnect choices sit directly inside that latency budget. Fiber propagation inside a data center is fast, but not free. A 100-meter path adds about half a microsecond. Switch hops add more. Optical modules can add tens to hundreds of nanoseconds through digital signal processing, clock recovery, and forward error correction. In a typical leaf-spine path, these pieces stack quickly. Even before congestion appears, several microseconds can vanish into the network. Under bursty traffic, queueing can dwarf everything else and stretch into tens or hundreds of microseconds, making the slowest rank determine the speed of the whole job.

Inference is different in shape, but not in sensitivity. Token generation is sequential, and distributed serving spreads work across stages, memory pools, and retrieval systems. That makes tail latency critical. A modest increase in p99 network delay can show up directly as slower response time. For interactive systems, those extra waits are visible to users. For operators, they reduce request capacity and make service-level targets harder to hold.

This is why designers push for shorter electrical paths, fewer retimers, lower-latency optics, and cleaner congestion behavior. Techniques such as kernel bypass, direct memory access from accelerators, cut-through forwarding, and in-network reduction all aim at the same goal: reduce waiting between compute phases. Physical infrastructure still matters here. Careful fiber planning and deployment discipline, much like the considerations discussed in how optical cable enhances data transmission, help preserve signal integrity and support tighter latency budgets. At AI scale, the network is not just a transport layer. It is part of the compute system itself.

Why Low-Latency Optical Interconnects Demand a Careful Balance of Power, Cost, and Reliability

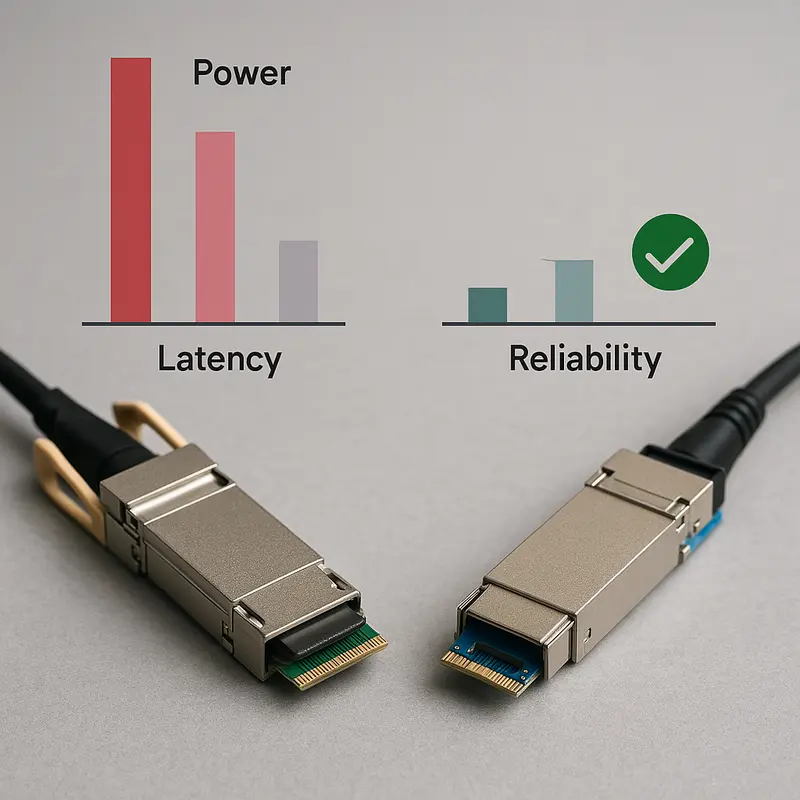

Low latency is only valuable if the network can sustain it at scale, day after day, without turning power, failure rates, or capital expense into a larger problem. That is the central design tension in AI data center optical interconnects. The fastest path is not always the cheapest path, and the lowest-power option is not always the most forgiving. Designers are constantly trading electrical margin, optical reach, thermal headroom, and error resilience against the need to keep GPUs busy.

This trade-off becomes sharp in short-reach AI fabrics. DSP-heavy optical modules can improve signal recovery and support longer or noisier channels, but they also add latency through equalization pipelines and forward error correction. They draw more power as well, which compounds across thousands of links. A few extra watts per port may look minor in isolation. Across a large leaf-spine fabric, it becomes a serious cooling and operating cost burden. By contrast, lower-latency approaches that reduce or remove heavy DSP stages can cut both delay and power, but they demand cleaner host channels, tighter signal integrity, and less tolerance for design mistakes.

Reliability complicates the picture further. AI training jobs do not respond well to packet loss or unstable links. One corrupted flow can stall a collective and turn a small physical-layer problem into a cluster-wide slowdown. That is why FEC cannot simply be treated as wasted latency. It is often the mechanism that prevents retransmissions and protects p99 performance. The real question is not whether to use error correction, but how much coding gain is needed for a given reach and channel quality. On short, clean links, lower-latency FEC profiles may be the right answer. On harsher channels, deeper protection may save more time than it costs.

Power also shapes topology choices. Lower-power optics can ease thermal density and make higher-radix designs more practical, which reduces hop count and indirectly lowers latency. That makes the economics nonlinear. Spending more on efficient optics or cleaner board design can reduce switch layers, cut cooling demand, and improve utilization at the same time. In large AI clusters, those second-order gains often outweigh the price delta of any single optical link.

The same balancing act appears in operations. Conservative designs with more margin may simplify deployment, while aggressively optimized links can deliver better latency but require tighter validation and monitoring. Teams that want both speed and stability need disciplined qualification, continuous telemetry, and a supply strategy grounded in component quality. That broader sourcing and quality lens is familiar in adjacent fiber infrastructure markets, where quality standards and certifications often determine long-term reliability as much as raw specifications. In AI fabrics, the lesson is similar: the best low-latency interconnect is not the one with the smallest datasheet number, but the one that delivers low delay, acceptable BER, manageable power, and predictable cost at production scale.

The Next Latency Frontier: Future Optical Interconnect Trends and Supply Chain Risks in AI Data Centers

As AI clusters move toward 800G, 1.6T, and eventually 3.2T links, the latency question becomes harder, not easier. Higher throughput reduces serialization time, but faster lanes usually demand more signal processing and stronger error correction. That creates a familiar tension: every added layer that protects reach and bit integrity can also add delay. For AI fabrics, where microseconds compound across hops and collective steps, the winning designs will be the ones that scale bandwidth without rebuilding latency in the optical path.

This is why the industry direction matters. Short-reach intra-data-center links are increasingly favoring architectures that remove unnecessary electrical distance, retimers, and deep DSP pipelines. Linear-drive optics and co-packaged approaches are attractive not only for power savings, but because they cut nanoseconds and reduce jitter sources. As switch radix rises, these designs also help flatten network diameter. Fewer hops mean fewer opportunities for queue buildup and fewer places where synchronization-sensitive traffic can stall. In large training clusters, that system-level effect often matters more than the raw port speed printed on a module.

At the same time, next-generation optics will not be defined by performance alone. Supply chain resilience is becoming a design constraint. Optical modules depend on a globally distributed stack of lasers, photonic components, packaging capacity, high-speed electronics, and test infrastructure. A disruption in any layer can delay deployment, narrow vendor choice, or force operators toward less optimal latency-power trade-offs. The same is true for switch silicon, advanced interconnect components, and manufacturing regions exposed to export controls or geopolitical friction. In practice, this means low-latency design is no longer just a technical exercise. It is also a sourcing strategy.

That sourcing strategy has direct architectural consequences. If the preferred low-latency optical option is constrained, operators may need to fall back to modules with deeper DSP pipelines, higher power draw, or longer lead times. If advanced switch platforms are limited, fabrics may be built with lower radix and more hops, raising both baseline and tail latency. Even standards evolution can shift risk. New Ethernet generations improve interoperability, but transitions often create temporary shortages, uneven qualification cycles, and mixed-fleet environments that complicate latency tuning.

For that reason, forward-looking AI data center planning should treat optical technology roadmaps and supplier diversification as part of the latency budget. The most competitive infrastructures will pair clean physical-layer design with pragmatic procurement discipline, using multiple qualified sources, standard-aligned components, and clear reach targets. Teams tracking broader fiber market shifts, including global trends in fiber optic cable supply and market dynamics, are often better positioned to anticipate where latency-saving upgrades will be practical, affordable, and available at scale.

Final thoughts

Low latency isn’t just a technical aspiration; it’s a critical enabler of AI efficiency and scalability. By understanding the intricate interplay of physical layers, topologies, protocols, and associated trade-offs, data center architects can design networks optimized for next-gen AI workloads. The journey to reducing latency translates directly into faster training times, improved inference responsiveness, and better cost efficiencies—all while addressing growing power and reliability challenges. With emerging technologies like LPO and co-packaged optics, the future of low-latency interconnects is brighter than ever, but only for those prepared to act.

Explore how ABPTEL can accelerate your AI data center with low-latency optical interconnects and cutting-edge solutions.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides advanced optical transceivers, MTP/MPO cabling systems, high-performance DACs and AOCs, PoE switches, and FTTA solutions tailored for AI, data centers, telecom, and networking projects. With a focus on high-speed, low-latency interconnects, ABPTEL ensures businesses achieve unparalleled efficiency and scalability in infrastructure.