High-speed optical networks form the backbone of today’s data centers, AI infrastructure, and global connectivity, delivering high capacity through technologies such as DWDM and coherent modulation. They are also vulnerable to a wide range of failures, from physical-layer impairments to software faults and geopolitical disruptions. For data center network engineers, IT planners, and system integrators responsible for keeping services running, understanding these vulnerabilities is essential. This article examines the main causes of link failure in five areas: the fiber plant, active components, the control plane, environmental and human factors, and security. Taken together, these sections give practitioners a basis for diagnosing failures and improving resilience.

Where Optical Links Physically Break Down: Fiber Plant Impairments Behind High-Speed Network Failures

High-speed optical links often fail long before a transceiver dies or software makes a bad decision. The physical path itself is usually the first place margin disappears. In terrestrial networks, the most visible cause is still the fiber cut. Construction crews, drilling activity, rodent damage, and vandalism can sever a sheath and trigger immediate loss of signal. In submarine systems, anchors and fishing gear remain dominant hazards, especially in shallow waters. These events are dramatic, but many failures begin as quieter degradations that slowly consume the link budget.

A common example is contamination at connectors and patch points. A clean connection may add only a few tenths of a decibel of loss, but dirt, oil, or poor mating can push that much higher and worsen reflectance. In coherent systems, that extra reflection is not harmless. It can interact with tight margins, raise error rates, and destabilize wavelengths that already sit close to their forward error correction threshold. Poor fusion splices create a similar pattern. One bad splice rarely causes an outage by itself, yet enough small defects across a route can reduce OSNR margin until normal temperature swings or traffic changes trigger a failure. This is why disciplined inspection and tools such as an optical time-domain reflectometer (OTDR) matter so much in fault isolation.
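A rough way to see how small penalties consume the budget is to add them up. The sketch below is a simple power budget, not a full OSNR analysis, and every figure in it (fiber attenuation, connector and splice losses, transmit power, receiver sensitivity) is an assumed example value rather than a measurement from any real link.

```python
def link_loss_db(length_km, fiber_db_per_km, connector_losses_db, splice_losses_db):
    """Total passive loss of a span in dB: fiber attenuation plus every joint."""
    return length_km * fiber_db_per_km + sum(connector_losses_db) + sum(splice_losses_db)


def remaining_margin_db(tx_power_dbm, rx_sensitivity_dbm, total_loss_db):
    """Power margin left after passive loss; a negative value means no budget is left."""
    return (tx_power_dbm - rx_sensitivity_dbm) - total_loss_db


# Hypothetical 40 km span with four connectors and ten splices (assumed values).
clean = link_loss_db(40, 0.22, [0.3] * 4, [0.05] * 10)
# Same span with two contaminated connectors and two poor splices (assumed values).
dirty = link_loss_db(40, 0.22, [0.3, 0.3, 1.5, 1.2], [0.05] * 8 + [0.4, 0.5])

for label, loss in (("clean", clean), ("dirty", dirty)):
    margin = remaining_margin_db(0.0, -14.0, loss)
    print(f"{label}: loss {loss:.2f} dB, margin {margin:.2f} dB")
```

With these assumed numbers, two dirty connectors and two weak splices remove most of the margin without any single element looking like an outage on its own.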

Mechanical stress is another frequent and underestimated cause. Tight bends in trays or patch panels create macrobending loss, and pinched or compressed cable creates microbending loss; both often show up first at longer wavelengths. Water ingress and compromised splice closures can make attenuation intermittent, especially during rain or humidity cycles. Aerial fiber adds more instability, because wind and vibration can introduce polarization changes and short PMD spikes. Modern coherent receivers compensate for large impairments, but only within limits. If differential group delay changes too quickly, the receiver can lose tracking and the link may flap rather than fail cleanly.

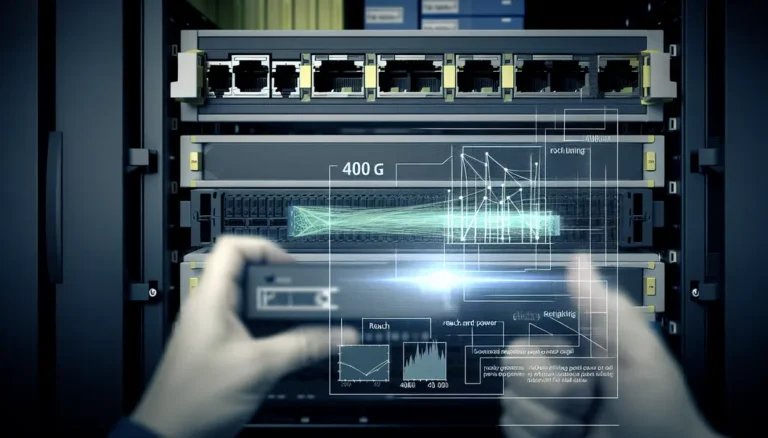

Dense optical routing adds another layer of fragility. Every mux, demux, and wavelength-selective filter slightly reshapes the channel. After multiple passes, passbands narrow, ripple accumulates, and polarization-dependent loss increases. That matters far more at 400G and above, where higher-order modulation tolerates less OSNR degradation and less spectral distortion. The result is a familiar outage chain: small passive losses accumulate, the noise margin shrinks to one or two decibels, then a heat event, wavelength drift, or add-drop change pushes pre-FEC BER beyond recovery. What looks like a sudden link failure is often the final step in a long physical-layer decline.
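The passband narrowing described above can be estimated with a simple model. The sketch below assumes every filter is identical, perfectly centered, and has a super-Gaussian power response; that is a common modelling simplification, not a vendor specification, and the 75 GHz starting bandwidth is an assumed example value. Real cascades with center-frequency offsets narrow faster than this.

```python
def cascaded_bandwidth_ghz(single_bw_ghz, passes, order=2):
    """3 dB bandwidth after `passes` identical, aligned super-Gaussian filters.

    Assumes each filter has power response exp(-ln2 * (2f/B)**(2*order)).
    Multiplying `passes` such responses gives a 3 dB bandwidth of
    B * passes**(-1 / (2*order)).
    """
    if passes < 1:
        raise ValueError("passes must be at least 1")
    return single_bw_ghz * passes ** (-1.0 / (2 * order))


# Assumed example: 75 GHz filters, second-order super-Gaussian shape.
for passes in (1, 2, 4, 8, 16):
    print(passes, round(cascaded_bandwidth_ghz(75.0, passes), 1))
```

Under this model, sixteen passes through a second-order filter halve the usable bandwidth, which is why a route that was comfortable at a lower symbol rate can become marginal at 400G and above.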

When the Optics Themselves Give Way: Active Component Degradation Behind Link Failures in High-Speed Optical Networks

If the previous causes live in the fiber plant, this class of failures begins inside the equipment that generates, amplifies, filters, and steers light. In high-speed optical networks, active components often fail less dramatically than a cable cut, yet they can be just as disruptive. A transceiver may stay powered while its laser drifts off frequency. An amplifier may still provide gain while its noise figure worsens. A wavelength switch may continue passing traffic while its filter shape slowly narrows. These are the failures that consume margin first and capacity next.

Transceivers are usually the first place where degradation becomes visible. Aging lasers, modulators, and receiver assemblies can push a link from stable operation into intermittent errors. Temperature is a major accelerator. As heat rises, wavelength stability, output power, and digital signal processing margins all tighten. In coherent systems, that can appear as cycle slips, rising pre-FEC BER, or loss of carrier lock before a hard outage occurs. Firmware also matters. Faulty alarm handling or power negotiation in pluggables can create flapping behavior that looks like a line issue but is actually local to the module. This becomes more important as operators move toward denser interfaces and higher speeds, as discussed in this guide to 400G transceiver procurement.
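One way to track how close a module is to failure is to convert pre-FEC BER into an equivalent Q-factor and compare it with the Q that corresponds to the FEC limit. The sketch below uses the standard binary relationship BER = 0.5 * erfc(Q / sqrt(2)) and inverts it by bisection. The FEC threshold and the measured BER values are assumptions for illustration; the real threshold depends on the FEC scheme in the module.

```python
import math


def ber_from_q(q_linear):
    """BER for a given linear Q-factor under the Gaussian-noise approximation."""
    return 0.5 * math.erfc(q_linear / math.sqrt(2))


def q_db_from_ber(ber):
    """Equivalent Q-factor in dB (20*log10 Q) for a BER, found by bisection."""
    if not 0.0 < ber < 0.5:
        raise ValueError("ber must be between 0 and 0.5")
    lo, hi = 0.0, 40.0
    for _ in range(200):
        mid = (lo + hi) / 2
        if ber_from_q(mid) > ber:
            lo = mid  # Q too small, BER still too high
        else:
            hi = mid
    return 20 * math.log10((lo + hi) / 2)


def q_margin_db(measured_ber, fec_threshold_ber):
    """Positive margin means the measured BER is still better than the FEC limit."""
    return q_db_from_ber(measured_ber) - q_db_from_ber(fec_threshold_ber)


# Assumed values: a hypothetical FEC limit of 2e-2 and two pre-FEC BER readings.
for reading in (1e-3, 1.5e-2):
    print(f"pre-FEC BER {reading:.1e}: margin {q_margin_db(reading, 2e-2):.2f} dB")
```

Trending this margin over time tends to be more informative than watching for a hard alarm, because the value shrinks gradually as the module heats or ages.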

Amplification stages introduce another common failure path. An optical amplifier does not need to shut down completely to break a service. A weakening pump can reduce gain and raise amplified spontaneous emission noise at the same time. That combination eats directly into OSNR margin. Edge channels often fail first because spectral tilt grows as gain flattening drifts. In reconfigurable networks, add-drop events can also trigger power transients. If transient control is poor, receivers may briefly see overload, underpower, or burst errors severe enough to drop traffic.
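The effect of a weakening amplifier on OSNR can be approximated with the widely used rule of thumb for a chain of identical amplified spans, referenced to a 0.1 nm noise bandwidth near 1550 nm: OSNR is about 58 + launch power - span loss - noise figure - 10*log10(number of spans), all in dB or dBm. The sketch below applies it; the span count, losses, and the degraded values are assumed for illustration, and modelling a pump problem as a 1 dB lower launch power plus a 1.5 dB higher noise figure is itself a simplification.

```python
import math


def osnr_db(launch_dbm, span_loss_db, noise_figure_db, spans):
    """Approximate OSNR (0.1 nm reference) after `spans` identical amplified spans."""
    if spans < 1:
        raise ValueError("spans must be at least 1")
    return 58.0 + launch_dbm - span_loss_db - noise_figure_db - 10 * math.log10(spans)


# Assumed healthy line: 0 dBm per channel, 20 dB spans, 5 dB noise figure, 10 spans.
healthy = osnr_db(0.0, 20.0, 5.0, 10)
# Assumed degraded line: 1 dB less launch power and 1.5 dB more noise figure.
degraded = osnr_db(-1.0, 20.0, 6.5, 10)

print(f"healthy {healthy:.1f} dB, degraded {degraded:.1f} dB, "
      f"lost {healthy - degraded:.1f} dB")
```

The two penalties add directly in dB, which is why a pump that both loses gain and raises noise costs more margin than either symptom suggests alone.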

ROADMs add flexibility, but they also add precision-dependent hardware that can age or misbehave. Wavelength-selective switching elements can drift in center frequency or compress usable passbands. At 100G, a network might tolerate modest filtering errors. At 400G and above, especially with higher-order modulation, the same error can become fatal. A few gigahertz of misalignment across several hops can distort the signal constellation, raise BER, and push FEC beyond recovery.

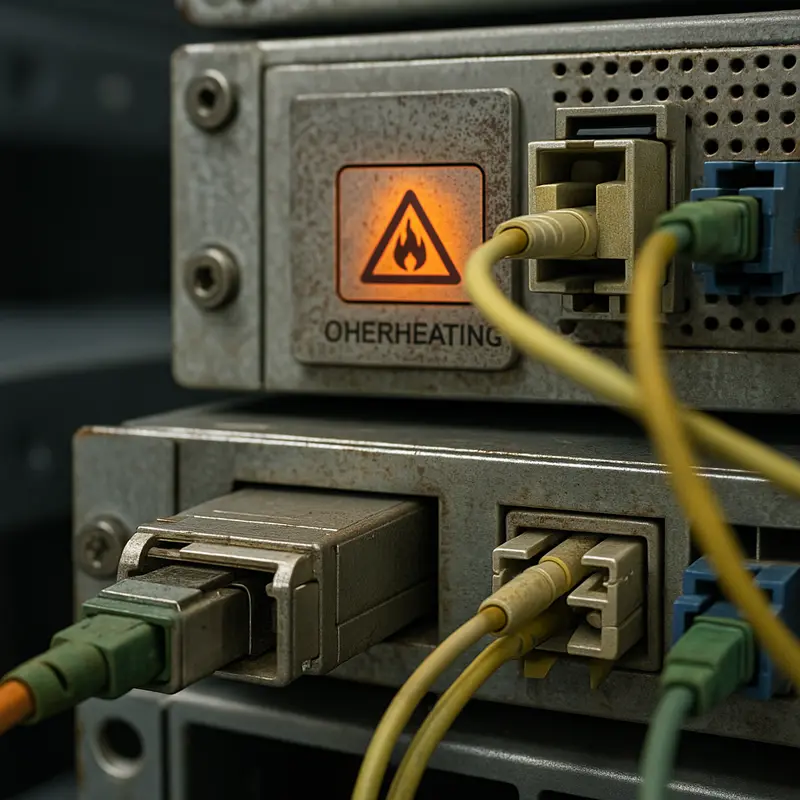

Many of these failures share the same pattern: the hardware remains partially functional while network margin quietly disappears. Fan degradation, dusty air paths, marginal power supplies, and poor thermal control often sit upstream of the optical symptom. By the time alarms escalate to loss of signal, the real cause may have been building for weeks in pump current trends, wavelength drift, or rising correctable errors. That is why hardware degradation sits between fiber impairment and software fault in the failure chain: it turns normal operating variance into service-affecting instability.
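Slow drifts of this kind are easier to catch with a trend than with a threshold. The sketch below fits a least-squares line to evenly spaced telemetry samples and estimates how many more samples remain before a limit is reached. The metric (pump current in mA), the readings, and the limit are hypothetical; real telemetry is noisier and usually needs smoothing first.

```python
def slope_per_sample(values):
    """Least-squares slope of evenly spaced samples, in units per sample."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def samples_until_limit(values, limit):
    """Samples left before the trend reaches `limit`; None if the trend is not rising."""
    slope = slope_per_sample(values)
    if slope <= 0:
        return None
    if values[-1] >= limit:
        return 0.0
    return (limit - values[-1]) / slope


# Hypothetical weekly pump-current readings in mA and an assumed end-of-life limit.
pump_current_ma = [100, 102, 104, 106, 108]
print(slope_per_sample(pump_current_ma), samples_until_limit(pump_current_ma, 120))
```

The same two functions could be applied to wavelength offset or corrected-error counts, as long as the samples are evenly spaced.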

When the Network Thinks the Link Is Down: Control-Plane, Protocol, and Software Causes of Failure in High-Speed Optical Networks

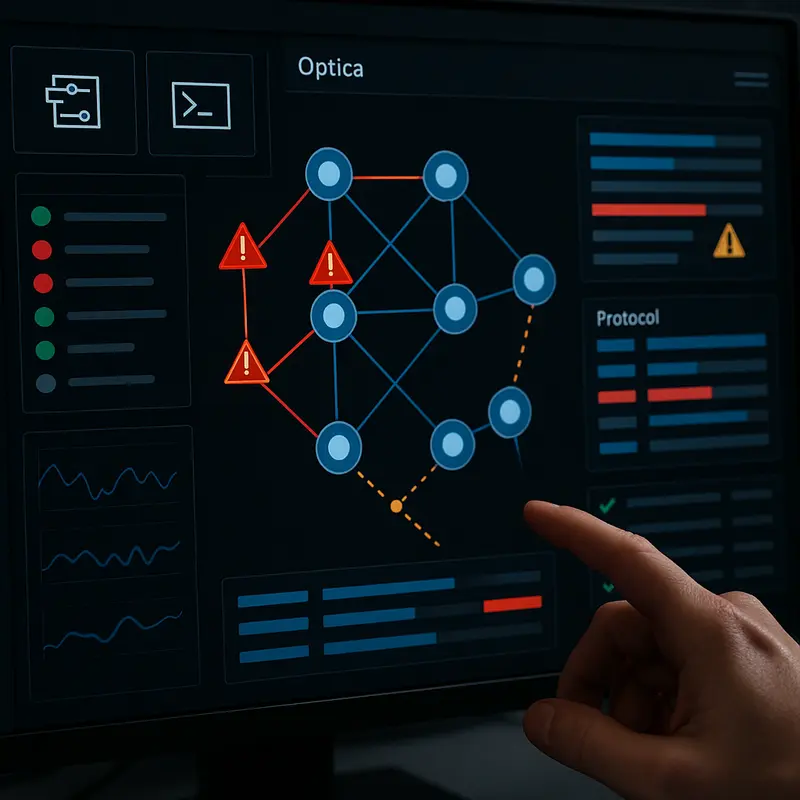

Physical faults often get the blame, but many high-speed optical link failures begin in software or in the control plane. Light may still be present on the fiber, amplifiers may still be healthy, and shelves may still have power. Yet capacity disappears because the network can no longer describe, signal, or restore the path correctly. In coherent DWDM systems, that gap between optical health and service availability is one of the most dangerous failure modes.

A common trigger is misconfiguration. Optical networks depend on precise channel plans, power targets, and route definitions across transponders, muxponders, and switching nodes. A wrong center frequency, an incorrect attenuation setting, or a route pushed into the wrong passband can silently black-hole traffic. These errors often appear after maintenance windows, software upgrades, or partial migrations to denser line rates. During transitions such as 100G to 400G migration, configuration discipline matters even more, because tighter modulation margins make logical mistakes look like physical instability.
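Some of these mistakes can be caught before a change is pushed. The sketch below checks a provisioned channel against the ITU-T G.694.1 flexible grid, where central frequencies sit at 193.1 THz plus multiples of 6.25 GHz and slot widths are multiples of 12.5 GHz, and then checks that the slot fits inside a passband. The function name, the passband limits, and the example values are assumptions; frequencies are kept as integer MHz to avoid floating-point rounding.

```python
ANCHOR_MHZ = 193_100_000   # 193.1 THz anchor frequency of the grid
GRANULARITY_MHZ = 6_250    # 6.25 GHz central-frequency granularity
SLOT_STEP_MHZ = 12_500     # 12.5 GHz slot-width granularity


def check_channel(center_mhz, slot_width_mhz, passband_low_mhz, passband_high_mhz):
    """Return a list of problems found in a channel definition (empty if none)."""
    problems = []
    if (center_mhz - ANCHOR_MHZ) % GRANULARITY_MHZ != 0:
        problems.append("center frequency is off the 6.25 GHz grid")
    if slot_width_mhz <= 0 or slot_width_mhz % SLOT_STEP_MHZ != 0:
        problems.append("slot width is not a positive multiple of 12.5 GHz")
    low = center_mhz - slot_width_mhz // 2
    high = center_mhz + slot_width_mhz // 2
    if low < passband_low_mhz or high > passband_high_mhz:
        problems.append("slot extends outside the provisioned passband")
    return problems


# Hypothetical 75 GHz channel centered on 193.1 THz inside a matching passband.
print(check_channel(193_100_000, 75_000, 193_062_500, 193_137_500))
# Same channel mistyped 2 GHz high: off-grid and spilling past the passband edge.
print(check_channel(193_102_000, 75_000, 193_062_500, 193_137_500))
```

A check like this does not replace vendor validation, but it catches the typing and migration errors that otherwise show up later as unexplained BER.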

Protocol faults create a different class of failure. Signaling and path computation layers must agree on topology, labels, shared risk groups, and restoration priorities. When they do not, valid paths may be withdrawn or never established. State desynchronization can leave one node believing a circuit is active while another has already torn it down. Floods of routing updates can also overwhelm control resources, delaying convergence just when the network needs to reroute around a fault. In that moment, the optical layer may be intact, but the transport service still collapses.

Protection systems are especially vulnerable to software mistakes because they only prove their value during abnormal conditions. A 1+1 or shared-mesh scheme can fail if policies are inconsistent, timers are mismatched, or both primary and backup paths cross the same hidden failure domain. That is how a single cut turns into a multi-link outage. The issue is not the absence of redundancy, but the false belief that redundancy was diverse.
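Hidden shared failure domains can be checked mechanically if each link is tagged with its shared risk groups. The sketch below intersects the risk groups of a primary and a backup path; the link names, group names, and the `srlg_of` mapping are all hypothetical, and a real inventory would come from the planning system rather than a literal dictionary.

```python
def shared_risks(primary_links, backup_links, srlg_of):
    """Return the set of shared risk groups common to both paths.

    Links missing from `srlg_of` are treated as having no known risk groups,
    which is optimistic; incomplete inventory is itself a risk.
    """
    primary = set().union(*(srlg_of.get(link, set()) for link in primary_links))
    backup = set().union(*(srlg_of.get(link, set()) for link in backup_links))
    return primary & backup


# Hypothetical inventory: two "diverse" routes that leave site A in the same duct.
srlg_of = {
    "A-B": {"duct-17", "bridge-2"},
    "B-C": {"duct-30"},
    "A-D": {"duct-17"},
    "D-C": {"duct-41"},
}

print(shared_risks(["A-B", "B-C"], ["A-D", "D-C"], srlg_of))
```

An empty result is necessary for real diversity but not sufficient, since the check is only as good as the risk-group data behind it.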

Timing and synchronization problems add another subtle cause. Some services depend on stable phase and frequency even when optical power remains normal. If timing distribution fails, fronthaul and latency-sensitive transport can drop despite an apparently healthy span. Operators then face misleading symptoms: no obvious loss of light, but alarms spreading upward through client layers.

Monitoring gaps make all of this worse. If telemetry thresholds are wrong, or if controllers expose poor fault context, teams chase BER and LOS alarms while missing the real source: bad automation, stale topology, or failed protection logic. In high-speed optical networks, link failure is not always a broken fiber. Sometimes it is a network that can no longer correctly understand its own link.

When the World Breaks the Fiber: Environmental, Human, and Geopolitical Causes of Link Failures in High-Speed Optical Networks

Many optical failures begin far from the transponder or amplifier. They start in streets, ducts, poles, landing stations, and coastal waters. In terrestrial networks, construction remains the dominant threat. A backhoe strike, mislocated trench, or directional drilling error can sever fiber instantly and trigger loss of signal across every wavelength on the span. Urban and suburban routes often see roughly 0.2 to 0.5 cuts per 1,000 sheath-km per year, though the true rate depends on right-of-way control and excavation discipline. Even when the cable survives, sheath damage can admit moisture and create delayed faults that appear only after thermal cycling or rain.
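The cut rates quoted above translate directly into route-level exposure. The sketch below scales the rate by route length and, assuming cuts arrive independently (a Poisson assumption that the article does not state), estimates the chance of at least one cut in a given period. The 800 km route length is a hypothetical example.

```python
import math


def expected_cuts_per_year(route_km, cuts_per_1000_km_year):
    """Expected number of cuts per year for a route of the given sheath length."""
    return route_km / 1000.0 * cuts_per_1000_km_year


def prob_at_least_one_cut(route_km, cuts_per_1000_km_year, years=1.0):
    """Probability of one or more cuts, assuming independent (Poisson) arrivals."""
    expected = expected_cuts_per_year(route_km, cuts_per_1000_km_year) * years
    return 1.0 - math.exp(-expected)


# Hypothetical 800 km route evaluated at both ends of the quoted range.
for rate in (0.2, 0.5):
    print(rate,
          round(expected_cuts_per_year(800, rate), 2),
          round(prob_at_least_one_cut(800, rate), 3))
```

For this example route, the quoted range works out to roughly a 15% to 33% chance of at least one cut per year, which is the figure a protection design has to be sized against.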

Environmental stress is often less dramatic but just as dangerous. Tight bends in patch panels, trays, or closures create macrobending loss, and pinched or compressed cable creates microbending loss. Both quietly erode margin until a hot afternoon or a change in channel loading pushes OSNR below recovery limits. Compromised splice enclosures can let in water, increasing attenuation intermittently and making failures hard to reproduce. Aerial fiber adds another layer of exposure. Wind-driven galloping, vibration, and mechanical strain can trigger brief PMD spikes or unstable connector contact. In harsh utility corridors, cable design itself becomes part of failure prevention, especially where electrical stress and weathering accelerate degradation, as discussed in this guide to why ADSS cables fail from electrical tracking.

Human handling errors also sit at the center of many outages. Poorly cleaned connectors, improper bend management, weak splices, or mismatched connector endfaces can turn a healthy link into a marginal one. These are not always immediate hard failures. More often, they create a chain of small penalties: extra insertion loss, worse reflectance, more noise sensitivity, and then repeated flapping when temperature or load changes. Facility conditions amplify the problem. Power loss, failing batteries, generator handoff mistakes, or HVAC shutdowns can drop multiple shelves at once. In those cases, the network may look like it suffered a protocol event, but the root cause is physical and local.

At larger scale, geography and politics shape outage patterns. Submarine systems face about 150 to 200 faults globally each year, with most historically linked to fishing gear and ship anchors in shallow waters. Earthquakes, seabed landslides, and storm-driven seabed movement add another class of disruption. Repair times are long because weather, permits, and the availability of specialized ships control access. On international routes, cable landing stations and border crossings become strategic weak points. Regional instability, vandalism, and delayed cross-border approvals can turn a single cut into a prolonged capacity crisis. Even before deliberate attacks are considered, many outages have already been set in motion by the simple fact that optical infrastructure lives in the physical world.

When Failure Is Deliberate: Security Threats and Intentional Attacks Behind Optical Link Outages

Not every optical outage begins with age, weather, or simple error. Some failures are caused deliberately, and high-speed optical networks are increasingly exposed because they concentrate enormous capacity into a small number of fibers, landing sites, colocation rooms, and control systems. A single act of tampering can therefore create effects far beyond the physical point of attack. In terrestrial routes, exposed handholes, cabinets, and roadside ducts are common weak points. In submarine systems, cable landing stations and shallow-water approaches are especially sensitive because they are accessible, geographically constrained, and difficult to protect continuously.

Physical sabotage is the most direct form of attack. An intentional cut produces the same immediate alarms as an accident, including loss of signal and loss of frame, but the surrounding evidence often differs. Multiple fibers may be severed at once, access points may show signs of entry, or cuts may occur at locations that maximize disruption rather than convenience. Attackers may also target connectors, patch panels, or power feeds instead of the cable itself. That matters because optical links can fail without a visible break. A contaminated or disturbed connector can add loss and reflection, pushing a coherent channel past its FEC margin. A malicious bend or patching change can create intermittent degradation that looks like an ordinary maintenance issue. In hardened outdoor environments, physical protection of interfaces becomes part of network resilience, especially where ruggedized fiber optic connector design affects exposure to tamper, moisture, and handling damage.

More subtle attacks exploit the fact that modern optical systems depend on software as much as fiber. A compromised management platform can push incorrect power setpoints, alter wavelength assignments, or steer channels into the wrong passbands. The result may be black-holed traffic even while optical light remains present. Protection systems can also be weaponized. If restoration policies are changed, traffic may be forced onto paths sharing the same risk domain, making a second fault far more damaging. These failures are dangerous because they resemble misconfiguration at first glance, delaying isolation and recovery.
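Because malicious changes and honest mistakes look alike, one practical safeguard is to compare live setpoints against an approved baseline. The sketch below does that for numeric parameters; the key layout (node, channel, parameter), the values, and the tolerance are hypothetical, and a real deployment would read both sides from the management system and its change records.

```python
def setpoint_drift(baseline, current, tolerance):
    """Compare live numeric setpoints with an approved baseline.

    Returns (key, reason) pairs where reason is "missing", "unexpected",
    or "changed" (difference larger than `tolerance`).
    """
    findings = []
    for key in sorted(set(baseline) | set(current)):
        if key not in current:
            findings.append((key, "missing"))
        elif key not in baseline:
            findings.append((key, "unexpected"))
        elif abs(current[key] - baseline[key]) > tolerance:
            findings.append((key, "changed"))
    return findings


# Hypothetical approved baseline and live readings.
baseline = {
    ("node-a", "ch-32", "target_power_dbm"): -2.0,
    ("node-a", "ch-32", "attenuation_db"): 4.0,
}
current = {
    ("node-a", "ch-32", "target_power_dbm"): 1.5,
    ("node-a", "ch-32", "attenuation_db"): 4.1,
    ("node-b", "ch-32", "target_power_dbm"): 0.0,
}

for key, reason in setpoint_drift(baseline, current, tolerance=0.5):
    print(key, reason)
```

A finding does not say whether the change was an attack or an error, only that it was not approved, which is usually enough to shorten isolation.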

Optical-layer abuse adds another dimension. High-power signal injection can overload receivers, saturate front ends, or trigger safety shutdowns. Narrowband interference placed near a channel center can raise BER without causing a total loss of light, creating a soft failure that flaps under load. In coherent networks using tighter margins at 400G, 800G, and above, even modest targeted impairment can be enough to collapse post-FEC performance. The operational lesson is clear: when investigating what causes link failures in high-speed optical networks, security cannot be treated as separate from engineering. Intentional actions now exploit the same tight budgets, automation dependencies, and concentrated infrastructure that make modern optical transport efficient.

Final thoughts

High-speed optical networks deliver exceptional connectivity but face vulnerabilities across hardware, software, environmental, and security domains. Understanding these causes, from physical-layer impairments and active component degradation to human and geopolitical disruptions, is vital for building resilient infrastructure in data centers and AI networks. By diagnosing failure symptoms early and adopting proactive measures, IT stakeholders can protect operations and keep data flowing for critical services.

Need expert guidance on high-speed optical network solutions? Talk to ABPTEL about advanced infrastructure tools for data centers and AI systems.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides cutting-edge solutions for high-speed optical systems, including pluggable transceivers, MTP/MPO cabling, AOC/DAC cables, PoE switches, and FTTA tools tailored for data centers, telecom networks, AI infrastructure, and scalable connectivity projects. With a commitment to high performance and resilience, ABPTEL empowers organizations with solutions to mitigate link failures and ensure seamless operations.