Optical architecture underpins the leaf-spine networks at the core of modern data centers, enabling scalable connectivity and high-speed performance for AI workloads and cloud services. Selecting the right optical platform means balancing technical and economic factors such as link distance, optical standards, cabling strategy, module power budgets, and reliability requirements. This article works through these considerations in structured chapters, beginning with evaluation criteria and progressing through physical layer standards, the trade-offs between pluggables and emerging optics designs, cabling architecture, and operational impacts. Each chapter serves the same goal: helping decision-makers select the best optical strategy for their own environment.

How to Judge the Best Optical Architecture for Leaf-Spine Data Center Networks

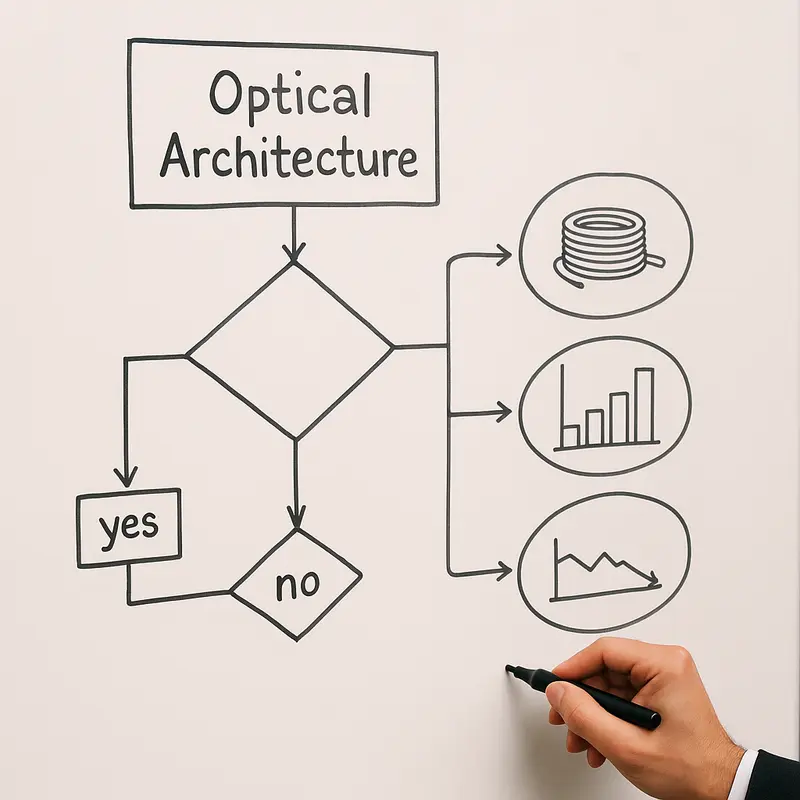

Choosing the best optical architecture for a leaf-spine network starts with a simple idea: best is never defined by speed alone. A strong design must balance reach, density, power, latency, cabling complexity, resilience, and upgrade flexibility at the same time. If one link type lowers transceiver cost but forces a bulky fiber plant, or saves watts but narrows operating margin, it may not be the right answer at fabric scale.

The first evaluation criterion is distance under real cabling conditions, not just lab reach. Leaf-spine links often span from tens of meters to about 2 km. That range changes everything. Very short runs favor simpler, lower-power optics and sometimes even electrical interconnects. Medium distances reward architectures that can preserve signal quality through structured cabling. Longer in-building runs demand more forgiving optical budgets. This is why fiber type and connector strategy matter as much as the module itself. A practical framework starts by separating links by reach, then checking whether the cabling plant supports parallel fiber, duplex fiber, or both. For readers comparing media choices, this overview of single-mode vs multimode fiber provides useful background.

The second criterion is economics at the system level. Cost per module matters, but total cost of ownership matters more. Parallel single-mode designs can offer excellent cost per bit, yet they consume more fibers and demand tighter polarity and cleanliness control. Wavelength-multiplexed designs reduce fiber count and simplify long paths, but often increase module complexity, power draw, and purchase price. The right architecture therefore depends on whether the facility is fiber-rich or fiber-constrained, and whether future moves, adds, and changes will be frequent.
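To make the fiber-rich versus fiber-constrained question concrete, the sketch below counts fiber strands for a fabric in which every leaf connects to every spine. The per-link fiber counts follow from how the interfaces are built (DR4 uses four transmit and four receive fibers, duplex FR4 uses two). The fabric size, the function name, and the one-link-per-leaf-spine-pair default are illustrative assumptions, not figures from a real deployment.

```python
# Fibers consumed by one link (both directions), per optic type.
FIBERS_PER_LINK = {
    "DR4": 8,   # 4 transmit + 4 receive fibers, parallel single-mode
    "DR8": 16,  # 8 transmit + 8 receive fibers, parallel single-mode
    "FR4": 2,   # duplex LC, four wavelengths multiplexed per fiber
}


def fabric_fiber_count(leaves: int, spines: int, optic: str,
                       links_per_pair: int = 1) -> int:
    """Total fiber strands in use for a full-mesh leaf-spine fabric.

    Hypothetical helper: assumes every leaf connects to every spine
    with `links_per_pair` links of the same optic type.
    """
    links = leaves * spines * links_per_pair
    return links * FIBERS_PER_LINK[optic]


if __name__ == "__main__":
    # Example fabric size chosen only for illustration.
    for optic in ("DR4", "FR4"):
        print(optic, fabric_fiber_count(leaves=32, spines=8, optic=optic))
```

For this example fabric of 256 links, the parallel design uses four times as many strands as the duplex design, which is the gap that pathway space and patching labor have to absorb.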

A third filter is performance under operational load. In modern data centers, optics are judged by watts per port, thermal fit in dense switches, and latency added by signal processing. A design that looks elegant on paper can become unattractive if it pushes cooling limits or complicates airflow. This becomes even more important in AI-oriented fabrics, where link count is high and every watt scales across the room.

Finally, the best architecture must be evaluated for operational durability. Cleanability, field replacement, interoperability, telemetry, and vendor diversity all shape long-term success. The winning optical model is usually the one that tolerates real installation practices, provides enough margin for aging and patching, and still leaves a clean migration path to higher lane rates. That is the standard that should guide every technology comparison in the next chapter.

Choosing the Best Physical Layer for Leaf-Spine Networks: Why IM-DD on Single-Mode Fiber Usually Wins

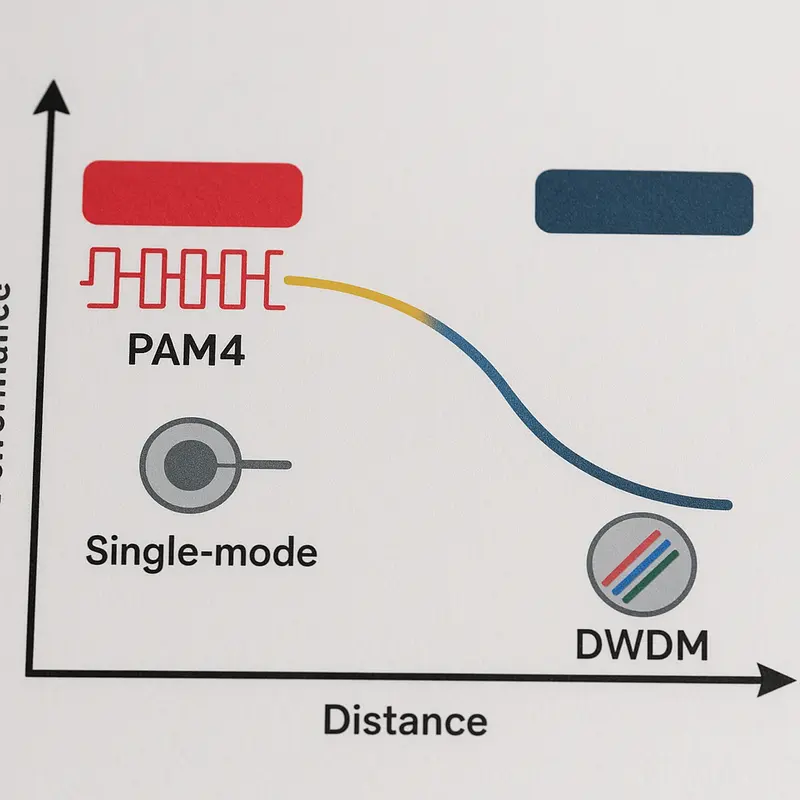

The physical layer decides whether a leaf-spine design remains elegant on paper or efficient in production. For most modern data centers, the strongest baseline is intensity modulation and direct detect, or IM-DD, carried over single-mode fiber. That combination aligns best with the distances, port speeds, and operating models common in leaf-spine fabrics. It also matches the current Ethernet roadmap, where 400G and 800G links are largely built around 100G-per-lane PAM4 signaling.

The practical reason is simple. Intra-data-center leaf-to-spine links usually fall between tens of meters and roughly 2 km. That is the sweet spot for IM-DD optics. They deliver lower power, lower latency, and simpler operations than coherent optics. Coherent technology is extremely valuable for longer data center interconnects, but inside a leaf-spine fabric it usually adds DSP overhead, cost, and complexity without solving a real problem. When links stay inside a hall, building, or closely connected campus, IM-DD is usually the better architectural fit.

Within that IM-DD choice, single-mode fiber has become the preferred medium because it balances reach, upgrade flexibility, and ecosystem maturity. Multimode still works well for short runs, especially where legacy cabling already exists, but its practical reach is shorter and its long-term migration path is less attractive at 400G, 800G, and beyond. By contrast, single-mode supports short parallel links and longer duplex WDM links on the same broader fiber strategy. If you need a refresher on the trade-offs, single-mode vs multimode fiber is a useful baseline comparison.

Standards reinforce this direction. Current Ethernet PMDs for data center optics center on 500 m and 2 km single-mode variants. Parallel designs such as DR4 and DR8 are optimized for lower-cost optics and high-density links up to 500 m, while FR4-class optics use wavelength division multiplexing to reduce fiber count and extend reach to 2 km over duplex LC. This creates a clean standards-based decision tree: use parallel single-mode optics where fiber is abundant and runs are controlled, and use duplex WDM single-mode optics where distance, patching, or fiber scarcity demands more margin.

Multimode remains a tactical option, not the strategic default. Coherent remains a specialized tool, not the leaf-spine norm. So when the question is not just what works, but what is best, the answer is usually a standards-aligned single-mode IM-DD architecture. It offers the strongest mix of scale, interoperability, and future readiness, while setting up the next design question: which pluggable implementation delivers that physical layer most efficiently?

Choosing Between Pluggables, LPO, and CPO in the Best Optical Architecture for Leaf-Spine Data Centers

The practical debate in modern leaf-spine design is no longer only about which fiber or which PMD to use. It is also about where the optical complexity should live and how much operational risk a network team is willing to absorb for gains in power, latency, and density. For most data centers, conventional pluggable optics still define the best balance. They fit the service model that operators already understand, support hot-swap replacement, and align well with the mixed DR and FR strategies that usually deliver the best overall architecture.

That matters because optics are now constrained as much by thermals and faceplate density as by reach. At 400G and especially 800G, the module is part of the switch design problem. Standard pluggables remain attractive because they isolate failures cleanly. If a link degrades, the operator replaces a module, cleans the connector, and restores service quickly. That field-replaceable model becomes even more valuable in large fabrics where mean time to repair affects application behavior as much as raw bandwidth. It is one reason pluggables remain the default choice even as alternatives mature.

Linear-drive pluggable optics (LPO) change that equation at the margin. By removing or minimizing DSP functions inside the module, LPO can cut several watts per transceiver and trim latency by tens to low hundreds of nanoseconds per end. In tightly tuned AI fabrics, those savings are meaningful. But they are not free. LPO shifts more responsibility to the host electrical channel and to disciplined cabling practice. PCB traces, connectors, reflections, and insertion loss all matter more. That makes LPO strongest in short, clean, highly standardized deployments, not in every general-purpose leaf-spine environment. Teams considering it should already be comfortable with strict optical hygiene and channel engineering, especially in networks built for low-latency AI interconnect.

Co-packaged optics (CPO) push the same logic further by moving optical engines close to the switch silicon. The attraction is clear: shorter electrical paths, better signal integrity at future 224G lanes, and a path toward 1.6T-class systems without impossible faceplate thermals. Yet the trade-off is fundamental. CPO improves system efficiency by reducing modularity. If optics are no longer easily field-replaceable, the operating model changes from simple sparing to chassis-level service planning. That is acceptable in some hyperscale environments, but it remains a serious barrier for broad deployment.

So, in the context of the best optical architecture for leaf-spine networks, the hierarchy is fairly clear. Pluggables are best for mainstream deployments today. LPO is a selective optimization when power and latency justify tighter engineering. CPO is a forward-looking answer to density and SerDes scaling, but not yet the default architecture for operators who value flexibility, mature tooling, and fast maintenance.

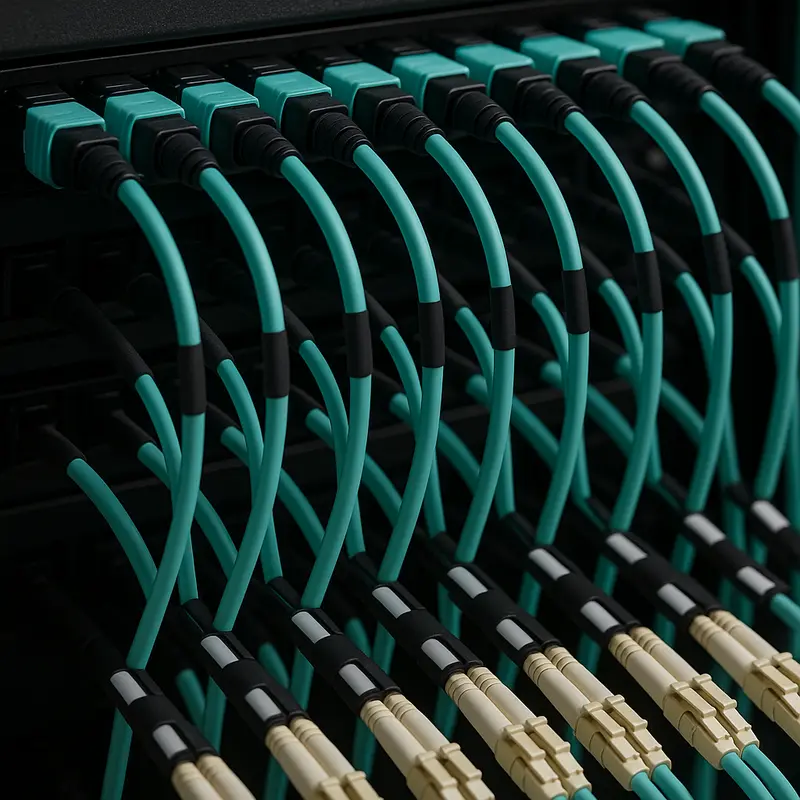

Designing the Physical Layer: Cabling and Loss Budgets That Determine the Best Leaf-Spine Optical Architecture

The best optical architecture for a leaf-spine network is not chosen by transceiver reach alone. It is decided just as much by the cabling plant, connector strategy, and the loss budget discipline behind every link. A design that looks optimal on paper can become fragile if patch fields are excessive, polarity is inconsistent, or connector loss quietly consumes the available margin. That is why structured design principles often decide whether parallel optics or duplex WDM is the better fit for a given hall.

For most modern fabrics, single-mode fiber is the right foundation because it supports current 400G and 800G links and offers a cleaner migration to higher port speeds. If you need a quick refresher on the trade-offs, see single-mode vs multimode fiber. Within that single-mode baseline, the real design choice is usually between parallel DR-class links and duplex FR-class links. DR links are attractive because the optics are simpler and often more efficient, but they consume more fibers and are less forgiving of poor structured cabling. FR links reduce fiber count and work well across larger facilities, yet they shift complexity into the module.

That trade-off becomes clear in the loss budget. A 400G or 800G DR link over parallel fiber operates with a tight channel insertion-loss budget, on the order of 3 dB for 400GBASE-DR4, so every MPO connection matters. Fiber attenuation over a few hundred meters is small, so the dominant issue is usually insertion loss from mated pairs, patch panels, trunks, and contamination. One dirty MPO interface can erase a surprising amount of margin. In practice, that means DR architectures are strongest when the plant is intentionally simple: limited patching, low-loss components, strict polarity control, and disciplined inspection and cleaning.
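The budget arithmetic described above can be sketched as a simple subtraction: start from the allowed channel insertion loss, then deduct fiber attenuation and the loss of each mated connector pair. All default values below are assumptions for illustration (a 3.0 dB budget, 0.4 dB/km cabled single-mode attenuation, 0.35 dB per mated MPO pair). Real designs should take these numbers from the applicable standard and the component datasheets.

```python
def link_margin_db(
    length_m: float,
    mated_pairs: int,
    budget_db: float = 3.0,          # assumed channel insertion-loss budget
    fiber_db_per_km: float = 0.4,    # assumed cabled single-mode attenuation
    loss_per_pair_db: float = 0.35,  # assumed loss per mated MPO pair
    extra_loss_db: float = 0.0,      # e.g. a contaminated or damaged interface
) -> float:
    """Remaining margin in dB after fiber and connector losses.

    Hypothetical helper: a negative result means the channel exceeds
    the assumed budget.
    """
    fiber_loss = fiber_db_per_km * length_m / 1000.0
    connector_loss = loss_per_pair_db * mated_pairs
    return budget_db - fiber_loss - connector_loss - extra_loss_db


if __name__ == "__main__":
    # Same 300 m run, with a simple plant versus a heavily patched one.
    print(round(link_margin_db(300, mated_pairs=2), 2))
    print(round(link_margin_db(300, mated_pairs=6), 2))
    # One dirty interface adding an assumed 1 dB on the patched plant.
    print(round(link_margin_db(300, mated_pairs=6, extra_loss_db=1.0), 2))
```

Under these assumptions the fiber itself accounts for only about 0.12 dB of the 300 m run, while each additional mated pair costs roughly three times that, which is why patching discipline dominates the outcome.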

FR designs fit a different operational reality. When links span long halls, multiple rows, or building-scale pathways, duplex LC cabling is often easier to manage and more tolerant of intermediate cross-connects. The lower fiber count reduces trunk congestion and simplifies future rearrangements. This matters in leaf-spine networks because the physical layer must support repeated expansions without turning every capacity upgrade into a cabling rebuild.

Structured design therefore has to start from topology intent. If most leaf-spine runs stay under 500 meters and fiber is plentiful, parallel single-mode cabling usually produces the best balance of cost and efficiency. If distances stretch toward 2 kilometers, or if patching density and fiber scarcity are real constraints, duplex designs become the safer architectural choice. The winning optical architecture is not simply the fastest interface. It is the one whose cabling model preserves margin, scales cleanly, and keeps the network operable as port speeds climb.
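The rule of thumb in this section can be written as a small decision function. This is a minimal sketch that encodes only the thresholds stated above (runs under 500 meters with plentiful fiber, versus runs toward 2 kilometers or constrained plants). The function name, parameter names, and the treatment of runs beyond 2 km are assumptions made for illustration.

```python
def recommend_optic_class(max_run_m: float, fiber_plentiful: bool,
                          heavy_patching: bool = False) -> str:
    """Suggest a cabling model for leaf-spine links (hypothetical helper).

    Encodes the article's rule of thumb only; it does not replace a
    per-link loss budget check.
    """
    if max_run_m > 2000:
        # Beyond the intra-data-center range discussed in this article.
        return "out of scope: review longer-reach options"
    if max_run_m <= 500 and fiber_plentiful and not heavy_patching:
        return "parallel single-mode (DR-class)"
    # Longer runs, dense patching, or scarce fiber favor duplex designs.
    return "duplex WDM single-mode (FR-class)"


if __name__ == "__main__":
    print(recommend_optic_class(150, fiber_plentiful=True))
    print(recommend_optic_class(150, fiber_plentiful=False))
    print(recommend_optic_class(1200, fiber_plentiful=True))
```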

The Real Cost of “Best”: Economic, Operational, and Supply-Chain Forces Shaping Leaf-Spine Optical Architecture

If cabling design determines whether a leaf-spine fabric can work cleanly, economics and operations determine whether it remains the best optical architecture once the network is deployed at scale. That is why the practical winner in most data centers is not simply the lowest-cost transceiver or the longest-reach option. It is the design that balances module cost, fiber consumption, power draw, sparing, failure recovery, and upgrade flexibility over several switch generations.

For most modern deployments, that balance still favors single-mode, IM-DD pluggable optics. Parallel DR optics usually win on raw optics economics. They often deliver the lowest cost per transported bit and attractive power efficiency, especially at 400G and 800G. But that advantage is only real when fiber pathways are abundant and the team can manage MPO-based infrastructure well. In buildings where conduit space is limited, patching is dense, or future moves are frequent, FR-class duplex designs often produce a better total outcome even with higher module pricing. The lower fiber count reduces installation complexity, eases documentation, and limits the hidden labor that accumulates across large fabrics.
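One way to reason about this trade-off is to find the installed cost per fiber at which a duplex FR link becomes cheaper than a parallel DR link. The sketch below assumes a deliberately simple model: each link needs two modules plus a per-fiber installed cost (cable, patching, labor), with 8 fibers for DR4 and 2 for FR4. The prices in the example are placeholder units, not market data, and the function names are hypothetical.

```python
def link_cost(module_price: float, fibers: int, cost_per_fiber: float) -> float:
    """Cost of one link: two modules plus installed fiber (simplified model)."""
    return 2 * module_price + fibers * cost_per_fiber


def breakeven_fiber_cost(dr_module: float, fr_module: float,
                         dr_fibers: int = 8, fr_fibers: int = 2) -> float:
    """Installed cost per fiber above which the FR link is cheaper.

    Derived by setting link_cost(DR) equal to link_cost(FR) and
    solving for the per-fiber cost.
    """
    return 2 * (fr_module - dr_module) / (dr_fibers - fr_fibers)


if __name__ == "__main__":
    # Placeholder prices in arbitrary units, for illustration only.
    dr_price, fr_price = 100.0, 130.0
    threshold = breakeven_fiber_cost(dr_price, fr_price)
    print("break-even cost per fiber:", threshold)
    print("DR link at threshold:", link_cost(dr_price, 8, threshold))
    print("FR link at threshold:", link_cost(fr_price, 2, threshold))
```

If the real installed cost per fiber in a facility sits above the break-even value, the higher FR module price is recovered in the cabling plant. Below it, DR keeps its advantage. The model ignores power, sparing, and labor for moves and changes, which can shift the answer in either direction.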

Operations often decide the argument. Pluggable optics remain dominant because they fit established service models. Failed units are hot-swappable, sparing is straightforward, and telemetry is mature. Teams can monitor optical power, temperature, bias current, and FEC trends before faults become outages. By contrast, more aggressive approaches such as co-packaged optics may improve density and power efficiency, but they also complicate maintenance and shift failure domains from a replaceable module to a larger system element. For most operators, that trade-off is still too severe.
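As an illustration of how that telemetry can be used before a fault becomes an outage, the sketch below compares the latest reading from a module against an earlier baseline and returns warning flags. The record fields, threshold values, and function name are all hypothetical. Actual thresholds should come from module datasheets and the operator's own history.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class OpticSample:
    """One telemetry reading from a pluggable module (hypothetical schema)."""
    rx_power_dbm: float
    temperature_c: float
    bias_ma: float
    pre_fec_ber: float


def degradation_flags(history: List[OpticSample],
                      rx_drop_db: float = 1.5,     # assumed threshold
                      bias_rise_frac: float = 0.2, # assumed threshold
                      temp_limit_c: float = 70.0,  # assumed threshold
                      ber_limit: float = 1e-5      # assumed threshold
                      ) -> List[str]:
    """Compare the latest sample with the first (baseline) sample."""
    if len(history) < 2:
        return []
    baseline, latest = history[0], history[-1]
    flags = []
    if baseline.rx_power_dbm - latest.rx_power_dbm >= rx_drop_db:
        flags.append("rx_power_drop")   # often a dirty or degraded connector
    if latest.bias_ma > baseline.bias_ma * (1 + bias_rise_frac):
        flags.append("bias_rise")       # laser working harder than before
    if latest.temperature_c > temp_limit_c:
        flags.append("temperature")
    if latest.pre_fec_ber > ber_limit:
        flags.append("fec_trend")       # FEC is correcting more errors
    return flags


if __name__ == "__main__":
    history = [
        OpticSample(-2.0, 45.0, 40.0, 1e-8),
        OpticSample(-4.0, 46.0, 41.0, 5e-5),
    ]
    print(degradation_flags(history))
```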

Power has also become a first-order architectural variable. At 800G, a few watts per module multiplied across a large spine layer turns into meaningful cooling and rack-level design pressure. This is why linear-drive pluggables attract interest in AI clusters and latency-sensitive fabrics. They can reduce power and shave latency, but they also demand cleaner electrical channels and tighter operational discipline. In other words, they lower energy cost by raising engineering rigor. This is closely tied to broader design choices around low-latency AI interconnect, where optical efficiency and system architecture increasingly converge.
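The scaling effect is simple multiplication, but it is worth seeing with numbers. The sketch below estimates total optics power for a spine layer and the saving from a per-module reduction. The switch count, port count, and wattages are placeholders chosen for illustration and do not describe any specific product.

```python
def optics_power_kw(modules: int, watts_per_module: float) -> float:
    """Total optics power in kilowatts for a given module count."""
    return modules * watts_per_module / 1000.0


def power_saving_kw(modules: int, watts_saved_per_module: float) -> float:
    """Power saved in kilowatts when each module draws less."""
    return optics_power_kw(modules, watts_saved_per_module)


if __name__ == "__main__":
    # Placeholder spine layer: 64 switches with 64 optical ports each.
    modules = 64 * 64
    print("baseline kW:", optics_power_kw(modules, watts_per_module=15.0))
    print("saving kW at 3 W less per module:", power_saving_kw(modules, 3.0))
```

The figure covers the modules only. Every watt removed at the faceplate also reduces the cooling load, so the facility-level effect is larger than the module arithmetic alone suggests.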

Geopolitical and supply-chain realities now matter almost as much as technical merit. The best architecture is one that can be dual-sourced, validated across vendors, and procured consistently despite export controls, component shortages, or regional restrictions. Standardized DR4, FR4, DR8, and 2xFR4 ecosystems are stronger here than niche designs. For that reason, the most resilient leaf-spine strategy is usually not the most exotic one. It is the architecture that combines strong standards alignment, broad supplier depth, predictable operations, and a credible path to 1.6T without forcing a wholesale redesign.

Final thoughts

Choosing the best optical architecture for leaf-spine data center networks requires careful evaluation of technical needs, cost implications, and scalability constraints. IM-DD single-mode optics such as 400G DR4 and 800G DR8 suit runs under roughly 500 meters where fiber is plentiful, while FR4-class WDM serves links toward 2 km and fiber-constrained plants efficiently. LPO and CPO can improve power and density, but both trade away operational simplicity, so they belong where the engineering discipline and service model can support them. Structured cabling and rigorous inspection and cleaning preserve loss margin while enabling future upgrades. Decisions grounded in measured distances, loss budgets, power, and total cost are the ones most likely to hold up across several switch generations.

Explore ABPTEL’s advanced optical solutions for your leaf-spine network. Contact us today for custom design expertise and product guidance.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides cutting-edge optical transceivers, structured cabling (MTP/MPO), DAC and AOC technologies, and fiber tools designed for hyperscale data centers, AI/ML clusters, telecom, and FTTA installations. With a focus on scalability, reliability, and next-generation technology, ABPTEL enables seamless high-speed data transport for diverse projects, backed by expert guidance and consulting services.