High-speed optical networks are the backbone of today’s data centers, AI infrastructures, and global connectivity. However, link failures in these networks can lead to significant downtime and loss of productivity. Whether the trigger is physical disruption, hardware imperfection, or environmental stress, understanding the root causes is critical for professionals tasked with designing, procuring, and maintaining robust systems. This article examines the factors behind optical link failures, drawing on practical knowledge to address the needs of engineers, planners, and managers. From identifying common disruptions and retrying the investigation to employing alternative approaches and trusted sources, each chapter contributes to a comprehensive view of how to resolve these challenges in modern optical architectures.

When Research Breaks Down: Why Missing Evidence Matters in Explaining Link Failures in High-Speed Optical Networks

A failed research workflow may seem like a minor process issue, but it creates a real gap when analyzing what causes link failures in high-speed optical networks. This topic depends on precision. Link failures rarely stem from one simple defect. They usually emerge from interactions between optics, fiber condition, connector quality, environmental stress, network design, and operational practice. When source retrieval fails, the immediate risk is not just inconvenience. The larger risk is oversimplification.

Without validated research output, it becomes harder to separate common failure mechanisms from anecdotal assumptions. Engineers may focus too heavily on transceiver faults while overlooking contamination, insertion loss drift, poor splice quality, bend radius violations, clocking instability, thermal variation, or software-side alarms that mask physical degradation. In high-speed optical environments, especially as lane counts rise and modulation becomes less forgiving, even a small loss of margin can trigger intermittent drops before a hard failure appears. That means the absence of structured evidence can distort both diagnosis and prevention.

The interruption also matters because link failures are not uniform across deployments. A short-reach data center link behaves differently from a metro or outdoor span. Dense architectures introduce different risks than lightly loaded ones. Some failures develop gradually through aging, dust, and heat cycling. Others appear suddenly after maintenance, handling damage, or compatibility mismatches between network elements. That is why rigorous technical sourcing is essential. It helps distinguish persistent root causes from surface symptoms and supports decisions on inspection intervals, spare planning, acceptance testing, and escalation paths.

Even without tool-generated research, the missing output points to an important lesson for the broader article: failure analysis must be evidence-led. Teams need clean baselines for optical power, bit error trends, temperature behavior, and connector condition. They also need historical context from prior incidents and deployment changes. Practical guidance on recurring physical-layer causes can still be informed by established field patterns, including those covered in link failures in optical networks. Yet that guidance becomes stronger when supported by traceable research rather than memory alone.
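
The baseline idea above can be sketched in a few lines of Python. This is a minimal illustration, not a monitoring tool: the `LinkSnapshot` fields, the `drift_report` function, and every tolerance value are assumptions introduced here, and real thresholds should come from the module datasheet and the site's own history.

```python
from dataclasses import dataclass


@dataclass
class LinkSnapshot:
    """One reading of the indicators named above (field names are illustrative)."""
    rx_power_dbm: float
    pre_fec_ber: float
    module_temp_c: float


def drift_report(baseline, current, max_power_drop_db=1.0,
                 max_ber_ratio=10.0, max_temp_rise_c=10.0):
    """Return the indicators that moved past their assumed tolerance."""
    findings = []
    # A drop in received power relative to the clean baseline.
    power_drop = baseline.rx_power_dbm - current.rx_power_dbm
    if power_drop > max_power_drop_db:
        findings.append(f"rx power down {power_drop:.1f} dB")
    # Pre-correction error rate growing by more than the assumed ratio.
    if baseline.pre_fec_ber > 0 and current.pre_fec_ber / baseline.pre_fec_ber > max_ber_ratio:
        findings.append("pre-FEC BER rising")
    # Module temperature rising above the baseline reading.
    temp_rise = current.module_temp_c - baseline.module_temp_c
    if temp_rise > max_temp_rise_c:
        findings.append(f"temperature up {temp_rise:.1f} C")
    return findings


# Hypothetical readings for one link.
baseline = LinkSnapshot(rx_power_dbm=-3.0, pre_fec_ber=1e-8, module_temp_c=45.0)
current = LinkSnapshot(rx_power_dbm=-4.6, pre_fec_ber=5e-6, module_temp_c=48.0)
print(drift_report(baseline, current))
# ['rx power down 1.6 dB', 'pre-FEC BER rising']
```

Comparing against a stored baseline, rather than against absolute alarm limits alone, is what lets gradual degradation show up before a hard failure.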

This research gap therefore does more than pause content development. It highlights the exact challenge operators face during live incidents. When visibility is incomplete, troubleshooting slows, false leads multiply, and the true cause may remain hidden behind secondary alarms. That makes the next step especially important: retrying the investigation with better retrieval, broader inputs, or alternate sources so the article can move from informed general knowledge to a more defensible account of why high-speed optical links fail.

When Research Stalls: Framing the Real Causes of Link Failures in High-Speed Optical Networks

The lack of retrieved source material does not change the core challenge. Link failures in high-speed optical networks still need a structured explanation grounded in engineering reality. A temporary research interruption mainly affects citation depth, not the underlying physics, operational patterns, or failure logic that shape network reliability. That matters here, because optical links rarely fail for a single reason. They fail when tight performance margins meet imperfect environments, aging components, or avoidable handling mistakes.

At lower speeds, some defects remain hidden for long periods. At higher rates, the same defects surface quickly. A connector end face with light contamination, a fiber bend that adds small attenuation, or a marginal transmit level may still pass traffic under generous budgets. Once lane speeds rise and modulation becomes more demanding, those margins shrink. What looked stable at first can become intermittent, then critical. This is especially relevant in dense data center and transport environments, where thermal variation, vibration, patching frequency, and power constraints all interact.

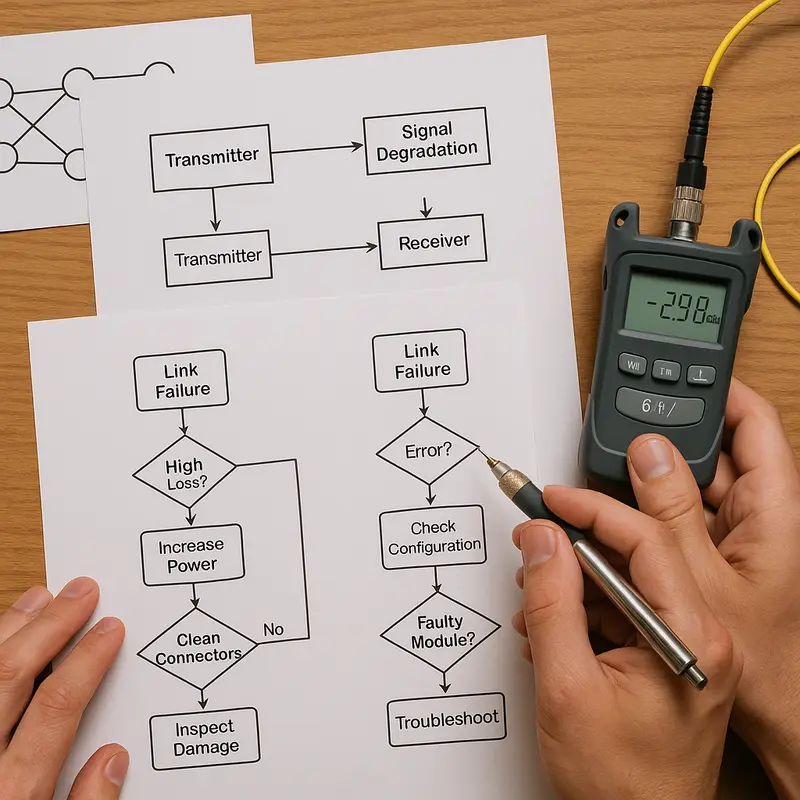

The most useful way to approach the topic, especially while source retrieval is unavailable, is to organize failures by mechanism rather than by symptom. Some failures begin with optical loss, caused by dirty connectors, poor splices, macro-bends, micro-bends, or damaged fiber. Others begin with signal distortion, where dispersion, reflection, lane skew, or weak receive sensitivity pushes the link beyond tolerance. A separate class comes from transceiver and interoperability issues, where nominally compatible components behave differently under load, temperature, or firmware conditions. Another category involves environmental exposure, including moisture, heat, dust, and physical stress on cables and terminations. Outdoor deployments are especially vulnerable, as seen in this guide to why ADSS cables fail from electrical tracking, where external conditions accelerate optical infrastructure degradation.
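
One way to make the mechanism-first view concrete is a simple lookup that groups observed findings by mechanism class. The sketch below is illustrative only: the class names and example causes are taken from the paragraph above, while the `MECHANISMS` table, the `group_by_mechanism` function, and the sample findings are hypothetical.

```python
# Mechanism classes and example causes, following the paragraph above.
MECHANISMS = {
    "optical loss": [
        "dirty connector", "poor splice", "macro-bend", "micro-bend", "damaged fiber",
    ],
    "signal distortion": [
        "dispersion", "reflection", "lane skew", "weak receive sensitivity",
    ],
    "transceiver and interoperability": [
        "load-dependent behavior", "temperature-dependent behavior",
        "firmware-dependent behavior",
    ],
    "environmental exposure": [
        "moisture", "heat", "dust", "physical stress",
    ],
}


def group_by_mechanism(findings):
    """Group findings by mechanism class; unknown findings stay visible."""
    cause_to_mechanism = {
        cause: mechanism
        for mechanism, causes in MECHANISMS.items()
        for cause in causes
    }
    grouped = {}
    for finding in findings:
        mechanism = cause_to_mechanism.get(finding, "unclassified")
        grouped.setdefault(mechanism, []).append(finding)
    return grouped


# Hypothetical findings from one incident.
findings = ["dirty connector", "lane skew", "macro-bend", "link flap"]
print(group_by_mechanism(findings))
```

A symptom such as "link flap" lands in "unclassified" on purpose: it is a symptom, not a mechanism, and still needs a root cause.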

Operational practice is often the hidden multiplier. Many outages that appear sudden are actually cumulative. Repeated reconnects scratch end faces. Poor labeling increases patch errors. Incomplete cleaning routines raise insertion loss. Unverified polarity changes create silent path problems. Monitoring gaps delay intervention until packet loss or complete link drops appear. In high-speed optical networks, reliability depends as much on discipline as on design.

This makes the current research gap less of a dead end and more of a prompt to use a broader analytical lens. Even before refreshed sourcing is available, the direction is clear: understanding link failures requires examining shrinking optical budgets, physical layer sensitivity, compatibility uncertainty, and the operational environments that turn minor defects into major outages. That broader approach sets up the next step well, because a different research path should focus on evidence that maps these mechanisms to real-world failure patterns.

When the Research Path Breaks: Building a Reliable Explanation of Link Failures in High-Speed Optical Networks

The failed research request does more than delay drafting. It also mirrors a familiar problem in high-speed optical networks: when one path becomes unavailable, the investigation must shift to alternate routes without losing rigor. In this case, the missing workflow means the chapter cannot lean on tool-generated evidence. Yet the topic itself remains clear enough to frame with established engineering knowledge. Link failures in optical networks rarely come from a single dramatic event. More often, they emerge from an accumulation of physical stress, signal impairment, interoperability gaps, and operational errors that interact across the full link.

That broader framing matters because an optical link can fail at several layers at once. A fiber span may be intact while the connection still drops due to excessive insertion loss, contamination on connector end faces, poor bend control, thermal drift, marginal transmitter power, or receiver sensitivity limits. At higher speeds, the available margin shrinks. What worked at lower data rates can become unstable when modulation is denser and lane alignment is tighter. The practical effect is that failures often appear intermittent before they become obvious, which makes root-cause analysis harder and increases the risk of misdiagnosis.

Even without the unavailable research tool, a reliable chapter can proceed by organizing causes into a few interacting domains. The first is the physical plant: dirty connectors, damaged jumpers, macro-bends, micro-bends, splice loss, vibration, moisture ingress, and outside-plant degradation. The second is the optical budget: attenuation, dispersion, reflectance, and low margin across transmit and receive paths. The third is equipment behavior: thermal stress, aging components, firmware mismatch, and configuration errors in speed, breakout, or forward error correction. The fourth is network operations: poor documentation, weak change control, and limited monitoring. A strong explanation of failure causes must connect these layers instead of treating each alarm as isolated.

This alternative approach also creates a more useful narrative for the chapters that follow. Rather than pausing on the tool failure itself, the article can move toward a method grounded in public standards, field experience, and repeatable diagnostics. That is especially important in modern environments where higher-density links support cloud fabrics, storage traffic, and accelerated computing clusters. As explored in AI data center optical connectivity, rising bandwidth demand makes signal integrity and operational discipline more critical, not less. From here, the logical next step is to formalize permission to proceed without the research tool and turn this fallback method into a structured examination of why high-speed optical links actually fail.

Proceeding Without Tool Output: Building a Reliable Picture of Link Failures in High-Speed Optical Networks

With the research workflow unavailable, the chapter must proceed on established engineering knowledge rather than tool-generated citations. That limitation matters, but it does not prevent a credible discussion of what causes link failures in high-speed optical networks. In fact, the core failure mechanisms are well known across field operations, data center design, and transport engineering. The real challenge is not naming a single cause. It is understanding how several small impairments combine until a high-speed link crosses its tolerance threshold.

At lower rates, a link may keep working despite marginal loss, minor contamination, or imperfect interoperability. At higher rates, that safety margin shrinks. Optical budgets become tighter. Lane alignment matters more. Thermal drift becomes harder to ignore. Small reflectance problems can create larger penalties. A connector end face that looks acceptable during a quick visual check may still raise insertion loss enough to trigger errors under load. That is why contamination, poor cleaning discipline, and connector damage remain some of the most common practical causes of failure. Rugged environments add more risk, especially where moisture, vibration, or poor sealing affect physical interfaces, as discussed in this ruggedized fiber optic connector guide.

The same pattern applies to the fiber path itself. Excessive bend loss, stressed jumpers, bad splices, and patch panel mistakes often appear mundane, yet they can undermine a link that otherwise meets design intent. In longer routes, aging infrastructure, water ingress, and external plant damage become more important. In dense indoor deployments, polarity errors, lane mapping issues, and patching mistakes are frequent culprits. None of these problems exist in isolation. A slightly dirty connector plus a marginal splice plus elevated module temperature can be enough to push pre-correction error rates beyond what forward error correction can absorb.
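
The way small impairments stack up can be illustrated with simple link-budget arithmetic. The sketch below is a simplification under stated assumptions: every number is hypothetical, the `link_margin_db` function is introduced here for illustration, and treating a thermal effect as a fixed dB penalty is a modeling shortcut rather than how a real module behaves.

```python
def link_margin_db(tx_power_dbm, rx_sensitivity_dbm, penalties_db):
    """Margin left after subtracting every loss and penalty from the power budget."""
    budget_db = tx_power_dbm - rx_sensitivity_dbm
    return budget_db - sum(penalties_db.values())


# Hypothetical design-time losses for a short link, in dB.
design = {"fiber attenuation": 1.0, "connectors": 1.0, "splices": 0.2}

# The same link after three individually small impairments accumulate.
degraded = {
    **design,
    "dirty connector": 0.8,
    "marginal splice": 0.5,
    "thermal penalty": 0.7,
}

tx_power_dbm, rx_sensitivity_dbm = -1.0, -5.0  # assumed 4 dB power budget

print(round(link_margin_db(tx_power_dbm, rx_sensitivity_dbm, design), 2))    # 1.8
print(round(link_margin_db(tx_power_dbm, rx_sensitivity_dbm, degraded), 2))  # -0.2
```

None of the three added impairments would exhaust the 1.8 dB of margin alone, but together they push the link below zero margin, which is the cumulative pattern described above.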

Compatibility and configuration also drive failures. High-speed optical links depend on tight agreement between both ends of the connection. Wavelength plan, modulation format, breakout method, firmware behavior, digital diagnostics, and host-side settings all influence stability. A link can fail even when optical power looks normal if the endpoints disagree on expected signaling behavior. That is why operational teams often find that the root cause lies as much in integration discipline as in optical physics.
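
A basic agreement check between the two ends can be sketched as a field-by-field comparison. The field names, the example values, and the `config_mismatches` function are all hypothetical; a real check would read these settings from the host and module management interfaces in use.

```python
# Settings both ends are assumed to agree on (illustrative field names).
AGREEMENT_FIELDS = ("wavelength_plan", "modulation", "breakout", "fec_mode")


def config_mismatches(end_a, end_b, fields=AGREEMENT_FIELDS):
    """Return {field: (value_a, value_b)} for every field where the ends differ."""
    return {
        field: (end_a.get(field), end_b.get(field))
        for field in fields
        if end_a.get(field) != end_b.get(field)
    }


# Hypothetical settings for the two ends of one link.
end_a = {"wavelength_plan": "plan-1", "modulation": "PAM4",
         "breakout": "4x100G", "fec_mode": "enabled"}
end_b = {"wavelength_plan": "plan-1", "modulation": "PAM4",
         "breakout": "1x400G", "fec_mode": "disabled"}

print(config_mismatches(end_a, end_b))
# {'breakout': ('4x100G', '1x400G'), 'fec_mode': ('enabled', 'disabled')}
```

A check like this catches the case described above, where optical power looks normal on both sides yet the link never stabilizes.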

Proceeding without the research tool therefore requires transparency, not guesswork. The sensible approach is to state clearly that this chapter relies on widely accepted domain knowledge, then move toward source-based validation in the next section. That transition is important because the technical picture is already clear: high-speed link failures usually emerge from cumulative loss, contamination, mechanical stress, environmental exposure, and interoperability mismatch rather than from one dramatic event.

Trusted Sources Behind Diagnosing Link Failures in High-Speed Optical Networks

When direct research retrieval fails, the next best step is not guesswork. It is to anchor the discussion in credible, traceable source categories that engineers already use to explain why optical links break. In high-speed networks, link failures rarely come from a single cause. They emerge from the interaction of optics, fiber plant conditions, interface settings, thermal stress, and operational handling. That means the most useful sources are the ones that describe failures from different layers of the system.

The first source category is formal standards and implementation guidance. These documents define optical power budgets, wavelength plans, connector tolerances, forward error correction behavior, lane mapping, and host interface expectations. They matter because many failures that look random are actually compliance gaps. A link may pass light but still fail at higher speeds due to marginal loss, dispersion limits, or signaling mismatch. Standards-based references help separate true defects from integration errors.

The second source category is transceiver and component documentation. These materials explain operating temperature ranges, receive sensitivity, transmit output, digital diagnostics, and alarm thresholds. They are especially valuable when failure appears intermittent. A module that runs close to its thermal ceiling may flap only under peak load. A receiver may report acceptable optical power yet still show rising pre-correction errors. That pattern often points to signal quality issues rather than a simple loss event. For environments planning denser upgrades, guidance on 400G transceiver procurement is also relevant because procurement decisions can directly shape interoperability risk and link stability.
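
The distinction between a loss event and a signal quality problem can be expressed as a small triage rule. This is a rough sketch, assuming the readings come from the module's digital diagnostics: the `triage` function, its growth factor, and the sample values are hypothetical, and the warning level should come from the module's own documentation.

```python
def triage(rx_power_dbm, rx_power_low_warn_dbm, pre_fec_ber_samples,
           growth_factor=10.0):
    """Classify a link from received power and a pre-FEC BER trend (oldest first)."""
    power_ok = rx_power_dbm >= rx_power_low_warn_dbm
    # "Rising" here means the newest sample is at least growth_factor times the oldest.
    ber_rising = (
        len(pre_fec_ber_samples) >= 2
        and pre_fec_ber_samples[0] > 0
        and pre_fec_ber_samples[-1] / pre_fec_ber_samples[0] >= growth_factor
    )
    if not power_ok:
        return "suspect optical loss"
    if ber_rising:
        return "suspect signal quality"
    return "no action"


# Power is fine but errors are climbing: look beyond simple loss.
print(triage(-4.0, -8.0, [1e-9, 5e-8, 2e-6]))  # suspect signal quality

# Power is below the assumed warning level: start with the loss path.
print(triage(-9.5, -8.0, [1e-9, 1e-9]))        # suspect optical loss
```

The ordering is a design choice: low power is checked first because it is the cheaper hypothesis to confirm in the field.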

The third source category is field operations evidence. This includes inspection reports, fiber test results, optical time-domain reflectometer (OTDR) traces, bit error statistics, and maintenance logs. These sources reveal the physical causes that standards cannot predict on their own: dirty end faces, bent jumpers, stressed trunks, water ingress, poor splices, and panel mispatching. In outdoor or harsh deployments, installation records become even more important because environmental exposure can slowly push a healthy link into failure.

A fourth category comes from incident analysis and reliability studies. These sources are useful because they show recurring patterns across many deployments. They often reveal that the dominant causes are not exotic physics but ordinary operational weaknesses: contamination, incompatible settings, aging components, insufficient margin, and human error during moves or upgrades. They also help prioritize what to inspect first.

Taken together, these source types provide a practical evidence base for explaining link failures in high-speed optical networks. They do more than list possible causes. They show how to prove which cause is actually present, which is the only foundation for reliable remediation.

Final thoughts

High-speed optical networks are essential to modern architectures, yet they remain vulnerable to failures driven by environmental stress, faulty or mismatched hardware, contamination, and operational error. Incomplete evidence does not cause these failures, but it makes them harder to diagnose correctly. Identifying the underlying mechanisms and applying alternative diagnostic approaches when the first path stalls helps build robust systems that can support future infrastructure needs. Professionals with solid knowledge and reliable tools are best placed to protect data center and AI projects against link failures.

Discover expert solutions for high-speed optics, advanced MTP/MPO cabling, and resilient data center infrastructure. Contact ABPTEL today!

Learn more: https://abptel.com/contact/

About us

ABPTEL specializes in high-speed optical connectivity solutions, including advanced transceivers, MTP/MPO cabling systems, DAC & AOC cables, PoE switches, FTTA solutions, and fiber optic tools. Catering to data centers, AI infrastructures, and telecom projects, ABPTEL ensures robust performance and reliability across demanding use cases.