High-speed optical networks are foundational to global connectivity, powering data centers and supporting AI infrastructures. However, link failures in these networks—resulting from physical faults, signal impairments, component failures, operational errors, and external risks—pose critical challenges for latency-sensitive applications and SLA commitments. This article will examine the primary causes of link disruptions, looking at everything from fiber cuts and OSNR limits to hardware reliability and configuration errors. It will also explore strategies for protection and restoration, providing actionable insights for professionals managing data center connectivity, AI workloads, and IT systems. By understanding these interconnected failure mechanisms, engineers, planners, and managers can develop robust, resilient optical systems that safeguard performance and operational uptime.

Where Optical Link Failures Begin: Physical Infrastructure Weak Points in High-Speed Networks

High-speed optical failures often begin far from the transponder or amplifier. They start in the physical path itself, where small defects or sudden damage strip away the margin that dense, high-capacity systems depend on. The most visible cause is the fiber cut. In metro networks, excavation, road work, drilling, and rail projects remain persistent threats. In long-haul routes, cuts are less frequent, but each event is more disruptive because so much traffic rides a single corridor. Submarine links face a similar reality in shallow waters, where anchoring and fishing activity continue to cause a large share of breaks.

Yet many infrastructure failures are not dramatic breaks. They are gradual degradations that raise loss until the link can no longer tolerate normal optical variation. Connector contamination is a leading example. A particle only a few microns wide can add meaningful insertion loss and back-reflection at one interface. In high-speed systems, that extra loss does not stay local. It consumes budget that later chapters will show is already under pressure from tight OSNR and modulation requirements. Poor handling, skipped inspection, and rushed patching can therefore create failures that appear random but are entirely physical in origin. Good field discipline matters as much as design, especially in harsh outdoor environments that demand ruggedized fiber connector practices.

Bending stress is another common root cause. Macrobends from tight routing can create immediate attenuation, especially at monitoring wavelengths. Microbends are subtler. They appear when cables are compressed, trays are overfilled, or temperature cycling changes local stress points. The result is unstable attenuation that is hard to diagnose because it may worsen only under heat, cold, or vibration. Splices behave in much the same way. A splice with acceptable initial loss can drift over time if the tray is stressed, moisture enters the closure, or mechanical creep changes alignment.

Passive infrastructure also ages. Patch panels, splitters, filters, ferrules, and adhesives all introduce opportunities for drift. A network may continue passing traffic while its physical margin quietly shrinks. Then a routine maintenance action, a weather event, or a simple channel add pushes the path into failure.

What makes these root causes especially dangerous is their ability to masquerade as higher-layer or equipment problems. A dirty connector can look like a transceiver issue. A stressed cable can resemble intermittent optical instability. A poor splice can be mistaken for amplifier imbalance. Before analyzing more complex impairment mechanisms, it is essential to see that many high-speed optical link failures begin with the condition, handling, and protection of the fiber plant itself.

How Optical Impairments and OSNR Margins Turn High-Speed Fiber Links into Failures

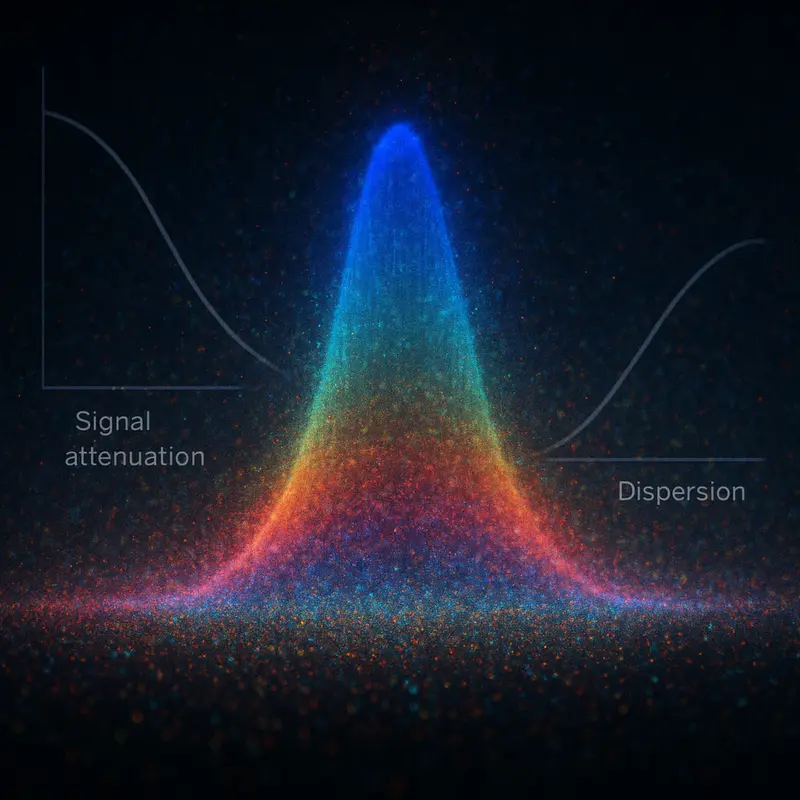

Between obvious physical damage and outright hardware faults lies the quieter failure domain where high-speed optical links degrade until they cross a performance cliff. In modern DWDM systems, that cliff is often defined by OSNR margin. A link may still show light at the receiver, yet no longer carry traffic reliably because amplified spontaneous emission accumulates span by span, filtering penalties build through reconfigurable nodes, and modulation formats demand cleaner signal conditions than older systems ever did. Coherent PDM-QPSK can often survive around 10–12 dB OSNR, but 16QAM and 64QAM require far more headroom. As rates increase, failures become less binary and more margin-driven.

That is why many optical outages begin as a telemetry story before becoming a service story. Rising pre-FEC BER, unstable equalizer behavior, and selective channel degradation usually appear before loss of frame or loss of signal alarms. Soft-decision FEC extends survivability, but it does not remove the underlying physics. It only delays the point where accumulated impairment becomes uncorrectable. Once margins are thin, a minor change in span loss, amplifier gain, or channel loading can trigger a sudden collapse.

Dispersion contributes to that collapse in subtler ways. Chromatic dispersion over standard single-mode fiber grows quickly with distance, and although coherent DSP can compensate large values, residual effects still interact with filtering and phase recovery. Polarization mode dispersion is even more unpredictable because it varies with temperature and vibration. A link may run clean for hours, then produce burst errors when differential group delay spikes. These events are especially dangerous in higher-order modulation, where tolerance is lower and recovery loops are less forgiving.

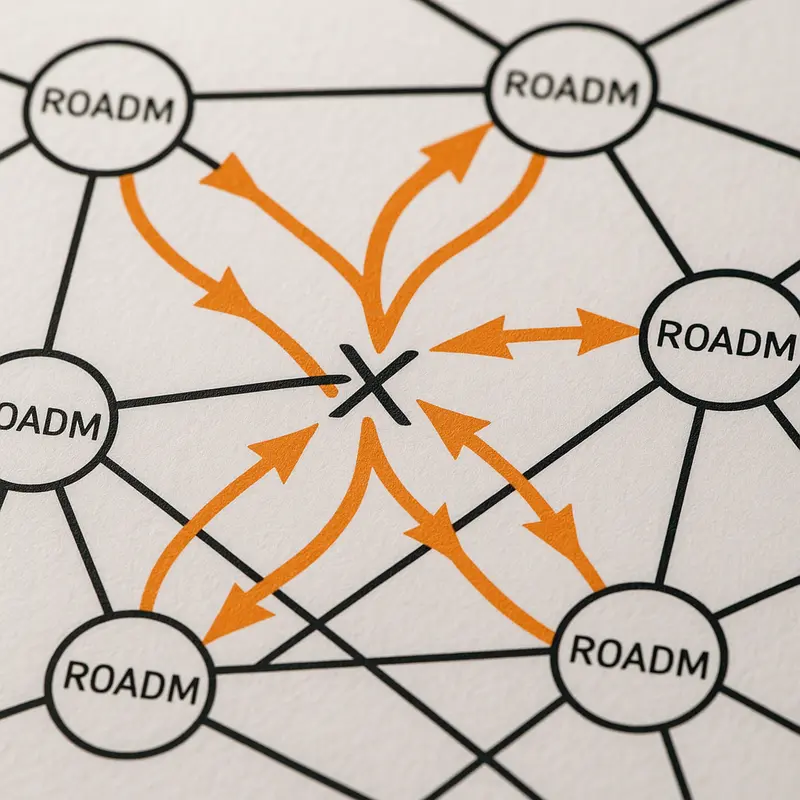

Nonlinear effects further narrow the safe operating window. Higher launch power improves OSNR only up to a point. Beyond that, self-phase modulation, cross-phase modulation, and four-wave mixing distort the waveform and create crosstalk between channels. The result is an OSNR-equivalent penalty that conventional power readings may not fully explain. In dense ROADM networks, passband narrowing compounds the problem. A signal that survives one filter may not survive six or eight cascaded filters with ripple, group-delay variation, and in-band crosstalk. This is one reason 400G migration planning must account for more than raw bandwidth.

Power transients make these impairment chains even more dynamic. When channels are added, dropped, or rerouted, amplifiers and Raman gain profiles can swing by several dB before control loops settle. Some wavelengths recover. Others cross the FEC threshold and fail selectively. In high-speed optical networks, many link failures are not caused by one dramatic event, but by several manageable impairments arriving at the same time and exhausting the system’s remaining optical margin.

When Hardware Becomes the Weak Link: Equipment and Component Reliability in High-Speed Optical Network Failures

If the previous discussion explained how optical margin erodes in flight, this part shifts attention to the devices that create, amplify, switch, and recover the signal. In high-speed optical networks, failures often begin not with a dramatic fiber cut, but with hardware drift so small that it escapes notice until pre-FEC error counts rise and service crosses the threshold from degraded to unusable.

Transceivers are usually the first place where this fragility becomes visible. High-rate optics depend on tightly controlled lasers, thermal regulation, fast converters, and digital signal processing that must stay stable under heavy load. As modules age, laser linewidth can broaden, output power can fall, and bias currents can creep upward. A thermal control fault can push the optics outside their intended operating window, causing phase recovery instability, burst errors, or complete loss of signal. In shorter-reach environments, client-side modules may never show a hard failure at first. Instead, they develop persistent error floors that consume FEC margin and create intermittent faults that are notoriously hard to isolate. That is one reason procurement quality and validation matter, especially during generational upgrades such as 400G transceiver procurement planning.

Amplifiers introduce another layer of risk because they affect many wavelengths at once. A pump diode failure, gain-control instability, or noise figure increase can drop OSNR across an entire span. Even before total failure, gain tilt drift can punish edge channels and create selective outages that look like path-specific issues rather than amplifier trouble. In Raman-assisted systems, pump drift or depletion changes the span gain profile and can trigger network-wide power rebalancing problems. The visible symptom may be channel flapping, but the root cause sits deep inside the amplification chain.

Reconfigurable optical nodes also fail in subtle ways. Switching elements can drift out of calibration, develop control faults, or mis-handle wavelength steering. When that happens, the result is not always a clean outage. It may appear as intermittent attenuation, unexpected crosstalk, or one direction of a protected path performing worse than the other. Cascaded through multiple nodes, small hardware defects accumulate into a service failure that operations teams may first misread as a routing or optical-layer problem.

Reliability is also shaped by what surrounds the optical path. Line cards, backplanes, power shelves, fans, and timing circuits all influence link continuity. A brownout in one site can drop multiple shelves. A cooling fault can force thermal shutdown. Firmware regressions can break framing, FEC alignment, or alarm behavior without any damaged optics at all. Over time, end-of-life drift across these components quietly reduces the 1 to 2 dB of aging margin that designers try to preserve. Once that reserve is gone, the next routine transient becomes a link failure.

When Configuration Mistakes Become Optical Outages: Control-Plane and Operational Causes of High-Speed Link Failure

After hardware reliability is accounted for, many high-speed optical failures still begin with people, software, and provisioning logic. The link itself may be physically intact, yet service collapses because the network was told to operate outside its safe margin. In dense optical systems, a small configuration error can behave like a physical fault. A misaligned wavelength, an incorrect baud-rate profile, or a filter setting that looks minor on paper can push a channel into loss of frame, rising pre-FEC errors, or a full outage.

The danger is magnified in reconfigurable optical networks. Every add, drop, reroute, and capacity turn-up changes channel loading, amplifier balance, and passband conditions. If operators add wavelengths without re-equalizing span power, weaker channels can lose OSNR while stronger ones drive nonlinear penalties. A difference of only 1 to 2 dB between channels may be enough to create selective failures that appear random until telemetry is examined closely. Wrong grid spacing or passband mismatch creates another subtle trap. A channel can remain visible while suffering enough filtering distortion and crosstalk to become unstable under traffic.

Operational handling introduces equally serious risks. Patch panel errors, swapped transmit and receive fibers, poor labeling, and unverified jumpers remain common causes of avoidable downtime. Connector hygiene is also an operational discipline, not only a physical concern. A contaminated interface inserted during maintenance can add enough loss or reflection to trigger alarms several spans away. Guidance on reducing optics interoperability problems becomes especially relevant during mixed-vendor or upgrade scenarios, as discussed in this overview of reducing compatibility risks in optical modules.

The control plane can fail even when optics are healthy. Path computation may ignore shared-risk link groups, placing protected and working paths in the same duct, bridge crossing, or conduit. Restoration logic may be provisioned but not truly diverse. Controller software defects can also compute routes with incompatible spectral assignments or fail to coordinate amplifier and switching states during reprovisioning. The result is a network that appears resilient on diagrams but fails under a single real-world event.

Monitoring policy often determines whether these errors become brief warnings or major incidents. Alarm thresholds that are too loose hide degradation until post-FEC errors appear. Thresholds that are too tight create flapping and mask root causes with noise. Sparse telemetry leaves operators blind to rising corrected-error counts, spectral tilt, or repeated power transients after routine changes. In high-speed optical networks, failures are often not sudden. They are configured, overlooked, and only later exposed by traffic growth, maintenance activity, or a restoration event that removes the last remaining margin.

When Links Fail Beyond the Fiber: Protection, Restoration, and External Risks in High-Speed Optical Networks

If operational mistakes often trigger avoidable outages, protection and restoration determine whether a fault becomes a brief disruption or a major service failure. In high-speed optical networks, resilience is never just a backup path on paper. It depends on how quickly traffic can move, how independent alternate routes really are, and how the optical layer behaves during sudden change. Carrier targets often aim for restoration within 50 ms, yet real outcomes depend on amplifier settling, ROADM reconfiguration, control coordination, and whether the spare path has enough OSNR margin to carry restored wavelengths without crossing FEC limits.

This is why route diversity must be judged physically, not logically. Two paths may look separate in a planning tool while still sharing a conduit, bridge crossing, landing station, or amplifier site. A single cut, fire, flood, or station power failure can then remove both the working and protection path at once. Shared risk link group planning is essential because correlated failures are common in metro rings, long-haul corridors, and submarine landing approaches. Even well-designed mesh restoration can introduce brief instability. Add-drop events and rapid channel reroutes may cause power transients of several dB, spectral tilt, and temporary loading imbalance before gain control loops settle. During that window, links already operating near their margin may show burst errors, frame loss, or selective wavelength failure.

External risks make these dynamics more severe because they are often abrupt, widespread, and hard to predict. Terrestrial fiber remains vulnerable to construction damage, road works, rail projects, and utility excavation. Aerial plant faces wind, ice, wildfire, and lightning exposure. In coastal and submarine systems, shallow-water sections are especially exposed to fishing activity and anchor drags. Flooding can disable huts and repeater power systems even when the fiber itself survives. Landslides and seismic events may sever multiple spans at once, stretching repair times far beyond SLA assumptions. In some regions, theft, sabotage, and geopolitical tension also raise the risk profile of cross-border routes and cable landing infrastructure.

The operational consequence is that availability depends as much on recovery design as on raw hardware quality. Five nines allows only about 5.26 minutes of annual downtime, leaving little room for slow fault isolation or poorly tested failover. Operators that routinely simulate restoration, verify true path separation, and precompute reroute behavior usually contain damage better than those relying on nominal redundancy alone. Strong telemetry also matters here. OTDR, spectrum monitoring, and FEC trends help teams distinguish between a clean cut, a degraded protection path, and a restoration event that introduced new optical penalties. For a broader discussion of network outage mechanisms, see what causes link failures in optical networks.

Final thoughts

By understanding the technical, operational, and external causes of link failures in high-speed optical networks, stakeholders can adopt strategies to prevent outages and ensure robust connectivity. From protecting physical infrastructure and mitigating optical impairments to investing in reliable equipment and disciplined operations, proactive measures reduce risks and enhance resilience. Route diversity, predictive analytics, and tested restoration protocols cement reliability in critical applications like AI workloads and data center operations, emphasizing the vitality of resilient architecture in modern optical systems.

Explore ABPTEL’s high-speed optical solutions for resilient data center interconnects, transceivers, and MTP/MPO cabling systems.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides state-of-the-art optical transceivers, MTP/MPO connectivity systems, DAC and AOC cables, PoE switches, FTTA solutions, and fiber tools for robust data center, AI, and telecom infrastructures. Our solutions deliver reliable high-speed connectivity with optimized performance, backed by industry-leading expertise to meet SLA demands.