Data center network demands are surging due to AI/ML, large-scale storage, microservices, and east–west traffic patterns. While 100G networks remain common, congestion risks, operational costs, and limited scalability often make them a poor fit for modern workloads. Migrating to 400G is no longer optional; it is a strategic necessity to improve performance density and efficiency while controlling power, space, and thermal footprints. This article explores a structured approach to 400G migration, covering optics and cabling selection, switch silicon and port strategies, fabric design evolution, financial sustainability, and phased execution. Each section presents actionable insights for data center network engineers, system integrators, planners, and managers, supporting a robust, phased transition that minimizes risk and leaves room for future growth.

Choosing the Right 400G Optics and Cabling Path for a Low-Risk Data Center Migration

A successful move from 100G to 400G is shaped early by physical-layer choices. Optics, modulation, and cabling determine not only reach, but also how safely you can phase the migration. The wrong choice can lock the project into expensive recabling, weak interoperability, or limited upgrade options later.

The first decision is usually distance and fiber plant. For short intra-data-center links, existing multimode runs may look attractive because they seem reusable. That works in some cases, but 400G over multimode is less forgiving than 100G. Traditional 100G SR4 used NRZ across four 25 Gb/s lanes, while 400G relies on PAM4 signaling, typically at 50 Gb/s per lane across eight lanes or 100 Gb/s per lane across four, with mandatory forward error correction. PAM4 carries two bits per symbol, which raises density, but its tighter signal-to-noise margin leaves less tolerance for dirty connectors, marginal links, and loose handling. FEC overhead and a small latency increase are usually acceptable, yet they still matter for storage-heavy or low-latency fabrics.

That is why many migration plans increasingly favor single-mode fiber for new 400G builds. For links up to 500 meters, 400G DR4 often offers the most practical balance of cost, reach, and flexibility. It also supports one of the cleanest transition paths: a new 400G switch port can break out into four 100G links, letting upgraded spines connect to existing 100G leaves without a disruptive cutover. If your plant is already duplex-focused and fiber availability is tight, 400G FR4 becomes attractive because it runs over duplex connectors and simplifies patching, even if module cost and power are typically higher. A useful comparison of these tradeoffs appears in this guide to 400G DR4, FR4, and LR4 transceivers.

Connector strategy matters as much as optics selection. Parallel 400G options such as DR4 and SR8 depend on MPO connectivity, and that brings polarity planning, cassette design, and trunk compatibility into the migration plan. Base-8, Base-12, and Base-16 ecosystems do not mix cleanly unless the conversion between them is planned in advance. A plant built for 100G SR4 may not cleanly support 400G SR8 without new trunks or patching changes. Duplex options avoid much of that complexity, which is why they often win in brownfield environments.

Loss budgets must be checked link by link, not assumed from data sheets. Every mated pair, cassette, and panel consumes margin. At 400G, connector cleanliness and lane-level monitoring become operational requirements, not best practices. The team should map each current link to a target optic, confirm real distances, count connection points, and reserve enough margin for aging and maintenance events. Those choices then feed directly into the next step: matching switch form factors, thermals, and breakout behavior to the physical design.
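The link-by-link check described above can be sketched in a few lines of Python. This is a minimal planning aid, not a substitute for measured plant data: the attenuation figure, the per-connector loss, the channel budgets, the reserve, and the link names are all assumptions for illustration and should be replaced with values from the actual optics data sheets and test results.

```python
from dataclasses import dataclass

# Assumed planning values; replace with data sheet and measured figures.
FIBER_LOSS_DB_PER_KM = 0.4   # assumed single-mode attenuation
MATED_PAIR_LOSS_DB = 0.5     # assumed worst-case loss per mated connector pair
CHANNEL_BUDGET_DB = {"DR4": 3.0, "FR4": 4.0}  # assumed channel insertion loss limits


@dataclass
class Link:
    name: str          # hypothetical link identifier
    optic: str         # target optic type, a key of CHANNEL_BUDGET_DB
    length_m: float    # confirmed real distance, not the drawing estimate
    mated_pairs: int   # every panel, cassette, and patch point on the path


def estimated_loss_db(link: Link) -> float:
    """Fiber attenuation plus connector loss for one link."""
    fiber = link.length_m / 1000 * FIBER_LOSS_DB_PER_KM
    connectors = link.mated_pairs * MATED_PAIR_LOSS_DB
    return fiber + connectors


def margin_db(link: Link, reserve_db: float = 0.5) -> float:
    """Margin left after estimated loss and a reserve for aging and maintenance."""
    return CHANNEL_BUDGET_DB[link.optic] - estimated_loss_db(link) - reserve_db


links = [
    Link("spine1-leaf07", "DR4", 300, 4),
    Link("spine1-leaf22", "DR4", 450, 6),
]

for link in links:
    m = margin_db(link)
    status = "OK" if m >= 0 else "REVIEW"
    print(f"{link.name}: loss={estimated_loss_db(link):.2f} dB, margin={m:.2f} dB, {status}")
```

With these assumed numbers, the second link fails on connector count rather than distance, which is the kind of result that data sheet reach figures alone would not reveal.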

Choosing Switch Silicon, 400G Form Factors, and Breakout Paths for a Low-Risk Data Center Migration

A practical 100G-to-400G plan depends as much on switching platforms and host interfaces as on optics. The central question is not simply whether a switch supports 400G. It is whether its silicon, port design, and thermal profile let you scale cleanly through a mixed-speed migration. Higher-capacity ASICs increase radix and throughput density, but they also compress failure domains and raise the importance of table scale, buffering, and breakout flexibility. A platform that looks efficient on paper can become restrictive if it limits per-port speed changes, breakout modes, or forward error correction behavior.

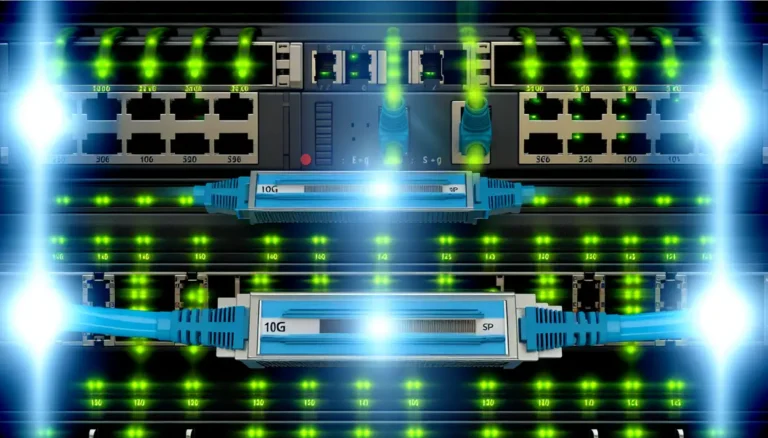

For most migrations, port flexibility matters more than peak bandwidth. Many 400G-capable systems can run ports as 100G, 200G, or 400G, which is critical when existing leaves, servers, and interconnect paths will not all change at once. That flexibility lets operators introduce 400G spines first, then connect them to legacy 100G leaves through 4x100G breakout. This staged model avoids a disruptive fabric rebuild and preserves familiar operational patterns during early deployment. It also protects capital already invested in 100G access layers while creating a clear path to later link consolidation.

Form factor choice shapes that path. QSFP-DD cages accept existing QSFP28 modules, so the form factor often fits environments where backward compatibility with deployed 100G optics and operational habits is important. OSFP can offer better thermal headroom, which becomes valuable as module power rises. The decision should be tied to airflow design, rack inlet temperatures, and the expected optics mix over the platform life. A migration that begins with moderate-power 400G modules may later need hotter long-reach or coherent variants, so thermal margin is not a side issue. It is part of platform longevity. For a deeper comparison of interface options, see this guide to 400G QSFP-DD vs OSFP, including SR8, DR4, FR4, and LR4.

Breakout strategy then becomes the bridge between generations. A 400G port split into four 100G links is often the cleanest migration tool because it aligns with existing leaf uplinks and common routing designs. It also supports measured cutovers on one fabric plane at a time. Still, breakout is not universally free. Some platforms impose lane-group restrictions, licensing limits, or reduced port availability when mixed modes are enabled. Those details affect real port yield and should be modeled before procurement.
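One way to model real port yield before procurement is a small calculation like the sketch below. The `blocks_neighbor` flag represents a hypothetical restriction in which each port placed in breakout mode disables one adjacent port; actual platforms differ, so the rule and the port counts here are assumptions to be replaced with the vendor's documented behavior.

```python
def port_yield(total_ports: int, breakout_ports: int, blocks_neighbor: bool) -> dict:
    """Count usable links on a hypothetical fixed 400G switch.

    blocks_neighbor models an assumed restriction where every port in
    4x100G breakout mode makes one adjacent port unusable.
    """
    disabled = breakout_ports if blocks_neighbor else 0
    if breakout_ports + disabled > total_ports:
        raise ValueError("not enough ports for the requested breakout count")
    native_400g = total_ports - breakout_ports - disabled
    links_100g = breakout_ports * 4
    return {
        "links_100g": links_100g,
        "native_400g": native_400g,
        "usable_gbps": links_100g * 100 + native_400g * 400,
    }


# Same 32-port switch, eight ports in breakout, with and without the restriction.
print(port_yield(32, 8, blocks_neighbor=False))
print(port_yield(32, 8, blocks_neighbor=True))
```

Under the assumed restriction, the same switch delivers a quarter less usable bandwidth, which changes how many spines the design needs.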

Silicon selection should therefore reflect workload behavior, not only headline speed. Environments with AI training, storage bursts, or heavy east-west contention benefit from stronger buffering and better telemetry. Lower-latency fabrics may prioritize simpler forwarding paths and tighter queue control. In either case, the right switch is the one that lets 400G enter gradually, interoperate with 100G cleanly, and support the congestion policies the fabric will need once faster links begin carrying much larger bursts.

Designing the 400G Fabric: Architecture, QoS, and Congestion Control for a Low-Risk Migration

The architectural shift from 100G to 400G changes more than link speed. It changes how traffic is distributed, where congestion forms, and how much margin the fabric has during bursts. A migration plan should therefore treat fabric design and traffic control as one decision set. Faster links can reduce spine count and simplify topology, but they also concentrate more traffic per port. That makes oversubscription targets, ECMP behavior, and queue design far more visible in production.
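A short worked calculation shows why concentration matters. The sketch below compares a hypothetical leaf with 2,400 Gb/s of server-facing capacity, served either by eight 100G uplinks or by two 400G uplinks. The leaf size and uplink counts are illustrative assumptions, not a recommended design.

```python
def oversubscription(downlink_gbps: float, uplink_count: int,
                     uplink_gbps: float, failed_uplinks: int = 0) -> float:
    """Ratio of server-facing capacity to surviving uplink capacity."""
    active = uplink_count - failed_uplinks
    if active <= 0:
        raise ValueError("no active uplinks remain")
    return downlink_gbps / (active * uplink_gbps)


DOWNLINK_GBPS = 24 * 100  # hypothetical leaf: 24 x 100G server-facing ports

for label, count, speed in [("8 x 100G", 8, 100), ("2 x 400G", 2, 400)]:
    healthy = oversubscription(DOWNLINK_GBPS, count, speed)
    degraded = oversubscription(DOWNLINK_GBPS, count, speed, failed_uplinks=1)
    print(f"{label}: healthy {healthy:.2f}:1, one uplink down {degraded:.2f}:1")
```

Both designs start at 3:1, but a single uplink failure moves the 100G design to about 3.43:1 and the 400G design to 6:1. Fewer, faster links deliver the same capacity with a larger share of it riding on each port.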

A practical migration usually keeps the existing spine-leaf model and upgrades it in phases. The safest pattern is to preserve A and B planes, move one plane to 400G first, and keep the other at 100G until validation is complete. This limits blast radius and gives operations a clean rollback path. Many teams start with new 400G spines and connect them to existing 100G leaves through breakout links, then collapse those links into native 400G as leaves are refreshed. During this mixed-speed period, the key question is not only whether links come up, but whether path diversity remains healthy. With fewer physical uplinks carrying more bandwidth, weak hashing can create persistent hot spots even when total capacity looks sufficient on paper.

That is why ECMP validation should be part of architecture planning, not a post-cutover test. Flow distribution must be checked with realistic elephant and mice traffic mixes, especially for storage, container platforms, and AI clusters. If the environment already struggles with incast or microbursts, the move to 400G will expose those patterns faster. Deeper buffers may help absorb bursts, but they are only one part of the answer. Queue policies, shared buffer thresholds, and congestion signaling must be tuned for higher-rate interfaces so that bursts are signaled early rather than turning into tail drops.
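Before lab traffic is available, a rough simulation can show how sensitive a design is to hashing. The sketch below spreads a mix of small and large flows across uplinks using CRC32 as a stand-in hash. Real switch ASICs use their own hash functions and field selections, so this illustrates the effect only and does not predict any specific platform. The flow counts, rates, and address ranges are made-up assumptions.

```python
import random
import zlib


def make_flows(n_mice: int, n_elephants: int, seed: int = 1) -> list:
    """Build synthetic (five_tuple, gbps) flows; all values are illustrative."""
    rng = random.Random(seed)
    flows = []
    for i in range(n_mice + n_elephants):
        five_tuple = (
            f"10.0.{rng.randrange(256)}.{rng.randrange(256)}",  # source IP
            f"10.1.{rng.randrange(256)}.{rng.randrange(256)}",  # destination IP
            rng.randrange(1024, 65536),                         # source port
            4791,                                               # destination port
            17,                                                 # protocol (UDP)
        )
        gbps = 0.01 if i < n_mice else 20.0  # assumed mouse and elephant rates
        flows.append((five_tuple, gbps))
    return flows


def link_loads(flows: list, n_uplinks: int) -> list:
    """Assign each flow to an uplink by hashing its five-tuple."""
    loads = [0.0] * n_uplinks
    for five_tuple, gbps in flows:
        key = "|".join(map(str, five_tuple)).encode()
        loads[zlib.crc32(key) % n_uplinks] += gbps
    return loads


def imbalance(loads: list) -> float:
    """Busiest link divided by the mean; 1.0 means a perfectly even spread."""
    return max(loads) / (sum(loads) / len(loads))


flows = make_flows(n_mice=1000, n_elephants=12)
for n in (8, 4, 2):
    loads = link_loads(flows, n)
    print(n, "uplinks:", [round(x, 1) for x in loads], "imbalance", round(imbalance(loads), 2))
```

Varying the elephant count and the seed gives a feel for how a handful of large flows can dominate per-link load even when aggregate capacity looks sufficient.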

For lossless or near-lossless fabrics, this becomes even more important. RoCE environments need Priority Flow Control and Explicit Congestion Notification settings that match actual buffer depth and traffic patterns. Poor tuning can spread pause storms or hide congestion until latency jumps. In many cases, ECN-based control should do most of the work, while pause mechanisms stay tightly bounded. Teams should also monitor RS-FEC counters, pre-FEC error trends, queue occupancy, and pause events as first-class migration metrics. These signals often reveal optics or congestion issues before applications report pain.
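As an illustration of treating FEC counters as a first-class metric, the sketch below turns a corrected-error counter delta into an approximate pre-FEC bit error rate and classifies the link. It assumes the platform exposes a count of corrected bits. Many platforms report corrected symbols or codewords instead, which would need a different conversion. The line rate, the warning level, and the failure limit are assumptions to confirm against the optic and switch documentation.

```python
ASSUMED_LINE_RATE_BPS = 425e9  # assumed aggregate line rate of a 400G port


def pre_fec_ber(corrected_bits_delta: int, interval_s: float,
                line_rate_bps: float = ASSUMED_LINE_RATE_BPS) -> float:
    """Approximate pre-FEC BER from the corrected-bit count over one interval."""
    return corrected_bits_delta / (interval_s * line_rate_bps)


def classify(ber: float, uncorrected_delta: int,
             warn_ber: float = 1e-6, limit_ber: float = 2.4e-4) -> str:
    """Return 'ok', 'watch', or 'fail' using assumed thresholds."""
    if uncorrected_delta > 0 or ber >= limit_ber:
        return "fail"   # FEC is no longer hiding the problem, or is close to its limit
    if ber >= warn_ber:
        return "watch"  # still corrected, but worth trending and inspecting
    return "ok"


ber = pre_fec_ber(corrected_bits_delta=425_000, interval_s=10)
print(f"pre-FEC BER ~ {ber:.1e}: {classify(ber, uncorrected_delta=0)}")
```

Tracking the trend of this value per link, rather than a single sample, is what makes it useful as an early warning.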

Strong observability is what turns a 400G design into an operable fabric. Streaming telemetry, per-lane optics data, and latency tracing provide the evidence needed to adjust thresholds and confirm stability under load. For teams refining those physical and traffic behaviors together, this guide to 400G DR4, FR4, and LR4 transceivers is a useful companion to fabric planning.

Counting the Real Cost of 400G Migration: Power, Cooling, Space, and Sustainability in Data Center Planning

A sound 100G-to-400G migration plan lives or dies on economics that extend far beyond switch and optics pricing. The most effective designs measure cost per delivered gigabit, cost per rack unit, and cost per usable watt, because 400G usually raises component power while reducing the number of links, devices, and patching elements needed for the same fabric capacity. That tradeoff is often favorable, but only when modeled across the full system. A 400G port may draw more power than a 100G port, yet four 100G links usually consume more optics, more faceplate space, more patch panel positions, and more operational effort than one consolidated 400G path. The right comparison is not port versus port. It is fabric outcome versus fabric outcome.
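A fabric-level comparison of this kind can be sketched as follows. The relative cost and wattage figures are placeholders chosen only to show the arithmetic. They are not market prices or measured power, and a real model would also include switch ports, patching, and labor.

```python
import math
from dataclasses import dataclass


@dataclass
class LinkOption:
    name: str
    gbps: int
    optic_cost: float   # placeholder relative cost per module
    optic_watts: float  # placeholder power per module


def fabric_totals(option: LinkOption, target_gbps: int) -> dict:
    """Links, modules, and per-gigabit figures needed to reach a capacity target."""
    links = math.ceil(target_gbps / option.gbps)
    modules = links * 2  # one module at each end of every link
    return {
        "links": links,
        "modules": modules,
        "cost_per_gbps": modules * option.optic_cost / target_gbps,
        "watts_per_gbps": modules * option.optic_watts / target_gbps,
    }


TARGET_GBPS = 12_800  # hypothetical fabric capacity target
options = [
    LinkOption("100G links", 100, optic_cost=1.0, optic_watts=4.0),
    LinkOption("400G links", 400, optic_cost=3.0, optic_watts=10.0),
]
for opt in options:
    print(opt.name, fabric_totals(opt, TARGET_GBPS))
```

With these placeholder inputs, each 400G module costs and draws more than a 100G module, yet the fabric needs a quarter as many of them, so both per-gigabit figures fall.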

This is why migration budgets should separate direct CapEx from avoided CapEx. Direct spending includes new switching platforms, higher-speed optics, breakout harnesses, patching changes, and possible fiber remediation. Avoided spending includes deferred spine expansion, fewer line cards or fixed switches, fewer optics pairs, and less rack space devoted to network gear. In many environments, 400G improves throughput density enough to delay a new row, a new power circuit, or a larger cooling retrofit. That effect can matter more than the transceiver line item. When duplex fiber is already widespread, teams may favor FR4 for cabling simplicity despite a higher module premium. Where new single-mode plant is practical, DR4 often produces a better long-term balance of flexibility and cost efficiency. For teams comparing those paths, this overview of 400G DR4, FR4, and LR4 transceivers is especially relevant.

Power and thermal planning must be equally explicit. Higher-rate pluggables can move from low single-digit watts at 100G into the high single digits or low teens at 400G. That changes airflow assumptions, fan curves, and allowable rack inlet temperatures. A migration can fail operationally even when link design is correct, simply because thermal density was underestimated. Planners should model worst-case populated chassis, not average population, and include fan power, retimers, and cable management constraints. Short-reach copper may reduce optic cost, but active electrical options also add heat and handling complexity.
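The gap between average and worst-case population is easy to quantify. The sketch below uses assumed figures throughout: a 32-port chassis, a 400 W base load covering ASIC and fans, and module powers of 8 W and 12 W. None of these are vendor specifications.

```python
def chassis_watts(ports: int, module_watts: float, base_watts: float,
                  populated_fraction: float = 1.0) -> float:
    """Estimated chassis draw: base system load plus populated pluggables."""
    return base_watts + ports * populated_fraction * module_watts


# Average case: 60% of ports populated with moderate-power modules.
average = chassis_watts(32, module_watts=8.0, base_watts=400.0, populated_fraction=0.6)
# Worst case: every port populated with the hottest module the platform may carry.
worst = chassis_watts(32, module_watts=12.0, base_watts=400.0)

print(f"average {average:.1f} W, worst case {worst:.1f} W, gap {worst - average:.1f} W")
```

Rack power and cooling sized to the average figure would leave no room for the later optics mix, which is why the worst-case number is the one to plan against.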

Sustainability fits naturally into this analysis. 400G can lower watts per transported bit, shrink the number of devices required for a target fabric size, and reduce ongoing material use in optics and cabling. Those gains are real only if procurement, reuse, and retirement are handled deliberately. Decommissioned 100G optics may still serve test beds, lower-tier clusters, or spares pools. Equipment that cannot be reused should enter certified recycling streams. The most responsible migration plan is not the one with the lowest purchase price. It is the one that improves network capacity while reducing stranded hardware, cooling overhead, and long-term operational waste.

Executing a Low-Risk 100G to 400G Migration: Phasing, Validation, and Cutover Control

The strongest 100G to 400G migration plans avoid a single cutover event. They turn the rollout into a controlled sequence of small, reversible changes. In practice, that usually means upgrading one fabric plane first, keeping the other plane stable, and using mixed-speed operation to preserve service continuity. A common pattern is to refresh the spine layer before the leaf layer, then run new 400G ports as 4x100G breakouts toward existing leaves. This creates immediate headroom without forcing a full fabric replacement. Later, those same paths can be collapsed into native 400G links when leaf switches are replaced.

That phased model only works if every step has explicit entry and exit criteria. Before any hardware is installed, build a link-level migration map that records distance, fiber type, connector type, patch panel count, and target optic for each path. This prevents last-minute surprises such as an LC-based duplex path being assigned a parallel optic, or a multimode segment being stretched beyond practical 400G reach. It also helps define rollback. If a new uplink shows abnormal corrected FEC growth, poor receive power, or uneven ECMP utilization, the safest response may be to revert that path to a known-good breakout state rather than debug under production pressure.
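A link-level migration map lends itself to automated checks. The sketch below validates each record against a small table of optic properties and flags the mismatches mentioned above. The record fields and path identifiers are hypothetical, and the reach and connector values are nominal figures that should be confirmed against the data sheet for the specific module and fiber grade.

```python
from dataclasses import dataclass

# Nominal optic properties for illustration; confirm against module data sheets.
OPTICS = {
    "DR4": {"fiber": "SMF", "connector": "MPO", "max_m": 500},
    "FR4": {"fiber": "SMF", "connector": "LC", "max_m": 2000},
    "SR8": {"fiber": "MMF", "connector": "MPO", "max_m": 100},
}


@dataclass
class PathRecord:
    path_id: str        # hypothetical identifier for the physical path
    distance_m: float
    fiber: str          # "SMF" or "MMF"
    connector: str      # "LC" or "MPO"
    panels: int         # patch panel count, kept for loss budgeting
    target_optic: str


def validate(rec: PathRecord) -> list:
    """Return a list of problems; an empty list means the record is consistent."""
    spec = OPTICS[rec.target_optic]
    problems = []
    if rec.fiber != spec["fiber"]:
        problems.append(f"fiber mismatch: path is {rec.fiber}, optic needs {spec['fiber']}")
    if rec.connector != spec["connector"]:
        problems.append(f"connector mismatch: path is {rec.connector}, optic needs {spec['connector']}")
    if rec.distance_m > spec["max_m"]:
        problems.append(f"reach exceeded: {rec.distance_m} m > {spec['max_m']} m")
    return problems


records = [
    PathRecord("row3-p12", 120, "SMF", "LC", 2, "DR4"),   # duplex path, parallel optic
    PathRecord("row9-p01", 150, "MMF", "MPO", 3, "SR8"),  # multimode stretched too far
    PathRecord("row3-p13", 120, "SMF", "MPO", 2, "DR4"),
]
for rec in records:
    print(rec.path_id, validate(rec) or "ok")
```

Running the check on every revision of the map keeps assignment errors out of the maintenance window.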

Testing should mirror production conditions, not just vendor compliance. Lab validation needs more than link-up checks. It should include optical margin verification, bit error testing, mixed packet sizes, sustained line-rate traffic, and congestion scenarios that expose microbursts. For storage and RDMA environments, measure latency with FEC enabled and watch queue behavior under incast. In production pilots, stream per-lane telemetry, DOM values, RS-FEC corrected and uncorrected counters, and interface discard trends. Those signals often reveal weak optics, dirty connectors, or polarity mistakes before users notice impact. Cleanliness and loss control are especially important at 400G, so connector inspection and disciplined handling should be part of every change workflow; this is where a practical guide to keeping MPO and MTP connectors clean becomes directly relevant.

Risk mitigation also extends beyond the maintenance window. Define canary racks, soak periods, and clear success thresholds such as error-free runtime, stable queue occupancy, and acceptable latency deltas. Qualify more than one compliant optics source where possible, because supply constraints can derail even a sound design. Most importantly, make operations teams fluent in breakout logic, PAM4 behavior, and FEC interpretation. A 400G rollout succeeds less through speed of installation than through disciplined execution, rapid detection, and the ability to back out one precise step at a time.
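Success thresholds are easier to enforce when written down as an explicit gate. The sketch below evaluates one canary rack against assumed criteria. The 72-hour soak, the 2 µs latency delta, and the 60% queue occupancy limit are placeholders for whatever the team agrees on, not recommended values.

```python
def canary_passes(soak_hours: float, uncorrected_fec: int,
                  p99_latency_us: float, baseline_p99_us: float,
                  peak_queue_pct: float,
                  min_soak_hours: float = 72.0,
                  max_latency_delta_us: float = 2.0,
                  max_queue_pct: float = 60.0):
    """Return (passed, reasons) for one canary rack using assumed thresholds."""
    reasons = []
    if soak_hours < min_soak_hours:
        reasons.append("soak period not complete")
    if uncorrected_fec > 0:
        reasons.append("uncorrected FEC errors observed")
    if p99_latency_us - baseline_p99_us > max_latency_delta_us:
        reasons.append("latency delta above threshold")
    if peak_queue_pct > max_queue_pct:
        reasons.append("queue occupancy above threshold")
    return (not reasons, reasons)


print(canary_passes(96, 0, 11.0, 10.0, 35.0))
print(canary_passes(24, 2, 15.0, 10.0, 80.0))
```

Recording the returned reasons alongside each phase gives the rollback decision an audit trail.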

Final thoughts

Migration from 100G to 400G networks is pivotal for meeting modern data center demands, but it depends on careful planning. From selecting the right optics and cabling to rethinking fabric architectures and managing sustainability, the process calls for a phased, risk-controlled approach. By integrating economic modeling, rigorous testing, and operational readiness, planners, engineers, and managers can keep the transition low-risk while preparing for future advancements such as 800G. The right strategy today will enable robust performance and efficiency for years to come.

About us

ABPTEL provides cutting-edge solutions for data centers, including high-speed optical transceivers, MTP/MPO cabling systems, AOCs/DACs, PoE switches, fiber tools, and FTTA solutions for AI, telecom, and network projects.