Modern data centers sit at the heart of IT, AI, and enterprise workloads, and the choice of fiber optic cabling and transceivers has become a strategic infrastructure decision. Single-mode fiber (SMF) and multimode fiber (MMF) each offer distinct advantages, and the better choice depends on speed, reach, power, and future scalability. For network engineers, AI planners, and IT managers, understanding these distinctions is crucial for building high-performing, scalable architectures. This article explores the interplay between fiber standards, total cost of ownership (TCO), cabling topology, and future-proofing strategies to help you select the right path for your next upgrade. Each section covers one set of considerations: standards and distances, cost, cabling topology, operational reliability, and supply chain challenges, all with the demands of 400G, 800G, and beyond in mind.

Matching Reach, Bandwidth, and Ethernet Standards When Choosing Single-Mode vs Multimode for Data Center Upgrades

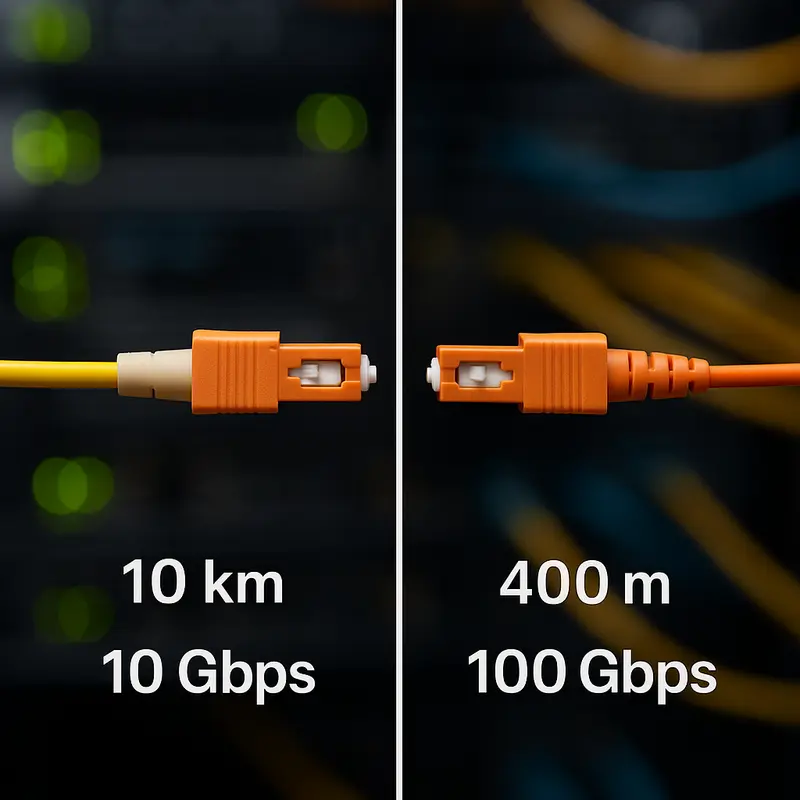

The technical divide between single-mode and multimode starts with a simple question: how far must each link run at the speed you need today and tomorrow? That question quickly pulls in standards, lane counts, connector types, and upgrade paths. In most data centers, multimode remains strongest on very short links. OM3 and OM4 have long supported 10G and 40G short-reach traffic well, and they still fit many 25G and 100G deployments when distances stay inside familiar limits. For example, 10G short-reach links can stretch to roughly 300 m on OM3 and 400 m on OM4, while 100G short-reach multimode links usually fall closer to 70 to 100 m, depending on fiber grade.

That shrinking reach at higher rates is where many upgrade decisions change. Once lane speeds moved beyond older NRZ designs and into PAM4 signaling, the practical advantage shifted toward single-mode for many fabrics. OS2 handles 100G, 200G, and 400G with much more distance headroom. A 100G duplex single-mode link can commonly reach 500 m or 2 km depending on the optical class, while 400G parallel single-mode links can cover 500 m and support clean breakout options. In a leaf-spine design where many paths sit between 100 and 150 m, multimode may still work at lower rates such as 40G, but those lengths sit at or beyond the 70 to 100 m typical of 100G short-reach multimode, so single-mode gives more margin and a clearer migration path.
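The reach figures quoted so far can be turned into a quick feasibility check. The following is a minimal sketch rather than a planning tool: the table holds only the nominal reaches mentioned in this section, the optic labels (`SR`, `SR4`, `DR`, `FR`, `DR4`) are shorthand, and `margin_m` is a hypothetical safety allowance that is not taken from any standard.

```python
# Nominal reach in meters, using only the figures quoted in this article.
# Keys are (speed, optic label, fiber grade); the labels are shorthand.
NOMINAL_REACH_M = {
    ("10G", "SR", "OM3"): 300,
    ("10G", "SR", "OM4"): 400,
    ("100G", "SR4", "OM3"): 70,
    ("100G", "SR4", "OM4"): 100,
    ("100G", "DR", "OS2"): 500,
    ("100G", "FR", "OS2"): 2000,
    ("400G", "DR4", "OS2"): 500,
}


def feasible_options(speed, link_length_m, margin_m=0):
    """Return (optic, fiber, reach_m) tuples whose nominal reach covers the link.

    margin_m is a hypothetical safety allowance added to the link length.
    """
    options = []
    for (entry_speed, optic, fiber), reach_m in NOMINAL_REACH_M.items():
        if entry_speed == speed and reach_m >= link_length_m + margin_m:
            options.append((optic, fiber, reach_m))
    # List the shortest sufficient reach first.
    return sorted(options, key=lambda option: option[2])
```

With this table, `feasible_options("100G", 90)` still includes the OM4 option, while `feasible_options("100G", 120)` returns only the two OS2 entries. Real designs should confirm reach against the transceiver datasheet and the measured channel loss.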

Standards matter because they define not only distance, but also how many fibers a link consumes. Multimode short-reach at 40G and 100G often uses parallel optics over MPO, typically with eight active fibers. Higher-speed multimode options can demand even more, such as 16 fibers for an eight-lane 400G short-reach link. Single-mode is more flexible here. Some links use duplex LC, which simplifies patching, while others use MPO for parallel designs and breakouts. That flexibility becomes valuable when planning 400G to 4x100G transitions or preparing for 800G architectures.
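Fiber consumption is easy to estimate once each link style is reduced to its active fiber count. The sketch below assumes three styles: duplex (2 fibers), four-lane parallel (8 fibers, as described above), and eight-lane parallel (16 fibers). The style names and the 144-fiber trunk size are illustrative assumptions, not values from a specific product.

```python
import math

# Active fibers per link for each interface style.
ACTIVE_FIBERS = {
    "duplex": 2,        # one transmit fiber and one receive fiber (LC)
    "parallel_x4": 8,   # four lanes in each direction over MPO
    "parallel_x8": 16,  # eight lanes in each direction over MPO
}


def fibers_required(link_counts):
    """Total active fibers for a mapping of interface style -> number of links."""
    return sum(ACTIVE_FIBERS[style] * count for style, count in link_counts.items())


def trunks_required(total_fibers, fibers_per_trunk=144):
    """Number of trunk cables needed; 144 fibers per trunk is a placeholder."""
    return math.ceil(total_fibers / fibers_per_trunk)
```

For example, 64 four-lane parallel links need 512 active fibers, while 64 duplex links need 128. The estimate counts active fibers only. When an eight-fiber link rides on a 12-fiber MPO connector, four positions stay dark, so the installed fiber count will be higher than this figure.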

The standards roadmap also explains why many upgrade teams now favor OS2 in new builds. The ecosystem around short-reach and mid-reach single-mode optics has expanded rapidly, especially for 100G and 400G classes. Multimode still has a valid role, especially where an existing OM4 plant is already installed and most runs are short. But its future at very high speeds depends more on niche variants, tighter reach limits, and careful compatibility planning.

This is why standards should be read as a migration map, not a spec sheet. If your links will remain under 70 to 100 m and your installed base is multimode, reuse can be sensible. If your upgrade horizon includes broad 400G or 800G adoption, single-mode aligns better with the direction of the market and the logic explained in this deeper guide to choosing single-mode vs multimode.

What the Budget Really Buys: Economics, Power, and TCO in Choosing Single-Mode vs Multimode for Data Center Upgrades

The cost gap between single-mode and multimode is no longer as simple as “cheap optics versus expensive optics.” That rule held for years when short-reach multimode links paired well with lower-cost transmitters, especially at 10G and 40G. But data center upgrades now happen in a very different market. As speeds move to 100G, 400G, and beyond, the economics shift from module price alone to a broader question: what architecture delivers the lowest cost per transported bit over the next five to seven years?

For many older environments, multimode still looks attractive because the cabling is already in place. If an existing plant is built on OM3 or OM4, and most links stay under 70 to 100 meters, reusing that infrastructure can avoid major construction costs. That is often the strongest financial case for multimode. The savings come less from the fiber itself and more from preserving sunk investment in trunks, cassettes, and operational workflows. In that setting, multimode can remain a practical choice for modest upgrade cycles.

The challenge appears when upgrade plans extend beyond the current refresh. Single-mode cable is often cheaper per meter than premium multimode grades, and duplex single-mode links can avoid some of the hardware complexity tied to parallel optics. At higher speeds, the market volume behind short-reach single-mode optics has also improved their cost position. In large deployments, this can make single-mode competitive on a $/Gbps basis, especially when it supports a cleaner migration to 400G and 800G without another recabling project. That is why many teams evaluating how to choose single-mode vs multimode now focus on lifecycle cost, not just day-one spend.

Power also changes the equation. A few watts per transceiver may seem minor, but across thousands of switch ports, that difference becomes a real cooling and energy expense. Very short-reach multimode optics can still be efficient, while longer-reach optical variants generally consume more power. Yet not all single-mode options carry the same burden. Shorter-reach designs usually draw less power than extended-reach ones, which means a standardized single-mode strategy can still be efficient if the reach class matches the actual distance.
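To see how "a few watts per transceiver" scales, the arithmetic can be written out. This is a rough sketch: the function name is made up for illustration, and the PUE value and electricity price are placeholder assumptions to replace with your site's own numbers.

```python
HOURS_PER_YEAR = 8760


def annual_energy_cost(watts_per_module, module_count, usd_per_kwh, pue=1.5):
    """Yearly energy cost of a set of transceivers, including facility overhead.

    pue (power usage effectiveness) scales IT power up to total facility
    power; 1.5 is a placeholder, not a measured value.
    """
    it_kwh = watts_per_module * module_count * HOURS_PER_YEAR / 1000
    return it_kwh * pue * usd_per_kwh
```

Under these assumptions, a 2 W difference across 4,000 modules at $0.10 per kWh and a PUE of 1.5 works out to roughly $10,500 per year (`annual_energy_cost(2, 4000, 0.10)`).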

The most useful TCO question is not which fiber is cheaper in isolation. It is whether the chosen media reduces future module diversity, avoids stranded cabling, and supports the breakout model you expect to use. If a data center will stay compact and heavily invested in existing multimode infrastructure, multimode may win financially. If the roadmap points to broader 400G or 800G adoption, single-mode often wins by reducing future disruption, simplifying migration, and limiting the need to pay twice for the same upgrade.
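One way to make that comparison concrete is a small lifecycle model. The sketch below is deliberately simplified and every field is an assumption you supply yourself: it counts day-one cabling, two transceivers per link, energy over the planning period, and an optional later recabling project, then divides by delivered capacity. It ignores labor, spares, discounting, and downtime.

```python
from dataclasses import dataclass


@dataclass
class MediaScenario:
    """Inputs for one cabling option. All values are user-supplied estimates."""
    name: str
    link_count: int
    gbps_per_link: int
    cabling_capex: float           # day-one cabling spend (0 if the plant is reused)
    optic_price: float             # price per transceiver
    annual_energy_cost: float      # yearly energy cost attributed to the optics
    future_recabling: float = 0.0  # expected cost of a later recabling project


def lifecycle_cost(scenario, years):
    """Total cost over the planning period, with one transceiver per link end."""
    optics = 2 * scenario.optic_price * scenario.link_count
    energy = scenario.annual_energy_cost * years
    return scenario.cabling_capex + optics + energy + scenario.future_recabling


def cost_per_gbps(scenario, years):
    """Lifecycle cost divided by total link capacity in Gbps."""
    capacity_gbps = scenario.link_count * scenario.gbps_per_link
    return lifecycle_cost(scenario, years) / capacity_gbps
```

Running the same link count through a "reuse multimode now, recable later" scenario and a "install OS2 now" scenario shows how sensitive the result is to the `future_recabling` estimate, which is usually the least certain input.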

How Cabling Topology, Connector Choices, and Breakout Paths Drive Single-Mode vs Multimode Data Center Upgrades

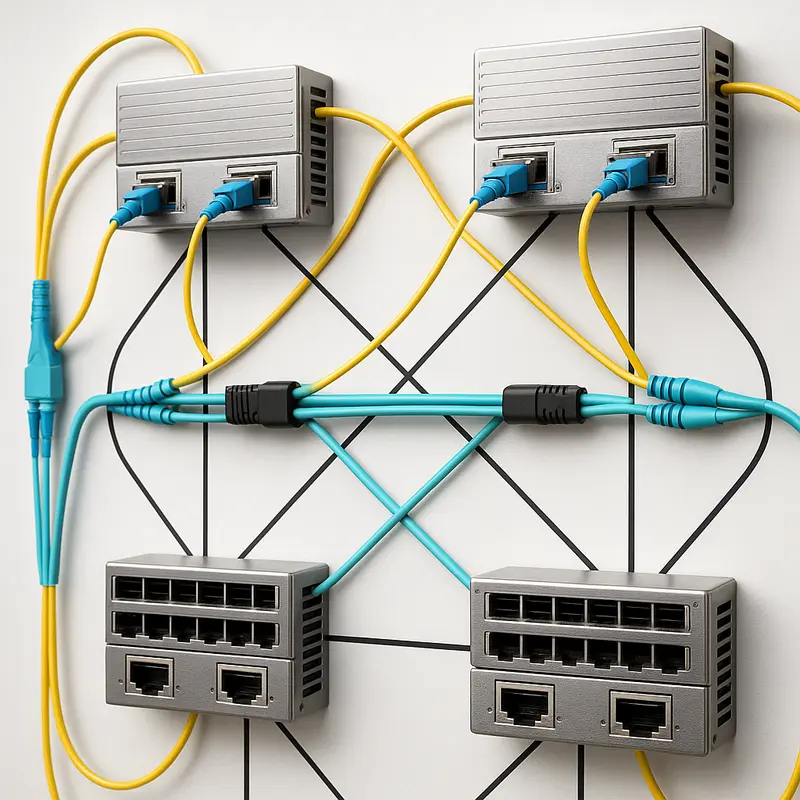

The physical layer often decides the upgrade path before optics pricing does. In data centers, the choice between single-mode and multimode is shaped by how links are actually routed, patched, and split. A fabric with mostly short, structured runs and existing parallel trunks may still favor multimode. A design aiming for cleaner migrations to higher speeds often leans toward single-mode because the cabling map becomes simpler as lane rates rise.

Connector style is central to that trade-off. Duplex LC supports a two-fiber model that feels operationally straightforward. It aligns well with single-mode DR, FR, and LR optics, where one fiber transmits and one receives. That matters when teams want predictable patching and fewer polarity surprises. By contrast, parallel multimode designs often depend on MPO connectivity, where eight or more fibers are active in one interface. This supports dense backbone trunks and efficient cassette-based distribution, but it also demands tighter discipline around polarity, keying, and fiber mapping.

That is why topology matters as much as media type. If the plant was built around MPO trunks and cassette workflows, multimode can remain attractive for short leaf-to-spine links, especially where distances stay under 100 meters. Existing OM3 or OM4 pathways can support straightforward 40G and 100G migrations without reworking the structured cabling model. But once link lengths push beyond that comfort zone, or when the upgrade plan includes broad 400G and 800G adoption, single-mode gains an edge because it reduces dependence on parallel optics for many common links.

Breakout design makes this even clearer. Multimode has long been effective for splitting a higher-speed uplink into several lower-speed server-facing links, especially in established short-reach environments. Yet current migration paths increasingly reward single-mode layouts. A 400G parallel single-mode link can break cleanly into four 100G links, preserving a logical upgrade path while keeping the long-term plant aligned with future speeds. That flexibility is one reason many planners now standardize new trunks on OS2 even when some present-day distances are modest. For a broader planning view, see how to choose single-mode vs multimode.
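The breakout arithmetic can be sketched in a few lines. This example assumes optics built on 100G lanes, as in the 400G to 4x100G case above, and uses a made-up port naming scheme (`Eth1/1/1`, `Eth1/1/2`, and so on). Real switch platforms name breakout ports and number lanes in their own ways.

```python
LANE_GBPS = 100  # assumes 100G-per-lane optics


def breakout(parent_port, parent_gbps, child_gbps):
    """Split a parent port into equal child ports and assign lanes to each.

    Port names and zero-based lane numbers are hypothetical illustrations.
    """
    if parent_gbps % LANE_GBPS or child_gbps % LANE_GBPS:
        raise ValueError("speeds must be whole multiples of the lane rate")
    if parent_gbps % child_gbps:
        raise ValueError("child speed must divide the parent speed evenly")

    lanes_per_child = child_gbps // LANE_GBPS
    children = []
    for index in range(parent_gbps // child_gbps):
        first_lane = index * lanes_per_child
        children.append({
            "port": f"{parent_port}/{index + 1}",
            "gbps": child_gbps,
            "lanes": list(range(first_lane, first_lane + lanes_per_child)),
        })
    return children
```

Calling `breakout("Eth1/1", 400, 100)` yields four single-lane 100G child ports. The same function rejects combinations that do not divide evenly, such as 400G into 300G.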

The practical implication is simple. Choose the topology first, then the optics family that best fits it. If your environment depends on dense MPO-based short-reach distribution and you already own the multimode plant, preserving that architecture can be sensible. If you want a flatter migration path, more duplex links, and fewer cabling constraints as speeds climb, single-mode usually creates the cleaner foundation. That physical choice then flows directly into testing, cleaning, polarity control, and day-two operations.

Operational Reality Check: Reliability, Testing, and Day-2 Discipline in Choosing Single-Mode vs Multimode for Data Center Upgrades

The media decision does not end at reach, port speed, or breakout design. It becomes real during turn-up, fault isolation, and long-term maintenance. That is where single-mode and multimode reveal different operational demands. In many upgrades, the better choice is the one your team can test, clean, document, and restore quickly under pressure.

Multimode often feels familiar in brownfield environments because teams already know short-reach optics, MPO trunks, and cassette-based patching. That familiarity matters, but MMF also concentrates risk in polarity errors, cassette mapping mistakes, and contaminated multi-fiber end faces. One dirty ferrule can disrupt several lanes at once. Single-mode, especially with duplex links, can simplify day-2 handling because each circuit is easier to trace and inspect. Yet SMF is not automatically easier. Reflection sensitivity is higher, link budgets must be respected carefully, and longer-reach circuits demand stricter connector quality.

Testing discipline therefore matters more than the fiber label. A basic acceptance workflow should always include end-face inspection, insertion-loss testing, polarity verification, and clear documentation of patch paths. For parallel links, that means using the right multi-fiber test method rather than assuming all lanes behave the same. For duplex links, it means validating both fibers, not just confirming light is present. Tier 1 loss testing with a light source and power meter is usually enough for short in-building runs, while OTDR becomes more useful on longer single-mode links where locating events has real operational value. The practical lesson is simple: choose the medium that matches the tools and habits your operations team already executes well.
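An insertion-loss check compares what was measured against what the channel should lose. The sketch below estimates expected loss from fiber length, connector pairs, and splices, then flags individual fibers that exceed a limit so that no lane in a parallel link is assumed good. The per-connector and per-splice defaults are placeholder assumptions. Use the allowances from your cabling standard and the budget from the transceiver datasheet.

```python
def estimated_link_loss_db(length_m, fiber_db_per_km, connector_pairs,
                           splices=0, db_per_connector=0.5, db_per_splice=0.1):
    """Expected channel loss in dB. The default allowances are placeholders."""
    fiber_loss = length_m / 1000 * fiber_db_per_km
    return (fiber_loss
            + connector_pairs * db_per_connector
            + splices * db_per_splice)


def failing_fibers(measured_db_by_fiber, limit_db):
    """Return the fiber positions whose measured loss exceeds the limit.

    measured_db_by_fiber maps a fiber position (for example 1 to 12 on an
    MPO trunk) to its measured insertion loss in dB.
    """
    return sorted(position for position, loss_db in measured_db_by_fiber.items()
                  if loss_db > limit_db)
```

For a duplex link the measurement map has two entries, and for a parallel link it has one entry per active fiber. Either way, a single failing position is enough to hold back turn-up.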

Reliability also shifts with the optics generation. Older NRZ links were often judged by raw BER expectations alone. Newer PAM4 links depend on forward error correction, so operations teams must watch pre-FEC error rates and correction trends, not just link-up status. A link can remain live while margin is quietly collapsing. This makes proactive monitoring essential, especially at 100G, 400G, and beyond. Teams planning dense, latency-aware fabrics should also consider how FEC, DSP behavior, and thermal load affect failure domains, a point closely related to low-latency AI interconnect design.
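A monitoring check for this can be quite small. The sketch below assumes the platform exposes a corrected-bit count and a total-bit count per interval. Counter names and units vary by vendor, and some platforms report corrected symbols or codewords instead. The `2.4e-4` limit is the pre-FEC BER figure commonly cited for RS(544,514) "KP4" FEC and is included here as an assumption to verify against your optics and switch documentation.

```python
import math

# Commonly cited pre-FEC BER limit for RS(544,514) FEC; verify for your platform.
KP4_PRE_FEC_BER_LIMIT = 2.4e-4


def pre_fec_ber(corrected_bits, total_bits):
    """Approximate pre-FEC BER from hypothetical per-interval counters."""
    if total_bits <= 0:
        raise ValueError("total_bits must be positive")
    return corrected_bits / total_bits


def margin_decades(ber, limit=KP4_PRE_FEC_BER_LIMIT):
    """Orders of magnitude between the observed BER and the limit."""
    if ber <= 0:
        return math.inf
    return math.log10(limit / ber)


def is_degrading(ber_samples, factor=10.0):
    """True if the newest sample is at least `factor` times the oldest one."""
    if len(ber_samples) < 2:
        return False
    oldest, newest = ber_samples[0], ber_samples[-1]
    return newest > 0 and newest >= factor * oldest
```

A link reporting a pre-FEC BER of `2.4e-7` has about three decades of margin and will look healthy. If `is_degrading` starts returning true across successive polling windows, the link deserves inspection and cleaning before the margin is gone, even though it is still up.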

In practice, the more future-ready plant is often the one with the fewest surprises. If your staff is strong with MPO cleaning, polarity control, and short-reach workflows, MMF can remain dependable for compact legacy zones. If you want simpler fault isolation, cleaner migration to duplex high-speed links, and more uniform operating procedures across mixed distances, SMF usually creates a steadier operational model. That operational steadiness becomes a strategic advantage once upgrade cycles accelerate.

Future-Proofing Your Upgrade Path: Supply Chain, Roadmap, and Strategy in Choosing Single-Mode vs Multimode

The choice between single-mode and multimode is not only about current reach or transceiver cost. It is also a bet on what your fabric must support over the next three to seven years. That is why future-proofing matters most when speeds move from 100G to 400G, then toward 800G and 1.6T. At those higher rates, the industry is clearly concentrating its volume, design energy, and migration patterns around single-mode DR and FR optics. This does not make multimode obsolete, but it does change the risk profile.

If your data center already has a healthy OM3 or OM4 base, multimode can still be the right tactical choice for short links. That is especially true when most paths stay under 70 to 100 meters and the upgrade target is limited to 100G. In that case, reusing existing trunks, cassettes, and operating practices can preserve capital and avoid disruption. The strategic problem appears when the roadmap extends beyond that comfort zone. At 400G, multimode options exist, but the ecosystem is narrower, the cabling can be less flexible, and migration choices become more dependent on specific module types and fiber counts.

Single-mode shifts that equation. OS2 gives far more distance headroom than most data centers need, but the real advantage is standardization. A facility can use the same media type for short leaf-spine links, pod interconnects, and longer campus runs. That reduces redesign later. It also aligns with popular breakout models such as 400G to 4x100G and future 800G to 8x100G or 2x400G. If your planning horizon includes broad 400G deployment or a likely move to 800G, single-mode usually lowers the chance of recabling.

Supply chain strategy reinforces this point. The strongest market pull is behind single-mode DR-class optics, largely because high-volume operators have standardized there. Higher volume usually means better availability, more second-source options, and more stable cost per gigabit. Multimode parts remain widely available, but some advanced short-reach variants can be more niche. That matters during long lead-time cycles, silicon shortages, or connector supply constraints. A media decision is therefore also a procurement decision.

This is where planning should connect technical goals with sourcing discipline. Define the link-length distribution first. Then map it to a realistic upgrade path, not an idealized one. A brownfield site may keep multimode at the edge while shifting all new trunks to OS2. A greenfield build often gains more by standardizing early. If you want a broader framework for that trade-off, this guide to choosing single-mode vs multimode offers a useful companion view. The best strategy is rarely ideological. It is the one that keeps options open without forcing expensive change twice.
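Defining the link-length distribution can start from a plain list of measured or documented lengths. The sketch below reports the share of links at or under a few thresholds. The defaults of 70 m, 100 m, and 500 m mirror the reach figures discussed in this article, and the function name is just for illustration.

```python
def length_profile(lengths_m, thresholds_m=(70, 100, 500)):
    """Return {threshold_m: share of links at or under that length}."""
    if not lengths_m:
        raise ValueError("at least one link length is required")
    total = len(lengths_m)
    return {
        threshold: sum(1 for length in lengths_m if length <= threshold) / total
        for threshold in thresholds_m
    }
```

If only half of the links fall at or under 100 m, as in a made-up sample such as `[20, 40, 60, 80, 95, 120, 150, 300, 450, 900]`, then reusing multimode for 100G covers only part of the plant and the remainder needs a single-mode plan regardless.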

Final thoughts

Choosing between single-mode and multimode fiber is critical for data center performance, cost efficiency, and scalability. By understanding the unique benefits and constraints of each, network decision-makers can align fiber strategies with both immediate performance needs and long-term infrastructure goals. MMF excels in low-cost, short-reach environments, leveraging existing infrastructure, while SMF supports broader scalability and higher speeds across longer distances. A hybrid approach often makes sense, balancing operational simplicity with emerging performance demands. Leverage these insights to build a resilient and future-ready data center network.

Talk to ABPTEL about high-speed optics, MTP/MPO cabling, and data center interconnect solutions.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides high-speed optical transceivers, MTP/MPO cabling systems, DAC and AOC cables, PoE switches, FTTA solutions, and fiber tools for data center, AI, telecom, and network infrastructure projects.