Choosing between single-mode fiber (SMF) and multimode fiber (MMF) for a data center upgrade is a foundational decision that affects network scalability, performance, and long-term cost. As port speeds climb, operators must balance legacy infrastructure, greenfield builds, and future migration paths. This article gives data center engineers, AI planners, and IT managers a framework for making that choice. It covers reach, bandwidth, and power, then works through cost, cabling architecture, risk management, and sustainability so that your next upgrade meets current needs while preparing for future demands.

Choosing by Distance and Speed: How Performance and Reach Shape the Single-Mode vs Multimode Decision

When teams evaluate a fiber upgrade, performance usually starts with a simple question: how far must each link run at the speeds you plan to deploy next? That question quickly separates multimode and single-mode designs. Multimode fiber remains effective for very short links, especially in dense racks and rows where distances stay well below 100 meters. Its larger core and short-reach optics have long made it a practical fit for 10G, 40G, and many 100G deployments. In a brownfield environment with healthy OM4 plant, that can still be true.

The challenge appears as port speeds rise. At 100G and above, multimode reach tightens because modal dispersion becomes harder to manage. Typical short-reach multimode links top out around 70 meters on OM3 and about 100 meters on OM4 at 100G or 400G, and only when channel loss is carefully controlled. That may sound adequate on paper, yet many modern leaf-spine fabrics include row-to-row and cross-hall paths that regularly push past those limits. A design that works for today’s rack layout can become restrictive after even a modest floor reconfiguration.

Single-mode changes that planning equation. Because it supports only one propagation mode, it avoids the modal dispersion ceiling that constrains multimode at higher rates. In practical data center terms, that means 100G and 400G short-reach single-mode links (DR-class optics) commonly cover 500 meters, while duplex FR-class variants extend to 2 kilometers without changing the basic cabling plant. That extra headroom matters even when your current average link is short. It gives operators freedom to add new pods, stretch across larger halls, or connect adjacent buildings without redesigning the physical layer.

Performance is not only about raw reach. It also includes lane structure, breakout flexibility, and how cleanly a cabling system supports the next upgrade cycle. Multimode often relies on parallel optics and higher fiber counts, which can work well inside compact zones. Single-mode increasingly supports high speeds over fewer fibers, including duplex paths for longer short-reach links. As speeds move from 400G toward 800G, that simpler scaling path becomes more attractive.

Latency differences between the two are usually minor inside a facility. Fiber propagation delay is low over these distances, and module processing overhead is modest relative to application latency. For most upgrade decisions, reach margin and migration flexibility matter more than tiny latency differences. If your architecture also supports AI clusters or east-west traffic growth, those constraints become even more visible, as discussed in this guide to low-latency AI interconnect.
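To put rough numbers on the propagation delay mentioned above, the sketch below estimates one-way delay from link length. The group index of 1.47 is an assumed typical value for silica fiber rather than a figure from this article, and the function name is illustrative. Module and switch processing delay is deliberately left out.

```python
# Approximate one-way propagation delay in optical fiber.
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in vacuum
GROUP_INDEX = 1.47  # ASSUMPTION: typical silica fiber; check the cable datasheet


def propagation_delay_ns(length_m: float, group_index: float = GROUP_INDEX) -> float:
    """Return one-way delay in nanoseconds for a fiber run of length_m meters."""
    if length_m < 0:
        raise ValueError("length_m must be non-negative")
    return length_m * group_index / SPEED_OF_LIGHT_M_PER_S * 1e9


if __name__ == "__main__":
    for length in (30, 100, 500, 2000):
        print(f"{length:>5} m -> {propagation_delay_ns(length):8.1f} ns")
```

Under that assumption the delay works out to roughly 5 nanoseconds per meter for either fiber type, which is why reach margin tends to dominate the decision.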

In practice, the strongest rule is straightforward: choose multimode when links are short, stable, and already well served by installed plant; choose single-mode when distance uncertainty, higher speeds, or future topology changes are likely to define the next phase of the data center.
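The rule above can be sketched as a small helper that screens a link inventory. This is a planning aid under stated assumptions, not a substitute for optic datasheets: the reach figures are the round numbers used in this article, the mapping of 70 m to OM3 and 100 m to OM4 is an assumption based on common short-reach ratings, and the `Link` fields, the `recommend` function, and the 80% margin are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# ASSUMPTION: round planning figures, not values from a specific optic datasheet.
MMF_REACH_M = {"OM3": 70, "OM4": 100}  # short-reach multimode at 100G/400G
SMF_500M = 500    # short-reach single-mode class
SMF_2KM = 2000    # longer duplex single-mode class


@dataclass
class Link:
    name: str
    length_m: float
    installed_mmf: Optional[str] = None  # "OM3", "OM4", or None if no usable plant
    layout_stable: bool = True           # False if the path may be extended or re-routed


def recommend(link: Link, mmf_margin: float = 0.8) -> str:
    """Suggest a fiber class for one link.

    Multimode is suggested only when healthy plant exists, the layout is
    stable, and the run fits inside the derated multimode reach.
    """
    mmf_reach = MMF_REACH_M.get(link.installed_mmf or "")
    if (
        mmf_reach is not None
        and link.layout_stable
        and link.length_m <= mmf_reach * mmf_margin
    ):
        return "multimode"
    if link.length_m <= SMF_500M:
        return "single-mode-500m"
    if link.length_m <= SMF_2KM:
        return "single-mode-2km"
    return "out-of-scope"


if __name__ == "__main__":
    inventory = [
        Link("rack-to-leaf", 40, "OM4"),
        Link("row-to-row", 90, "OM4"),
        Link("cross-hall", 350),
        Link("building-to-building", 1200),
    ]
    for link in inventory:
        print(f"{link.name:<22} {link.length_m:>6.0f} m -> {recommend(link)}")
```

The margin is applied only to multimode reuse because that is where the article identifies reach as the binding constraint; a stricter policy could derate the single-mode classes as well.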

Choosing Single-Mode vs Multimode by the Numbers: TCO, Power, and Long-Term Upgrade Economics

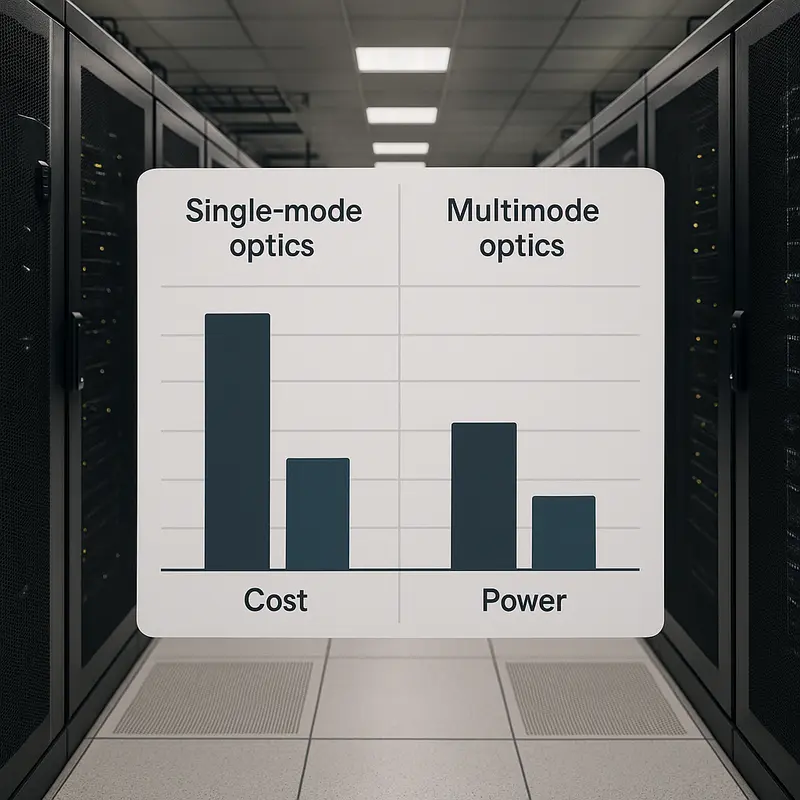

The cost debate between single-mode and multimode is no longer a simple optics price comparison. For data center upgrades, the more useful question is which option stays cheaper over the life of the network. That shifts attention from purchase price to total cost of ownership, where optics, cabling reuse, energy, cooling, and operational complexity all matter.

At lower speeds and very short distances, multimode often still looks attractive. If a facility already has a healthy OM4 plant and most links stay below 100 meters, short-reach multimode optics can preserve earlier cabling investments and avoid immediate trunk replacement. That can make brownfield upgrades financially sensible, especially when endpoint counts are high and the installed base is in good condition. But that advantage weakens as speed rises. At 200G, 400G, and 800G, the historic price gap between multimode and single-mode optics has narrowed sharply. In many designs, it disappears once you include breakout flexibility, patching density, and the cost of future rework.

Power is where the economics become more revealing. A few watts per transceiver sounds minor until it is multiplied across hundreds or thousands of ports. A 2 to 4 watt difference per optic adds 2 to 4 kilowatts across 1,000 ports, and those watts must be cooled as well as powered. That means the true operating penalty is larger than the module specification alone suggests. For teams planning dense fabrics, this also affects thermal headroom, port packing, and the practical mix of form factors that can be supported without airflow compromises. If energy efficiency is already part of your infrastructure planning, it helps to compare optics by power per transported bit, not only by unit cost. The broader impact of latency and efficiency on modern high-speed fabrics is also worth considering in discussions around low-latency AI interconnect.
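The multiplication described above is easy to sketch. The example below scales a per-optic power difference across a port count and then applies a facility overhead factor to account for cooling. The PUE of 1.5 is a hypothetical placeholder, and the function names are illustrative.

```python
HOURS_PER_YEAR = 8760


def it_power_delta_kw(ports: int, delta_w_per_optic: float) -> float:
    """Extra IT load in kW when each optic draws delta_w_per_optic more watts."""
    return ports * delta_w_per_optic / 1000


def facility_power_delta_kw(ports: int, delta_w_per_optic: float, pue: float = 1.5) -> float:
    """Extra facility load in kW, including cooling and distribution overhead.

    ASSUMPTION: pue=1.5 is a placeholder; substitute your site's measured value.
    """
    return it_power_delta_kw(ports, delta_w_per_optic) * pue


def annual_energy_kwh(power_kw: float) -> float:
    """Energy used in one year by a constant load of power_kw."""
    return power_kw * HOURS_PER_YEAR


if __name__ == "__main__":
    for delta_w in (2, 4):
        it_kw = it_power_delta_kw(1000, delta_w)
        site_kw = facility_power_delta_kw(1000, delta_w)
        print(f"{delta_w} W x 1000 ports: {it_kw:.1f} kW IT, "
              f"{site_kw:.1f} kW facility, {annual_energy_kwh(site_kw):,.0f} kWh/year")
```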

Cabling economics complicate the picture further. Single-mode cable is often cheaper per meter than premium multimode, and it usually consumes less pathway space. More importantly, single-mode can reduce fiber counts at higher speeds, especially when duplex links replace parallel multimode schemes. Fewer fibers mean simpler patch fields, fewer touchpoints during moves and changes, and lower risk of polarity or connector errors. Those operational savings rarely appear in initial bid comparisons, but they show up later in troubleshooting time, MAC labor, and avoided outages.

That is why the best financial answer is often hybrid rather than absolute. Keep multimode where links are short, static, and already well supported by existing plant. Shift to single-mode where power, density, longer reach, or 400G-plus migration makes today’s savings look temporary. In upgrade planning, the cheaper link is not the one with the lowest invoice. It is the one least likely to force a second rebuild.
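One way to make that comparison concrete is a simple lifetime-cost model. The sketch below adds optics and cabling capex to energy cost over a planning horizon. Every input is a placeholder to be replaced with your own quotes and site data, the `LinkOption` fields and `lifetime_cost` function are hypothetical, and the model deliberately omits rework and moves-adds-changes labor, which the article argues can decide the outcome.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class LinkOption:
    label: str
    optic_count: int        # transceivers to buy (two per link)
    optic_price: float      # price per transceiver, any currency
    cabling_cost: float     # new trunks, cassettes, patch cords; 0 if plant is reused
    watts_per_optic: float  # typical module power draw


def lifetime_cost(option: LinkOption, years: float, price_per_kwh: float,
                  pue: float = 1.5) -> float:
    """Capex plus energy cost over `years`. ASSUMPTION: constant load and tariff."""
    capex = option.optic_count * option.optic_price + option.cabling_cost
    load_kw = option.optic_count * option.watts_per_optic / 1000
    energy_cost = load_kw * HOURS_PER_YEAR * years * pue * price_per_kwh
    return capex + energy_cost


if __name__ == "__main__":
    # PLACEHOLDER inputs for illustration only; they are not market prices.
    options = [
        LinkOption("reuse multimode plant", 200, 300.0, 0.0, 8.0),
        LinkOption("new single-mode plant", 200, 350.0, 15000.0, 9.0),
    ]
    for opt in options:
        total = lifetime_cost(opt, years=7, price_per_kwh=0.12)
        print(f"{opt.label:<24} {total:>12,.0f}")
```

Adding a line item for an expected second recable is a natural extension, since that is the cost the paragraph above warns about.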

Designing a Migration Path That Fits: Cabling Architecture Choices for Single-Mode vs Multimode Upgrades

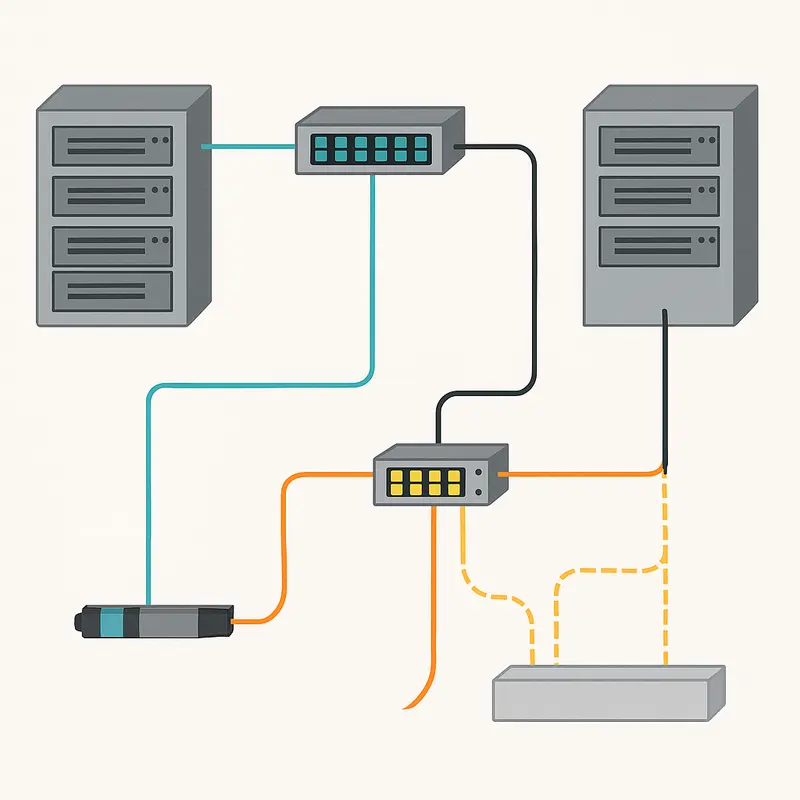

The cabling plant determines how gracefully a data center can move from current speeds to the next two or three generations. That is why the single-mode versus multimode decision should never be made only at the transceiver level. It must be made at the architecture level, where trunk design, connector strategy, breakout plans, and link-length distribution all shape what upgrades will cost and how disruptive they will become.

A multimode-centered architecture still makes sense in brownfield spaces with strong OM4 coverage, clean low-loss paths, and short, predictable runs. If most links stay under 70 to 100 meters at higher speeds, existing trunks can often support a practical progression from older short-reach links to newer parallel-optic designs. The advantage is clear: reuse what is already installed, avoid a full recable, and keep migration focused on endpoints. But that path narrows as speeds rise. Parallel multimode links often need 8 or 16 active fibers, which increases tray fill, patching density, and the chance of polarity mistakes during moves or reconfiguration. Once row-to-row distances begin creeping upward, reach margins can disappear quickly.
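The effect of parallel optics on fiber count can be sketched with simple arithmetic. The example compares active fibers for the same number of links under duplex, 8-fiber, and 16-fiber schemes; the scheme names and the 128-link example are illustrative, and unused positions inside a connector are not counted.

```python
# Active fibers per link for each cabling scheme discussed in the text.
FIBERS_PER_LINK = {
    "duplex": 2,        # one transmit fiber, one receive fiber
    "parallel-8": 8,    # four lanes in each direction
    "parallel-16": 16,  # eight lanes in each direction
}


def active_fibers(links: int, scheme: str) -> int:
    """Total active fibers needed to carry `links` links under `scheme`."""
    if links < 0:
        raise ValueError("links must be non-negative")
    return links * FIBERS_PER_LINK[scheme]


if __name__ == "__main__":
    links = 128  # hypothetical number of links in one row
    for scheme in FIBERS_PER_LINK:
        print(f"{scheme:<12} {active_fibers(links, scheme):>5} active fibers")
```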

A single-mode-centered architecture offers a different kind of flexibility. It trades some historical familiarity for a cleaner long-term roadmap. With duplex links for many higher-speed applications and 500-meter to 2-kilometer reach options, single-mode reduces the odds that a future fabric redesign will strand installed cabling. That matters in large halls, phased expansions, and campus-style layouts where today’s short link can become tomorrow’s cross-hall uplink. Bend-insensitive single-mode fiber also helps in dense routing environments, where tight pathways and repeated handling can otherwise add avoidable loss.

The best migration strategy is often hybrid, not absolute. Preserve multimode where it is already paid for, performs well, and sits comfortably within projected reach limits. Overlay single-mode where distances are less predictable, where 400G and 800G adoption is likely, or where lower fiber counts simplify operations. In practice, that usually means keeping multimode inside dense short-reach pods while standardizing new aggregation, inter-row, and cross-building routes on single-mode. This approach limits stranded assets without forcing every segment onto the same timeline.

Execution matters as much as design. MPO polarity, cassette count, connector cleanliness, and insertion-loss discipline can decide whether a migration feels routine or fragile. Teams planning large refreshes should treat documentation and testing as part of the architecture itself, not as an afterthought. For environments where latency-sensitive AI fabrics are shaping topology choices, it also helps to review broader low-latency AI interconnect considerations when aligning cabling with future traffic patterns.
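The insertion-loss discipline mentioned above usually comes down to a loss-budget check: fiber attenuation plus connector and splice losses must stay under what the optic allows. The sketch below shows the arithmetic. All numeric inputs in the example are placeholders, not values from this article; take real limits from the optic specification and your component datasheets.

```python
def link_loss_db(length_m: float, fiber_db_per_km: float,
                 mated_pairs: int, db_per_pair: float,
                 splices: int = 0, db_per_splice: float = 0.0) -> float:
    """Estimated end-to-end insertion loss of a passive fiber channel in dB."""
    fiber_loss = length_m / 1000 * fiber_db_per_km
    connector_loss = mated_pairs * db_per_pair
    splice_loss = splices * db_per_splice
    return fiber_loss + connector_loss + splice_loss


def margin_db(budget_db: float, loss_db: float) -> float:
    """Remaining headroom; a negative result means the channel exceeds the budget."""
    return budget_db - loss_db


if __name__ == "__main__":
    budget = 1.9  # PLACEHOLDER: allowed channel insertion loss for the chosen optic
    for pairs in (2, 4, 6):
        # PLACEHOLDERS: 90 m run, 3.0 dB/km fiber, 0.35 dB per mated connector pair
        loss = link_loss_db(90, 3.0, pairs, 0.35)
        print(f"{pairs} mated pairs: loss {loss:.2f} dB, "
              f"margin {margin_db(budget, loss):+.2f} dB")
```

With these placeholder values, connectors rather than fiber length dominate the loss, which is why cassette count matters so much on short links.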

Choosing Single-Mode vs Multimode with Confidence: Standards, Supply Chain, and Upgrade Risk

A data center fiber decision is never just a reach calculation. Once cabling architecture is defined, the harder question is whether that choice will stay viable through the next refresh cycle. This is where standards maturity, supplier depth, and roadmap risk matter as much as optics pricing. A link that works today can still become expensive tomorrow if it depends on a narrow vendor ecosystem, a premium module class, or a fiber type that limits future migration options.

For that reason, standards-based interoperability should carry real weight in any single-mode versus multimode decision. Single-mode short-reach optics now benefit from strong industry momentum at 100G, 400G, and 800G. That matters because broad adoption usually improves availability, increases price competition, and reduces the chance of being locked into a small pool of compatible parts. Multimode remains well established for short links, especially where existing OM4 plants are already deployed. Yet some higher-speed multimode options rely on more specialized approaches, which can narrow sourcing flexibility and introduce premium costs. In practice, the safer long-term path is often the one supported by the widest standards base at your target speed, not the one with the lowest quote this quarter.

Supply chain resilience also deserves a place in the design review. Multimode and single-mode ecosystems depend on different optical components, packaging methods, and manufacturing capacity. If one component family tightens, lead times can shift quickly. That is why operators increasingly qualify at least two vendors per optic type and avoid depending on niche form factors or limited-availability reaches. This issue is not unique to data centers; broader fiber markets show the same pattern of price swings and sourcing pressure, as seen in global fiber optic cable supply and market dynamics. The lesson is simple: resilient infrastructure choices come from ecosystem depth, not assumptions about permanent cost advantage.

Risk also appears inside the facility. Parallel multimode links can perform very well, but they add more fibers, more polarity dependencies, and more chances for patching mistakes. Single-mode duplex links can reduce that operational exposure, even when module power is somewhat higher. At the same time, single-mode links demand strict cleanliness and disciplined handling because smaller cores are less forgiving. So the real comparison is not simple versus complex. It is one type of operational risk versus another.

The most informed choice usually combines technical fit with procurement realism. If your upgrade path depends on 400G and beyond, favor the option with broader standards support, deeper supplier competition, and less chance of stranded infrastructure. That risk lens naturally leads into long-term concerns around energy, maintenance discipline, and day-two reliability.

Choosing Single-Mode vs Multimode for Long-Term Data Center Outcomes

The final choice between single-mode and multimode is rarely settled by reach alone. It is settled by what that choice will cost to operate, how reliably it can be maintained, and whether it still makes sense after the next upgrade cycle. That is why long-term outcomes matter as much as initial deployment budgets.

From a sustainability perspective, optics power and cable longevity deserve close attention. At lower speeds and very short distances, multimode can still be efficient. Short-reach multimode modules often consume less power than their single-mode counterparts, which helps in dense rows where every watt adds heat. But as networks move to 400G and 800G, that advantage becomes less decisive. Single-mode links increasingly offer better upgrade continuity, especially when the same trunk plant can support several future speed generations. Reusing fiber across multiple refresh cycles reduces waste, limits replacement labor, and lowers the material impact of repeated rebuilds. In large facilities, even a small per-port power difference can scale into a meaningful cooling burden, so evaluating energy per transmitted bit is more useful than focusing on module price alone.
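Energy per transmitted bit is straightforward to compute from a module's power draw and line rate: one watt at one gigabit per second equals 1,000 picojoules per bit. The sketch below applies that conversion. The wattages in the example are hypothetical and do not describe any particular product.

```python
def picojoules_per_bit(module_watts: float, line_rate_gbps: float) -> float:
    """Energy per transmitted bit in pJ, given module power and line rate.

    1 W / 1 Gb/s = 1e-9 J per bit = 1000 pJ per bit.
    """
    if line_rate_gbps <= 0:
        raise ValueError("line_rate_gbps must be positive")
    return module_watts * 1000 / line_rate_gbps


if __name__ == "__main__":
    # PLACEHOLDER power figures for illustration only.
    candidates = [
        ("100G module", 4.0, 100),
        ("400G module", 10.0, 400),
        ("800G module", 16.0, 800),
    ]
    for label, watts, gbps in candidates:
        print(f"{label:<12} {watts:>5.1f} W -> {picojoules_per_bit(watts, gbps):5.1f} pJ/bit")
```

Comparing options on this basis shows whether a higher-power module is actually less efficient once its higher throughput is taken into account.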

Operationally, the comparison becomes even more practical. Multimode environments often rely on parallel optics and higher fiber counts. That can work well in disciplined plants, but it also creates more patching density, more polarity management, and more chances for mistakes during moves, adds, and changes. Single-mode designs, especially duplex architectures at higher rates, can simplify cable pathways and reduce touchpoints. Fewer fibers per link often means cleaner documentation, easier tracing, and less congestion in trays and panels. For teams planning denser AI or east-west fabrics, that operational simplicity can be as valuable as raw reach. It also ties into the thermal and latency concerns discussed in low-latency AI interconnect design.

Reliability ultimately depends less on marketing labels and more on discipline in the physical layer. Both fiber types demand strict inspection, cleaning, and loss-budget control. Single-mode cores are less forgiving of contamination, while multimode parallel systems are less forgiving of polarity or connector-count mistakes. Bend-insensitive cable helps in either case, but it does not replace process control. The teams that achieve the best uptime usually standardize connector practices, limit unnecessary cassettes, qualify links against realistic loss targets, and keep detailed plant records.

This is why many upgrade strategies become hybrid by design. Multimode remains useful where links are short, stable, and already supported by good installed plant. Single-mode becomes the safer long-term default where growth, reconfiguration, and higher speeds are expected. The better decision is the one that minimizes future rework while keeping daily operations predictable.

Final thoughts

Successfully upgrading your data center requires balancing short-term needs with long-term goals. By understanding the performance, TCO, and migration pathways of single-mode and multimode fiber, you can create an adaptive infrastructure that evolves with technology trends. Choosing the right fiber type ensures not only operational efficiency and scalability but also sustainability and reliability over time. Whether leveraging legacy MMF architecture for short links or transitioning to SMF to embrace higher speeds and longer reach, aligning your strategy with your roadmap and workload requirements is key.

Talk to ABPTEL today to optimize your data center network with high-speed optics, innovative cabling, and scalable interconnect solutions.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides high-speed optical transceivers, cutting-edge MTP/MPO cabling systems, direct attach and active optical cables (DAC and AOC), PoE switches, FTTA solutions, and precision fiber tools. Our end-to-end product portfolio serves data centers, AI infrastructure, telecom networks, and IT projects, ensuring high-performance connectivity and seamless scalability tailored to your infrastructure needs.