AI workloads are revolutionizing data center optical connectivity, demanding unprecedented speed, efficiency, and latency performance. As distributed training scales across tens of thousands of accelerators, optical technologies must evolve to address massive east-west traffic while balancing energy efficiency and cost. Underlying standards like IEEE 802.3df and 802.3dj drive advancements in 800G to 1.6T speeds, reshaping connectivity strategies. This transformation impacts network topologies, PHY choices, cabling, DCI technologies, and sustainability considerations, making it essential for architects and engineers to navigate these developments. Each chapter of this article delves into the major aspects of this shift: from the evolution of optical standards to the economics of scaling AI-ready fabrics, helping stakeholders build robust and future-ready infrastructures.

From 400G to 1.6T: The Standards and Speed Roadmap Reshaping AI Data Center Optics

AI networking is pushing optical connectivity into a new standards cycle, where speed increases are no longer simple upgrades. They are architectural responses to distributed training behavior. As clusters scale from hundreds to thousands of accelerators, 100G and 200G links quickly become constraints. That is why the market has moved rapidly through 400G and is now standardizing 800G, while preparing for 1.6T. The shift is tightly linked to how AI workloads consume bandwidth: bursty east–west traffic, strict synchronization windows, and low tolerance for jitter.

The technical foundation of this transition is the move from NRZ signaling to PAM4, first at 50G per lane, then 100G and 112G, and now toward 200G and 224G electrical and optical lanes. PAM4 doubles throughput per symbol, but it does so with tighter signal margins. That trade forces heavier reliance on forward error correction and signal conditioning. In practical terms, every jump in port speed is also a negotiation among reach, power, thermal headroom, and latency. For AI fabrics, that matters because optical efficiency cannot come at the cost of excessive delay across multiple switch hops.

This is where the current standards roadmap becomes especially important. Earlier Ethernet generations defined the 200G and 400G building blocks now common in large clusters. The newer work on 800G and 1.6T formalizes the next stage, including 100G- and 200G-per-lane optical interfaces, short-reach and mid-reach single-mode options, and higher-speed electrical I/O. Those definitions are not abstract. They shape switch radix, breakout strategies, cable plant choices, and what operators can deploy at scale over the next two years. For readers comparing current module classes, this overview of 400G vs 800G transceivers helps frame why 800G is becoming the default step for AI clusters rather than a niche premium tier.

Form factor choices also reveal how standards are bending around AI requirements. At 400G, the leading pluggable formats could coexist comfortably. At 800G, thermal density becomes far more decisive, so designs with more cooling headroom are often favored. At 1.6T, the challenge grows again because higher lane counts and 224G-class electrical interfaces push faceplate density, connector design, and heat removal toward their limits.

The result is that speed leadership in AI optical connectivity is no longer just about headline bandwidth. It is about which standards can deliver higher port density, manageable thermals, acceptable FEC overhead, and clear migration paths from today’s 800G pods to tomorrow’s 1.6T fabrics. That standards-and-speed foundation sets up the next question: how those links are organized into topologies that keep collective traffic moving efficiently.

Designing AI Optical Fabrics: Why Clos, RDMA, and Low-Diameter Networks Now Define Data Center Connectivity

As port speeds rise, the harder problem is no longer just moving more bits. It is shaping the fabric so AI jobs do not stall at synchronization points. Large training clusters generate repeated all-reduce, all-gather, and reduce-scatter bursts across thousands of accelerators. Those exchanges create severe incast, fast queue buildup, and tail-latency penalties that can stretch job completion time far more than raw bandwidth charts suggest. This is why AI is pushing data center optical connectivity toward topologies with high bisection bandwidth, predictable paths, and as few optical and switching stages as possible.

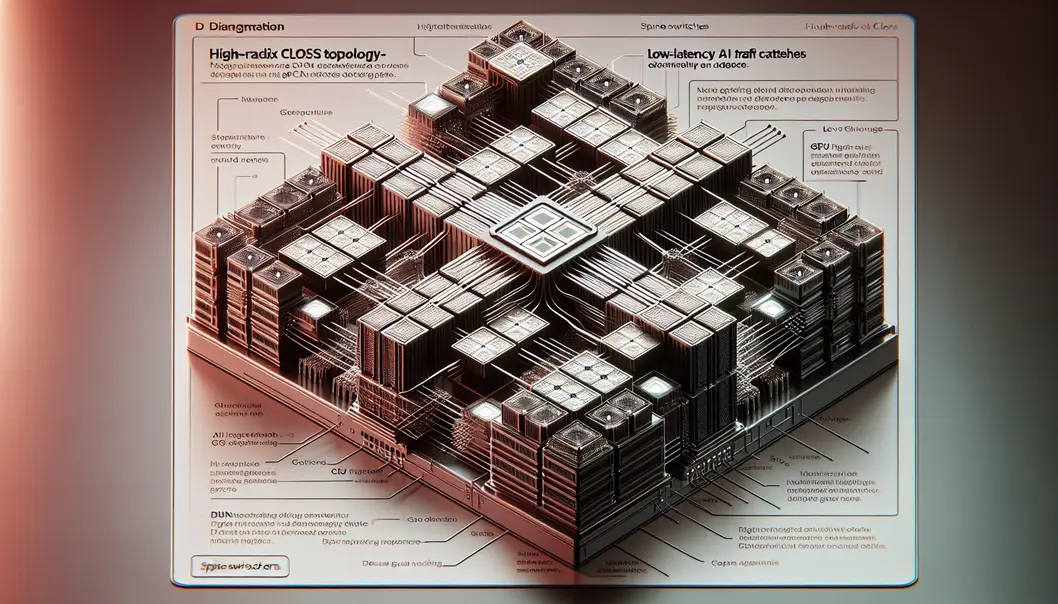

The dominant answer remains the high-radix Clos fabric, especially in two-tier designs for AI pods. Its appeal is practical as much as theoretical. Clos offers uniform pathing, operational familiarity, and a clean way to scale east-west bandwidth while keeping hop count low. In AI clusters, that low diameter matters because each extra hop adds serialization delay, FEC latency, DSP processing, and queuing risk. Even when each stage adds only nanoseconds, cumulative delay quickly eats into microsecond-level budgets. A topology that looks efficient on paper can still underperform if it amplifies jitter during collective operations.

That pressure is also reviving interest in alternatives such as dragonfly and flattened butterfly designs. Their promise is fewer hops at larger scale, with less cabling sprawl between pods. But their real value in AI settings depends on whether routing, congestion control, and failure handling stay predictable under bursty collective traffic. Many operators still prefer Clos because the software, telemetry, and troubleshooting models are mature. In other words, topology choice is now inseparable from operability.

Transport behavior matters just as much. AI fabrics increasingly rely on RDMA semantics because CPU overhead, retransmission delay, and noisy latency tails are unacceptable during tightly synchronized training steps. InfiniBand has long led here with credit-based flow control, adaptive routing, and hardware support for collective acceleration. Ethernet-based fabrics are closing the gap through RoCE enhancements, better ECN signaling, fine-grained pacing, and emerging efforts to reduce dependence on PFC. The goal is straightforward: preserve low latency under load without turning congestion control into a source of head-of-line blocking.

These architectural choices feed directly back into optics. A fabric with fewer hops and cleaner traffic behavior can better exploit high-speed links without wasting performance in buffering and recovery. That is one reason AI operators moving from 400G to 800G are also rethinking breakout plans, cabling layouts, and switch placement, as seen in discussions around 400G vs 800G transceivers for data center AI networks. In AI infrastructure, topology is no longer a logical overlay on the optical layer. It is one of the main forces shaping how that optical layer is built.

Choosing the Optical Engines of AI Scale: Modules, PHYs, and the 800G-to-1.6T Transition

The physical layer is now a strategic design decision in AI infrastructure, not a procurement detail. Once training clusters began pushing synchronized traffic across thousands of accelerators, optical module and PHY choices started shaping job completion time, rack power, and even how far a fabric could scale before latency budgets broke down. That is why the shift from 400G to 800G, and soon to 1.6T, is not simply a speed upgrade. It is a redesign of how electrical and optical boundaries are managed.

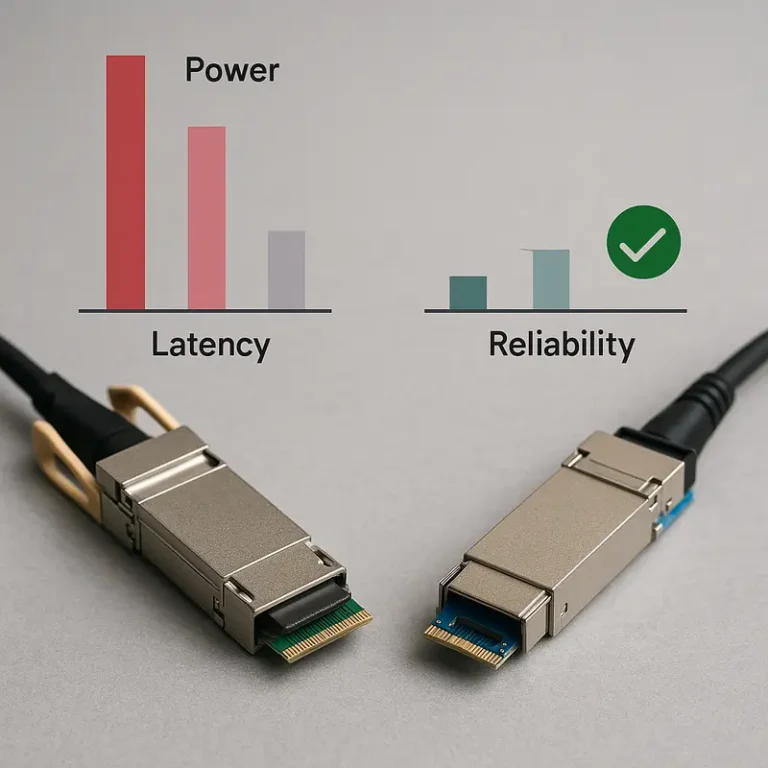

At the center of this transition is the move from older signaling to 100G and 200G per lane PAM4. PAM4 increases bandwidth efficiency, but it does so with tighter signal margins. That forces stronger forward error correction, more equalization, and stricter channel control. In AI clusters, those tradeoffs matter because every hop accumulates delay. FEC and DSP latency may look small in isolation, yet across a low-diameter fabric they consume a meaningful share of the microsecond budget that collective operations depend on.

This is also why module architecture matters as much as port speed. Conventional DSP-based pluggables remain the practical choice for many deployments because they provide robust interoperability and better tolerance for imperfect channels. They are especially useful where reach flexibility matters, including short single-mode links inside a pod and longer links that stretch across halls. But their power draw is significant at 800G, and early 1.6T modules push thermal design even harder. In dense AI clusters, optics can account for kilowatts per rack, turning transceiver selection into a cooling problem as much as a networking one.

That pressure explains the growing interest in linear-drive pluggable optics. By removing the module DSP, LPO can lower both power and latency, which aligns well with short-reach AI fabrics built on predictable cabling and tight host design. The downside is reduced margin. Signal integrity becomes more sensitive to board loss, connector variation, and vendor interoperability. For operators willing to engineer around those limits, LPO offers a path to better watts per bit. For a broader look at this migration path, see this guide on driving 400G and 800G optics for AI.

Form factor choices reflect the same realities. Thermal headroom has made OSFP especially attractive at 800G, while next-generation 1.6T systems are moving toward larger designs that can support 224G electrical I/O and heavier cooling demands. At the same time, single-mode optics such as DR and FR variants are becoming the default for new AI pods because they offer reach flexibility without locking the cabling plant into one topology. As 224G lanes arrive, copper remains valuable only at the shortest distances, and optics become the dominant medium almost everywhere else.

Scaling AI Beyond a Single Fabric: DCI Economics and the New Cost of Optical Connectivity

As AI clusters outgrow a single hall or campus, optical connectivity stops being only a rack-to-rack design question. It becomes a data center interconnect problem shaped by bandwidth hunger, latency sensitivity, and cost discipline. Large training jobs increasingly span multiple buildings, metro sites, and regional data hubs. That shift raises the importance of coherent optics, fiber plant planning, and the economics of moving huge model states and datasets without turning the network into the dominant bottleneck.

Inside the campus, the pressure is already clear. AI fabrics are moving from 400G to 800G at speed, with 1.6T close behind. Across sites, operators need similar step changes in interconnect capacity. Multi-site training, distributed checkpointing, and remote data lake access all drive sustained east-west traffic beyond the traditional data center boundary. This is why compact coherent pluggables have become central to AI scaling. They let operators extend high-capacity links over metro distances with far simpler architectures than older transport overlays. As 400ZR becomes standard and 800G coherent options emerge, the line between internal fabric expansion and regional interconnect strategy keeps fading.

The economics are just as disruptive as the technology. In AI networks, optics are no longer a rounding error in the bill of materials. At 800G, each link carries meaningful module power, and each switch can host dozens of those links. Across a large accelerator estate, optics power reaches megawatt scale quickly. That makes energy per bit, cooling overhead, and transceiver density board-level decisions as much as network ones. It also changes procurement strategy. Operators are modeling not just upfront port cost, but lifecycle power, replacement logistics, fiber utilization, and migration headroom to 200G-per-lane designs. The practical result is a much sharper focus on where short-reach parallel optics, duplex optics, or coherent links create the best return.

Cabling and operations feed directly into that return. Single-mode fiber is becoming the default because it supports both short-reach AI fabrics and longer DCI expansion without forcing a media redesign later. Connector choice, breakout strategy, and patch-field simplicity all affect how quickly clusters can scale and how safely teams can reconfigure them. Even seemingly narrow decisions around DR, FR, and LR optics now carry economic consequences, especially when multiplied across thousands of ports. For a useful grounding in these tradeoffs, see this overview of 400G DR4, FR4, and LR4 transceivers.

Those cost pressures also explain why supply chain resilience has become part of network architecture. Lead times, DSP availability, laser yields, and regional sourcing constraints can delay cluster expansion as surely as power or cooling limits. In AI data centers, interconnect economics now shape topology decisions, deployment timing, and even how far training can scale before sustainability becomes the next hard boundary.

Designing Sustainable Optical Connectivity as AI Data Centers Scale to 800G and Beyond

The shift to AI-scale infrastructure has made optical sustainability a core network design problem, not a secondary procurement concern. As clusters move from 400G to 800G and prepare for 1.6T, optics now shape power density, cooling strategy, service models, and long-term economics. A single high-speed link can consume tens of watts across both ends. Multiplied across thousands of ports, that becomes a material share of facility power. In many AI fabrics, the limiting factor is no longer only switch radix or bandwidth. It is whether the optical layer can deliver more bits without overwhelming thermal and energy budgets.

That pressure is changing how operators evaluate every connectivity choice. Passive copper still offers the best power profile at very short reach, but its practical distance shrinks as lane speeds rise. At 112G PAM4, and even more at 224G, copper becomes difficult beyond a few meters. This pushes more links onto single-mode optics, especially for row-scale and pod-scale deployments. Single-mode designs also provide cleaner migration paths across DR and FR reaches, simplify future speed upgrades, and avoid some of the constraints that come with multimode in dense AI environments. The result is a more optics-heavy architecture, but one that must be engineered carefully to avoid runaway power growth.

Module architecture now matters as much as raw reach. Conventional DSP-based pluggables remain the most flexible option, but they add both power and latency. For AI workloads, that combination is costly. Collective traffic is sensitive to microsecond-scale delays, and repeated FEC and signal-processing stages accumulate over multiple hops. This is why linear-drive pluggable optics are gaining attention for short-reach AI links. By removing the module DSP, they can reduce power and trim latency, though only where channel conditions are tightly controlled. The trade-off is narrower margin and stricter demands on host design, connectors, and interoperability. For many operators, that makes LPO attractive inside predictable cabling domains, while conventional pluggables remain safer across broader operational footprints.

The same sustainability logic extends to packaging. Near-packaged and co-packaged optics promise lower electrical loss, better energy per bit, and higher front-panel density. Yet they complicate replacement, maintenance, and lifecycle planning. That balance between efficiency and serviceability is becoming central to AI network design. Even cable management and cleanliness take on greater importance at these densities, especially with parallel optics and breakout-heavy deployments, making disciplined practices around 400G and 800G MPO/MTP migration pitfalls part of sustainability, not just installation quality. In that sense, sustainable optical connectivity for AI is not one technology choice. It is the disciplined alignment of PHY efficiency, thermal realism, operational simplicity, and upgrade paths that can survive the next bandwidth jump.

Final thoughts

AI training workloads are driving transformative changes in data center optical connectivity, pushing limits on speed, latency, and bandwidth. As operators adopt 800G and prepare for 1.6T connectivity, powering these networks sustainably while managing cost and complexity becomes critical. By keeping pace with advancements in standards, fabrics, and cooling techniques, engineers and planners can ensure their architectures not only meet AI demands today but also scale for the challenges of tomorrow’s distributed workloads.

Talk to ABPTEL about high-speed optics, MTP/MPO cabling, and data center interconnect solutions.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides high-speed optical transceivers, MTP/MPO cabling systems, DAC and AOC cables, PoE switches, FTTA solutions, and fiber tools for data center, AI, telecom, and network infrastructure projects.

Talk to ABPTEL

Looking for the right optical hardware for your AI data center, GPU cluster, or FTTA project? ABPTEL ships from Shenzhen with OEM/ODM support, fast lead times, and engineering-level pre-sales advice.

- 🔥 400G & 800G OSFP / QSFP-DD Transceivers — for AI training fabrics and hyperscale spine-leaf

- 📡 MPO / MTP High-Density Cabling — 12 / 24 / 32-fiber for high-density data centers

- ⚡ AOC & DAC Cables — short-reach GPU interconnects, OEM compatible

- 🧩 SFP / SFP+ / SFP28 / QSFP28 Modules — 1G to 100G optical transceivers

- 📋 Data Center Cabling Solutions — end-to-end design guide

- ❓ Read our FAQ — compatibility, polarity, lead time, MOQ

💬 Get a quote in 12 hours: Contact Candy · WhatsApp +86 188 1445 5697 · candy@abptel.com