As data center traffic grows with cloud adoption, AI infrastructure, and high-performance applications, network architects face mounting pressure to modernize interconnects. Amid the transition to 200G, 400G, and beyond, the 100G QSFP28 form factor continues to play a pivotal role because it offers a strong balance of cost, power, and reliability. Its technical simplicity, mature ecosystem, and flexible breakout options support a gradual transition across diverse use cases, from enterprise networks to the storage and management tiers of AI/ML environments. This article explains why 100G QSFP28 remains a cornerstone of infrastructure upgrade plans, covering its technical foundations, economics, migration strategies, operational reliability, and sustainability. With that combination of relevance, flexibility, and efficiency, QSFP28 optics let IT leaders and engineers meet current needs while preparing for later upgrades.

Why 100G QSFP28 Still Anchors Modern Upgrades: The Simple, Flexible Technical Base Behind Its Staying Power

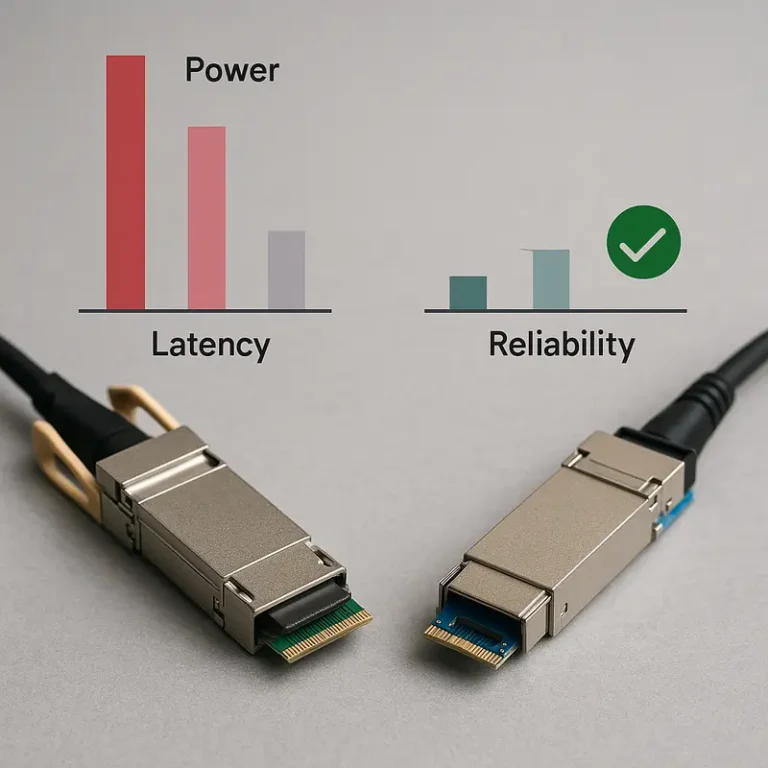

At the technical level, 100G QSFP28 continues to matter because it solves a difficult upgrade problem with very little drama. Its core design is straightforward: four 25G electrical lanes using mature NRZ signaling combine into a 100G Ethernet link. That simplicity has real consequences in production networks. It helps keep latency low, interoperability strong, and operational behavior predictable. For teams planning upgrades, those traits matter as much as raw bandwidth.
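The lane arithmetic behind that design can be checked in a few lines. This is a minimal sketch: the 25.78125 Gb/s per-lane rate and the 64b/66b line coding are the figures used by 100G Ethernet over four lanes, while the function and constant names are illustrative only.

```python
# Per-lane signaling rate for 100G Ethernet over four lanes, in Gb/s.
LANE_RATE_GBPS = 25.78125
LANES = 4
# 64b/66b line coding carries 64 payload bits in every 66 transmitted bits.
CODING_EFFICIENCY = 64 / 66


def ethernet_rate_gbps(lanes: int = LANES, lane_rate: float = LANE_RATE_GBPS) -> float:
    """Return the data rate left after removing 64b/66b coding overhead."""
    return lanes * lane_rate * CODING_EFFICIENCY


if __name__ == "__main__":
    print(f"Aggregate line rate: {LANES * LANE_RATE_GBPS} Gb/s")
    print(f"Ethernet data rate:  {ethernet_rate_gbps():.1f} Gb/s")
```

The "25G" lanes therefore run slightly faster than 25 Gb/s on the wire, and the coding overhead accounts for the difference between the aggregate line rate and the 100 Gb/s that Ethernet sees.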

The form factor also supports a wide range of media choices without changing the overall operational model. For very short runs, direct attach copper remains the lowest-cost and lowest-power option. For in-row or adjacent-row links, SR4 over multimode fiber is still common because it fits existing MPO-based plant and delivers dependable performance up to about 70 m on OM3 or 100 m on OM4. Where singlemode is preferred, CWDM4 and LR4 run over duplex fiber and reach roughly 2 km and 10 km respectively, which covers everything from large halls to campus-scale distances. A useful technical overview of these variants appears in this guide to 100G QSFP28 SR4, LR4, ER4, CWDM4, and PSM4 differences.
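The media choice described above can be expressed as a simple lookup. The sketch below is illustrative rather than a sizing tool: the reach values are nominal figures treated here as assumptions, and `pick_media` is a hypothetical helper. Real designs should be checked against module datasheets and the link loss budget.

```python
from typing import Optional

# Nominal maximum reaches in metres, ordered from shortest to longest.
# These are assumed typical values; confirm against the module datasheet.
REACH_M = [
    # (media name, plant type, max reach in metres)
    ("DAC", "copper", 5),
    ("SR4", "multimode", 100),      # OM4 figure; roughly 70 m on OM3
    ("CWDM4", "singlemode", 2_000),
    ("LR4", "singlemode", 10_000),
]


def pick_media(distance_m: float, plant: str) -> Optional[str]:
    """Return the shortest-reach option that covers distance_m on the given plant."""
    for name, plant_type, reach in REACH_M:
        if plant_type == plant and distance_m <= reach:
            return name
    return None  # nothing in the table covers this distance


if __name__ == "__main__":
    for distance, plant in [(3, "copper"), (80, "multimode"), (1_500, "singlemode")]:
        print(distance, plant, "->", pick_media(distance, plant))
```

Because the table is ordered by reach, the function returns the least expensive class of link that still covers the run, which mirrors how planners usually work outward from the rack.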

That media flexibility is one reason 100G fits so well into mixed-generation data centers. Older multimode infrastructure can often remain in place. Existing singlemode plant can support newer duplex-based options. Short copper links still reduce cost and power inside racks. Instead of forcing a full cabling redesign, QSFP28 often lets operators upgrade in stages.

Its versatility extends beyond link reach. A 100G QSFP28 port can break out into four 25G connections, which aligns neatly with the server reality in many enterprise and colocation environments. Large numbers of servers still run at 25G, so switches with 100G uplinks and 4x25G breakout preserve port efficiency while avoiding premature server-side replacement. In phased migrations, that flexibility is often more valuable than jumping immediately to a denser but more disruptive architecture.
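A quick port-count calculation shows how breakout affects switch planning. This is a minimal sketch; the function names and the 42-server example are hypothetical.

```python
import math


def breakout_ports_needed(servers_25g: int, lanes_per_port: int = 4) -> int:
    """Number of 100G QSFP28 ports needed to attach 25G servers via 4x25G breakout."""
    if servers_25g < 0:
        raise ValueError("server count must be non-negative")
    return math.ceil(servers_25g / lanes_per_port)


def spare_lanes(servers_25g: int, lanes_per_port: int = 4) -> int:
    """25G lanes left unused after all servers are attached."""
    return breakout_ports_needed(servers_25g, lanes_per_port) * lanes_per_port - servers_25g


if __name__ == "__main__":
    servers = 42  # hypothetical rack of 25G-attached servers
    print(breakout_ports_needed(servers), "ports,", spare_lanes(servers), "spare lanes")
```

For the hypothetical 42-server rack, 11 QSFP28 ports are enough, with two 25G lanes left over for growth.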

QSFP28 also remains technically relevant because it bridges cleanly into 400G designs. In many upgrade paths, 400G spine ports fan out into four 100G links toward existing leaf layers. That means 100G is not a dead end. It is a stable interoperability layer between older 25G access networks and newer 400G transport domains.

Even newer single-lambda 100G variants strengthen that bridge. They use PAM4 and forward error correction, but they also align well with duplex-fiber migration strategies and 400G breakout models. The result is a platform that combines mature NRZ options for low-latency environments with newer optical choices for cleaner long-term transitions. That balance of simplicity and adaptability is exactly why 100G QSFP28 still belongs in modern data center upgrade plans.

Why 100G QSFP28 Still Wins on Cost, Power, and ROI in Modern Data Center Upgrades

The business case for 100G QSFP28 remains unusually strong because upgrade decisions are rarely driven by raw speed alone. Most operators are balancing bandwidth growth against power caps, cooling limits, optics budgets, and the useful life of existing cabling. In that equation, 100G often delivers the best near-term total cost of ownership. It is mature, widely available, and operationally predictable. That lowers both acquisition cost and the hidden expenses tied to qualification, troubleshooting, and sparing.

Port economics are a major reason it stays in active plans. A 100G link usually costs far less to light than a 400G link, especially when optics are included. The gap is not just in module price. Higher-speed platforms often raise spending on denser switching hardware, airflow design, and rack power allocation. Even when 400G is more efficient per bit, 100G still has an advantage in absolute power per port. Typical 100G QSFP28 optics draw roughly 2.5 to 4.5 W depending on reach, while 400G optics often draw around 8 to 12 W. That difference matters in legacy rooms where a few extra watts per port, multiplied across a fully populated switch, can trigger thermal redesigns or force lower rack densities.
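The difference between per-bit efficiency and absolute port power is easy to see with a small calculation. The wattages below are assumed round numbers chosen for illustration, not measurements of any particular module, and the helper names are hypothetical.

```python
def watts_per_gbps(module_watts: float, speed_gbps: float) -> float:
    """Power efficiency of one module in watts per Gb/s."""
    return module_watts / speed_gbps


def switch_optics_watts(ports: int, module_watts: float) -> float:
    """Total optics power for a switch with every port populated."""
    return ports * module_watts


if __name__ == "__main__":
    # Assumed example values: 3.5 W for a 100G module, 10 W for a 400G module.
    assumed = {"100G": (100, 3.5), "400G": (400, 10.0)}
    for name, (speed, watts) in assumed.items():
        print(
            f"{name}: {watts_per_gbps(watts, speed):.3f} W per Gb/s, "
            f"{switch_optics_watts(32, watts):.0f} W for 32 ports"
        )
```

Under these assumptions the 400G module is more efficient per bit, yet a 32-port switch full of them draws nearly three times the optics power. Constrained rooms feel the second number first.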

This is why 100G frequently remains the practical choice for leaf uplinks, aggregation layers, storage fabrics, and edge cores. Many of these environments do not need maximum fabric density. They need stable throughput, modest latency, and a predictable operating envelope. Keeping 100G in these tiers also protects prior investments in multimode MPO trunks, duplex singlemode links, and short copper runs. Reusing that plant can remove a large amount of migration cost that never appears on an optics quote. It also reduces installation labor, validation time, and the risk of patching errors.

The operational side of TCO is just as important. 100G QSFP28 benefits from years of field experience, mature diagnostics, and broad multivendor availability. That translates into fewer surprises during deployment and faster fault isolation when links degrade. Lower failure rates and easier procurement also mean smaller spare pools and less exposure to supply disruptions. Compared with newer, faster optics, 100G is simply easier to keep in service at scale.

For planners building a phased path forward, that economic stability creates flexibility. A data center can introduce 400G where radix and backbone capacity justify it, while retaining 100G where workloads are still well served. That blended model often produces a better ROI than a full-speed refresh. It also aligns well with the breakout strategies discussed in 400G DR4, FR4, and LR4 transceiver options, where higher-speed spines can coexist with existing 100G layers instead of forcing immediate replacement.

How 100G QSFP28 Keeps Upgrade Paths Open from 25G Access to 400G Spine Growth

The strongest argument for 100G QSFP28 in modern upgrade plans is not nostalgia. It is flexibility. Few link technologies sit as comfortably between older 25G server estates and newer 400G backbone designs. That middle position matters in real data centers, where refresh cycles rarely happen all at once.

At the access layer, 100G QSFP28 still maps neatly to how many enterprise fabrics are built. A single 100G port can break out into 4x25G links for server connections, which lets operators keep proven 25G NICs in place while raising uplink capacity. In brownfield environments, some platforms can also support 4x10G breakout, extending the useful life of older hosts during staged refreshes. This is why 100G often remains the practical control point for leaf switches: it supports incremental change instead of forcing wholesale replacement.

That same flexibility becomes even more valuable during spine upgrades. Many operators now move the spine to 400G first, then leave 100G at the leaf until application demand justifies a broader refresh. The key enabler is 400G DR4 breakout into 4x100G links. A 400G spine port can fan out to four 100G leaf links, increasing aggregate spine capacity without stranding existing investments. Each breakout leg is a single-lambda 100G PAM4 signal over singlemode fiber, so the leaf end needs a matching single-lambda module such as 100G DR rather than a 4x25G NRZ optic such as SR4, CWDM4, or LR4. The approach also preserves operational continuity, because the fabric can scale in radix and throughput while edge domains continue running on familiar switches, cabling, and automation models. For planners comparing optical options, this overview of 400G DR4, FR4, and LR4 transceivers helps clarify why DR4 is usually the breakout-friendly choice.
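The port savings from 400G breakout can be estimated with a short calculation. This is a sketch under simple assumptions: every leaf has the same number of 100G uplinks to a given spine switch, and every 400G port breaks out into four 100G links. The function name and example figures are hypothetical.

```python
import math


def spine_ports_needed(leaf_count: int, uplinks_per_leaf: int = 1, breakout: int = 4) -> int:
    """400G ports required on one spine switch to terminate all 100G leaf uplinks."""
    total_100g_links = leaf_count * uplinks_per_leaf
    return math.ceil(total_100g_links / breakout)


if __name__ == "__main__":
    leaves = 48  # hypothetical fabric size
    print("100G links to terminate:", leaves)
    print("400G spine ports with 4x100G breakout:", spine_ports_needed(leaves))
```

In this example a spine that would need 48 native 100G ports terminates the same leaves on 12 breakout-capable 400G ports, leaving the rest of the switch free for growth.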

Cabling strategy shapes these migrations as much as switching strategy. Existing MPO-based multimode plants make 100G SR4 an easy fit for short leaf-to-spine runs. Singlemode environments gain even more latitude. Duplex LC-based 100G single-lambda optics, such as DR, FR1, and LR1, reduce fiber count and align well with later 400G breakout architectures. That means an operator can standardize patching and simplify moves, adds, and changes today without blocking a future move to denser fabrics.
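The fiber-count difference is worth quantifying when sizing trunks. The sketch below assumes the usual strand counts: parallel optics such as SR4 and PSM4 use eight active fibers per link, while duplex optics use two. The table and function names are illustrative.

```python
# Active fiber strands per 100G link, by optic type.
FIBERS_PER_LINK = {
    "SR4": 8,    # parallel multimode: 4 transmit + 4 receive over MPO
    "PSM4": 8,   # parallel singlemode over MPO
    "CWDM4": 2,  # duplex LC
    "LR4": 2,
    "DR": 2,
    "FR1": 2,
    "LR1": 2,
}


def fibers_needed(optic: str, links: int) -> int:
    """Total active strands needed to light the given number of links."""
    return FIBERS_PER_LINK[optic] * links


if __name__ == "__main__":
    for optic in ("SR4", "DR"):
        print(f"32 x 100G {optic}: {fibers_needed(optic, 32)} fibers")
```

For 32 links, the duplex option needs a quarter of the strands that a parallel option does, which is the practical meaning of "reduce fiber count" when planning trunk capacity.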

This does not make 100G the final destination for every workload. AI training clusters and very high-density spines may need 400G or 800G immediately. But many storage networks, campus cores, distributed application fabrics, and support networks do not. In those environments, 100G QSFP28 remains a highly effective bridge technology. It connects current workloads to future capacity, and it does so with minimal disruption, sensible cabling reuse, and migration paths that can unfold one layer at a time.

Why 100G QSFP28 Remains the Reliable, Low-Latency Workhorse in Modern Data Center Upgrades

If earlier upgrade decisions are about how to scale, this part of the plan is about how safely and efficiently that scale operates day after day. That is where 100G QSFP28 still earns its place. Its value is not only bandwidth. It is the combination of predictable latency, mature interoperability, and operational behavior that teams already understand well.

For many data centers, that maturity matters as much as raw throughput. A 100G QSFP28 link built on four 25G NRZ lanes avoids much of the signal complexity introduced by newer, denser optics. In practice, that often means less signal processing in the data path, fewer tuning surprises, and faster troubleshooting when a link behaves badly. On common SR4, CWDM4, or LR4 deployments, the optics themselves add very little latency on top of normal fiber propagation delay. That makes 100G especially attractive for storage traffic, east-west application flows, and smaller leaf-spine fabrics where consistent response time matters more than maximum fabric density.
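For context, fiber propagation delay itself is easy to estimate, and it sets the floor that any optic adds to. The sketch below assumes a group index of about 1.468 for silica fiber, which is a typical value rather than a property of any specific cable; the function name is illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
GROUP_INDEX = 1.468  # assumed typical value for silica fiber


def fiber_delay_ns(length_m: float, group_index: float = GROUP_INDEX) -> float:
    """One-way propagation delay through a fiber of the given length, in nanoseconds."""
    return length_m * group_index / SPEED_OF_LIGHT_M_PER_S * 1e9


if __name__ == "__main__":
    for length in (100, 2_000, 10_000):
        print(f"{length:>6} m: {fiber_delay_ns(length) / 1000:.2f} microseconds one way")
```

That works out to a little under 5 ns per metre, so an in-row link stays well below a microsecond while a 10 km campus span approaches 50 microseconds regardless of which module is installed.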

Operationally, 100G also benefits from a long, stable ecosystem. Diagnostics are familiar, alarm thresholds are well understood, and multivendor interoperability is more consistent than with newer generations. Digital monitoring data such as temperature, receive power, transmit power, and bias current gives teams enough visibility to catch degrading links before they fail hard. This lowers mean time to repair and reduces the need to overstock spares. In practical terms, mature 100G environments usually produce fewer surprises during maintenance windows and fewer interoperability escalations after changes.
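A threshold check on monitoring data might look like the following. This is a simplified sketch: the `DomReading` structure and the warning windows are hypothetical placeholders, and a production tool would read per-lane values and the vendor-programmed thresholds from the module itself.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DomReading:
    temperature_c: float
    rx_power_dbm: float
    tx_power_dbm: float
    bias_ma: float


# Hypothetical warning windows (low, high). Real limits come from the module.
WARN_LIMITS = {
    "temperature_c": (0.0, 70.0),
    "rx_power_dbm": (-10.0, 2.0),
    "tx_power_dbm": (-8.0, 2.5),
    "bias_ma": (2.0, 12.0),
}


def out_of_range(reading: DomReading) -> List[str]:
    """Return one message per monitored value that falls outside its warning window."""
    problems = []
    for field, (low, high) in WARN_LIMITS.items():
        value = getattr(reading, field)
        if not low <= value <= high:
            problems.append(f"{field}={value} outside [{low}, {high}]")
    return problems


if __name__ == "__main__":
    degrading = DomReading(temperature_c=45.0, rx_power_dbm=-11.2, tx_power_dbm=-1.0, bias_ma=6.5)
    print(out_of_range(degrading))
```

Running a check like this on a schedule, and trending the values over time, is what lets a team replace or clean a link during a planned window instead of after a hard failure.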

That reliability shows up most clearly in real-world deployments. Enterprise and colocation networks still use 100G comfortably for leaf uplinks, aggregation tiers, and compact spine layers paired with 25G server access. Storage clusters and backup fabrics often prefer it because latency is stable and behavior under congestion is well characterized. Campus and edge operators continue to rely on 100G singlemode options for longer in-building and cross-campus runs, while avoiding the operational weight of more advanced transport solutions. Even in AI-focused environments, 100G remains useful for management, telemetry, and storage-side connectivity that does not need the cost or thermal profile of 400G.

Of course, reliability is never automatic. MPO-based links still demand polarity discipline and clean end faces, and PAM4-based 100G variants need FEC configured consistently between host and module and across both ends of the link. Those are manageable requirements, not structural drawbacks. For teams that want a quick reference on optical form factors and deployment differences, this overview of 100G QSFP28 SR4, LR4, ER4, CWDM4, and PSM4 modules is useful context. In upgrade planning, that mix of proven performance and operational confidence is exactly why 100G QSFP28 remains hard to displace.

Why 100G QSFP28 Still Makes Sense: Sustainability Gains and Supply Chain Stability in Modern Upgrade Plans

One reason 100G QSFP28 remains relevant in data center upgrade planning is that it supports progress without forcing unnecessary replacement. Many operators are not choosing between old and new in absolute terms. They are deciding where higher speeds create real value, and where proven 100G links still deliver the best balance of capacity, cost, and operational impact. That distinction matters for both sustainability goals and procurement resilience.

Keeping 100G in the right parts of the network reduces waste at several levels. Existing OM3 and OM4 MPO runs can often stay in place for SR4 links. Singlemode plants can continue serving CWDM4, LR4, or newer single-lambda options without a full cabling redesign. That avoids ripping out trays, cassettes, patch panels, and still-usable optics simply to standardize on a faster interface everywhere. In practical terms, a phased architecture often has a smaller material footprint than a full-speed refresh, especially when 100G remains sufficient for storage, aggregation, campus interconnects, and management networks.

The power story is just as important. Although 400G and 800G can look better on a watts-per-bit basis, 100G often wins on absolute port power, which is what constrained facilities feel first. Lower module draw means less heat per rack, less pressure on cooling, and fewer forced upgrades to power distribution. In older rooms, that can determine whether a modernization plan stays financially realistic. Extending the service life of stable 100G optics also lowers replacement churn, which reduces spare consumption and electronic waste over time.

Supply chain dynamics reinforce that value. 100G QSFP28 sits in a mature part of the market, with broad vendor support, multiple optical types, and fewer availability shocks than leading-edge 400G or 800G parts. Mature NRZ-based designs usually face less pressure from advanced DSP constraints, bleeding-edge packaging limits, and long qualification cycles. That wider ecosystem gives buyers more sourcing flexibility, better pricing leverage, and a stronger hedge against tariffs, regional disruptions, or lead-time spikes.

This does not mean newer optics lack advantages. Higher-speed fabrics are essential in dense AI clusters and very high-radix spines. But many upgrade paths work best when 100G keeps the roles it already performs well, while faster layers are introduced where density and throughput justify them. That is why sustainability and supply chain planning increasingly overlap with architecture. A network that reuses fiber, preserves functioning optics, and relies on a broad procurement base is not just cheaper to operate. It is also easier to scale with less disruption. For teams comparing generations of pluggables, this comparison of 400G vs 800G transceivers provides useful background on the broader landscape.

Final thoughts

While data centers steadily migrate toward higher bandwidth interconnects, 100G QSFP28 remains indispensable in phased upgrade strategies. It combines reliability and affordability with backward and forward compatibility, ensuring immediate value without compromising future scalability. Its low power requirements, mature ecosystem, and versatile breakout configurations solidify 100G QSFP28 as a practical and future-ready option for diverse workloads. Whether maintaining existing infrastructure or supporting incremental AI and cloud growth, 100G QSFP28 empowers operators to innovate with confidence.

Talk to ABPTEL about high-speed optics, MTP/MPO cabling, and data center interconnect solutions.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides high-speed optical transceivers, MTP/MPO cabling systems, DAC and AOC cables, PoE switches, FTTA solutions, and fiber tools for data center, AI, telecom, and network infrastructure projects.
