The explosive growth of artificial intelligence (AI) and large-scale machine learning (ML) has revolutionized the structure and operational needs of modern data centers. With AI clusters expanding to tens of thousands of GPUs per training job, the demand for high-bandwidth, low-latency connectivity within data centers has skyrocketed. This demand is catalyzing a swift upgrade to 400G and 800G optical links, essential for the low-oversubscription, near non-blocking fabrics powering AI workloads. From advances in switch silicon to the energy efficiency of optical modules, and from economic considerations to the move toward 1.6T roadmaps, this article explores the core drivers of this optical evolution. Readers will gain insights into how GPU-driven east-west traffic, evolving network protocols, and cutting-edge innovations in power and thermal management are shaping the future of data center connectivity.

How GPU Cluster Scale and East–West Traffic Are Accelerating 400G and 800G Fabric Design

AI data centers are demanding faster optics because GPU clusters no longer behave like traditional server farms. As training jobs expand from hundreds of accelerators to thousands or even tens of thousands, the network becomes part of the compute system itself. Each node must exchange model states, gradients, and parameters continuously. That traffic is mostly east–west, flowing between servers rather than north–south toward users or storage. The result is a fabric that must deliver huge bisection bandwidth with very low and predictable latency.

This matters because large AI jobs are gated by collective communication. Operations such as all-reduce and all-gather force many GPUs to synchronize at once. If the network stalls, expensive accelerators sit idle. That is why AI operators target very low oversubscription, often close to non-blocking in training tiers. Higher port speeds help achieve that goal more efficiently. A 400G or 800G link carries more bandwidth per hop, reduces serialization delay, and allows the same cluster to be built with fewer physical links and fewer stages. In practice, that improves scaling efficiency and shortens time-to-train.

Cluster design reinforces the shift. Modern GPU servers expose multi-terabit network bandwidth, often through several high-speed ports. Once each server can inject terabits of traffic into the fabric, older 100G and 200G designs become structurally limiting. Operators therefore build dense leaf-spine or multi-tier Clos networks, and in some cases dragonfly-style topologies, to preserve path diversity and keep hop counts low. High-radix switching makes these architectures practical, but it also multiplies optics demand. Every additional spine connection, every low-oversubscription uplink, and every breakout strategy raises the number of optical interfaces required across the pod.

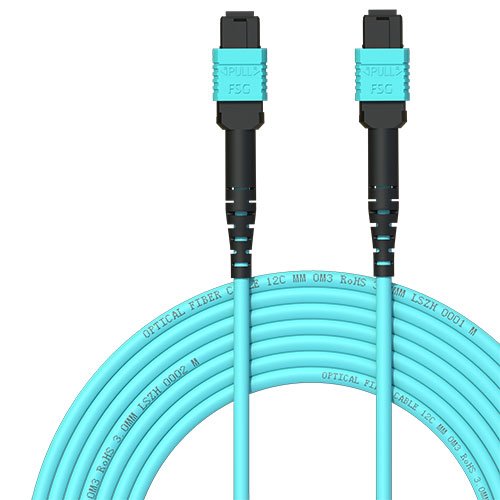

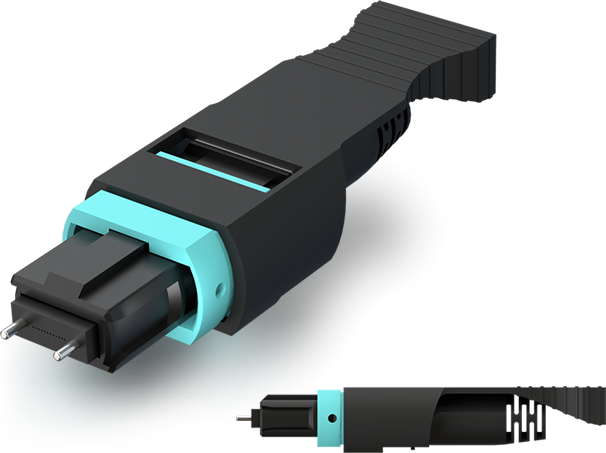

Cabling choices also follow from topology. Short copper links work only within limited rack distances. Beyond that, AI fabrics depend heavily on parallel single-mode optics and structured fiber plants. That is one reason details like MPO polarity, connector cleanliness, and loss budgets become operational issues rather than cabling afterthoughts. For teams planning dense AI fabrics, this guide to 400G/800G MPO/MTP loss budget and polarity is especially relevant.

The essential point is that 400G and 800G demand is not being driven by optics in isolation. It is being driven by the physics of distributed AI. Bigger clusters create more synchronized east–west traffic, and that traffic forces flatter, faster, lower-latency fabrics. Once those architectural choices are made, high-speed optics become the only practical way to wire the system at scale.

How Switch Silicon, SerDes, and Optical Modules Are Accelerating 400G and 800G Demand in AI Data Centers

The jump from AI cluster design to actual optics demand happens at the switch. Once operators decide they need flatter fabrics, lower oversubscription, and faster collective communication, the next constraint is the bandwidth each switch port can deliver. That is why switch silicon and SerDes advances have become a direct driver of 400G adoption and the rapid rise of 800G.

The earlier 12.8T and 25.6T switch generations made 400G practical at scale. They gave operators more faceplate density and more flexibility without expanding rack count. But the real inflection point for AI fabrics is the 51.2T class. With 112G-per-lane PAM4 SerDes, this generation supports 64 native 800G ports or far more 400G connections through breakout. That matters because AI networks are not built only around raw speed. They are built around usable radix, efficient cabling, and the ability to preserve bandwidth across several fabric tiers. Higher-radix switches reduce the number of boxes, hops, and links needed to reach a given bisection target, which turns silicon progress into immediate optics consumption.

SerDes evolution is the hidden engine here. Moving from 50G-per-lane to 112G-per-lane electrical I/O changes the economics of the entire fabric. It allows a single port to carry twice the bandwidth without doubling the front-panel footprint. It also enables smoother migration paths. A native 800G port can serve as one 800G uplink, two 400G links, or lower-speed breakouts where needed. In practice, that flexibility lets operators mix generations while still standardizing on newer switch platforms. The result is a faster ramp in both 400G and 800G optical volumes.

Optical module design has evolved in parallel. At 400G, DR4 and FR4 became common because they balance reach, fiber use, and deployment simplicity. At 800G, the market is sorting among DR8, DR4, and FR4-style approaches depending on distance, fiber plant, and thermal budget. Form factor choice is also becoming strategic. Higher-speed modules often push thermal envelopes hard enough that airflow and faceplate design affect what a switch can actually support in production. For teams planning structured cabling, connector count and polarity also become critical at these speeds, especially with parallel optics and breakouts, as outlined in this guide to 400G/800G MPO/MTP loss budget and polarity.

This is also why 800G demand is rising before 1.6T is mainstream. The silicon is ready, the module ecosystem is broadening, and 800G already solves immediate AI scaling problems. Newer optical approaches can trim power or improve density, but the core story is simple: when switch chips deliver more high-speed lanes, AI data centers buy more high-speed optics.

Why Power, Thermal Limits, and Form Factors Are Accelerating 400G and 800G Optics in AI Data Centers

The move to 400G and 800G optics in AI data centers is not only a bandwidth story. It is also a power-density story. As AI clusters scale, every switch port added to sustain low oversubscription also adds heat, faceplate congestion, and cooling load. That changes optical system design in a very practical way. Operators are no longer choosing modules only by reach and cost. They are balancing watts per port, airflow behavior, connector density, and serviceability across thousands of links.

At 800G, this tradeoff becomes much sharper. A fully populated high-radix switch can devote well over a kilowatt to optics alone. That makes the module envelope just as important as the switch silicon behind it. Larger form factors gain favor because they can dissipate more heat and support more aggressive heatsink designs. Smaller formats still matter where density is critical, but thermal headroom often decides what can actually be deployed at scale. In AI fabrics, where ports are populated quickly and run hot under sustained traffic, theoretical density means little if cooling margins are thin.

Reach selection also follows this same logic. Parallel single-mode optics are attractive for short and medium data center spans because they keep latency low and avoid heavier signal processing. Wavelength-division approaches reduce fiber count, which helps cable management, but they can increase optical complexity and thermal burden. The result is that form factor, fiber plant, and module architecture must be planned together, not separately. This is especially true when operators are weighing DR4, DR8, or FR4-style links for different tiers of an AI fabric. The cabling side of that decision is tightly linked to polarity, connector count, and insertion loss, which becomes more demanding during migration to higher-speed lanes. A practical reference is this guide to 400G/800G MPO/MTP loss budget and polarity.

Power pressure is also why interest in lower-power optical approaches has increased. Designs that remove or reduce onboard signal processing can cut several watts per module, which compounds into meaningful rack-level savings. Yet those savings come with tighter electrical tolerances and less margin for imperfect channels. That makes them appealing mainly for controlled short-reach environments, not as a universal replacement.

All of this explains why 800G adoption is rising even as it complicates thermal engineering. Fewer links are needed for the same aggregate bandwidth, and newer optics can improve bandwidth per watt despite higher absolute power draw. In AI data centers, that combination matters: the winning optical design is the one that fits the thermal envelope and keeps the GPUs from waiting on the network.

Why AI Data Centers Are Spending So Aggressively on 400G and 800G Optics

The demand surge for 400G and 800G optics is not only a technical story. It is also a capital allocation story. AI infrastructure budgets have expanded because networking now determines how efficiently expensive accelerators are used. When thousands of processors wait on synchronization traffic, the cost of a slower fabric quickly exceeds the cost of faster optics. That changes procurement logic. Operators are no longer buying transceivers as peripheral components. They are funding them as performance-critical assets that protect cluster utilization and shorten training time.

This shift is most visible in hyperscale spending patterns. Large AI buildouts require far more optical links than conventional cloud pods because training fabrics are designed with very low oversubscription. More links, more tiers, and higher radix switches all multiply module counts. A migration from 400G to 800G can raise per-module pricing in the near term, yet still improve the economics of the full fabric by reducing switch layers, cabling complexity, and the number of physical links needed for a given bisection target. That is why buyers increasingly model optics at the system level rather than the simple port level. A useful way to frame that tradeoff is the true cost of a data center port, where optics, fiber plant, power, and operational overhead must be evaluated together.

Supply chain conditions reinforce this urgency. Demand is concentrated around a narrow set of high-speed components: advanced electrical interfaces, digital signal processors, lasers, photonic engines, and high-yield packaging. If any one of those layers tightens, lead times stretch and cluster deployment slips. For AI operators, a delayed network can idle far more capital than the optics line item itself. That is why dual sourcing, design standardization, and multi-vendor qualification have become strategic priorities. The broad 400G ecosystem still offers a maturity advantage here, especially for common reaches. But 800G is gaining volume quickly as yields improve and manufacturing ramps.

Regional policy and trade constraints add another layer. Even where access to leading accelerators is restricted, demand for high-speed Ethernet fabrics remains strong because domestic AI clusters still need dense optical interconnects. This supports localized module assembly and diversified supply chains, especially in markets seeking more control over critical infrastructure inputs.

As a result, 400G remains the volume anchor for cost-sensitive and transitional deployments, while 800G is becoming the preferred spend for new large-scale AI fabrics. The next chapter builds on this economic logic by showing how protocol choices, inter-site connectivity, and the road to 1.6T will shape the next wave of optical demand.

Why Protocol Choices, DCI Expansion, and the 1.6T Roadmap Are Accelerating 400G and 800G Optics in AI Data Centers

As AI clusters expand beyond single halls and even single buildings, protocol choice increasingly shapes optics demand. Ethernet with RoCEv2 and InfiniBand now compete less on raw headline speed than on how efficiently they move collective traffic at scale. Training jobs generate synchronized bursts, incast, and persistent east–west congestion. That makes low tail latency, predictable loss behavior, and strong congestion handling essential. In practice, both fabrics are pushing operators toward dense 400G deployments and a fast transition to 800G, because protocol tuning alone cannot overcome physical bandwidth limits.

For Ethernet fabrics, the attraction is ecosystem breadth and operational consistency. Large operators can run AI alongside storage and cloud networking with familiar tooling, then tune congestion control, ECN, queueing, and load balancing for GPU traffic. InfiniBand still holds an edge in tightly optimized training environments, especially where credit-based flow control and established software stacks matter most. Yet both paths land in the same place: more optical ports, faster port speeds, and flatter fabrics. Once clusters reach tens of thousands of accelerators, every additional network tier or oversubscribed uplink directly affects scaling efficiency. That is why 800G optics are becoming the practical unit of expansion, while 400G remains the volume layer for broader interoperability and breakouts.

This pressure grows further when AI infrastructure spreads across metro campuses. Data center interconnect is no longer only about disaster recovery or basic workload mobility. It increasingly supports cluster pooling, checkpoint movement, and linking superpods across buildings with near-seamless bandwidth. Coherent 400G and emerging 800G pluggables make that possible without the cost and footprint of traditional external transport shelves. The result is a multiplier effect: high-speed DCI increases demand for high-speed client optics inside each facility as fabrics are designed around the same 400G and 800G edge assumptions.

The next transition is already influencing current buying decisions. The roadmap to 1.6T promises another jump in radix and bandwidth density, but it does not displace 800G in the near term. Instead, it reinforces 800G as the deployment baseline because operators want fiber plants, connector strategies, and switch designs that can bridge generations smoothly. That is why form factor, breakout flexibility, and cabling discipline matter so much now. For teams planning these migrations, details like MPO polarity and loss budgets quickly become strategic, not merely operational, as outlined in this 400G/800G migration pitfalls guide. In that sense, protocols, DCI, and 1.6T are not separate stories. Together, they explain why 400G demand stays strong while 800G becomes the core architecture for AI scale.

Final thoughts

In today’s AI-driven era, the rapid rise in GPU cluster sizes and data traffic within data centers is driving demand for 400G and 800G optics. High-speed optical technologies are now indispensable for scalable, efficient, and sustainable AI training and inference infrastructure. From innovations in switch silicon and optical modules to power management strategies and the 1.6T horizon, every advancement is directly addressing the bandwidth, latency, and energy needs of hyperscale deployments. The ongoing evolution of optical connectivity, powered by billions in CAPEX investments, is ensuring that AI workloads are poised to reach breakthrough performance levels while staying cost-effective and sustainable.

Talk to ABPTEL about high-speed optics, MTP/MPO cabling, and data center interconnect solutions to accelerate your AI deployments.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides high-speed optical transceivers, MTP/MPO cabling systems, DAC and AOC cables, PoE switches, FTTA solutions, and fiber tools. These solutions are designed for data center, AI, telecom, and network infrastructure projects focused on scalability, cost efficiency, and reliable performance.

Talk to ABPTEL

Looking for the right optical hardware for your AI data center, GPU cluster, or FTTA project? ABPTEL ships from Shenzhen with OEM/ODM support, fast lead times, and engineering-level pre-sales advice.

- 🔥 400G & 800G OSFP / QSFP-DD Transceivers — for AI training fabrics and hyperscale spine-leaf

- 📡 MPO / MTP High-Density Cabling — 12 / 24 / 32-fiber for high-density data centers

- ⚡ AOC & DAC Cables — short-reach GPU interconnects, OEM compatible

- 🧩 SFP / SFP+ / SFP28 / QSFP28 Modules — 1G to 100G optical transceivers

- 📋 Data Center Cabling Solutions — end-to-end design guide

- ❓ Read our FAQ — compatibility, polarity, lead time, MOQ

💬 Get a quote in 12 hours: Contact Candy · WhatsApp +86 188 1445 5697 · candy@abptel.com