AI cluster networks demand performance, reliability, and scalability to support cutting-edge technologies like deep learning and large-scale computations. Optical transceivers play a vital role, bridging compute nodes with ultra-fast connections. Choosing the right transceiver—400G or 800G—impacts not only speed but also power efficiency and cost-effectiveness. This article unpacks the decision-making process with clarity. From understanding technical specifications to topology design, cabling requirements, and deployment best practices, each chapter offers actionable insights specifically crafted for professionals. By the end, you’ll be equipped to make informed choices tailored to your data center needs and AI workloads.

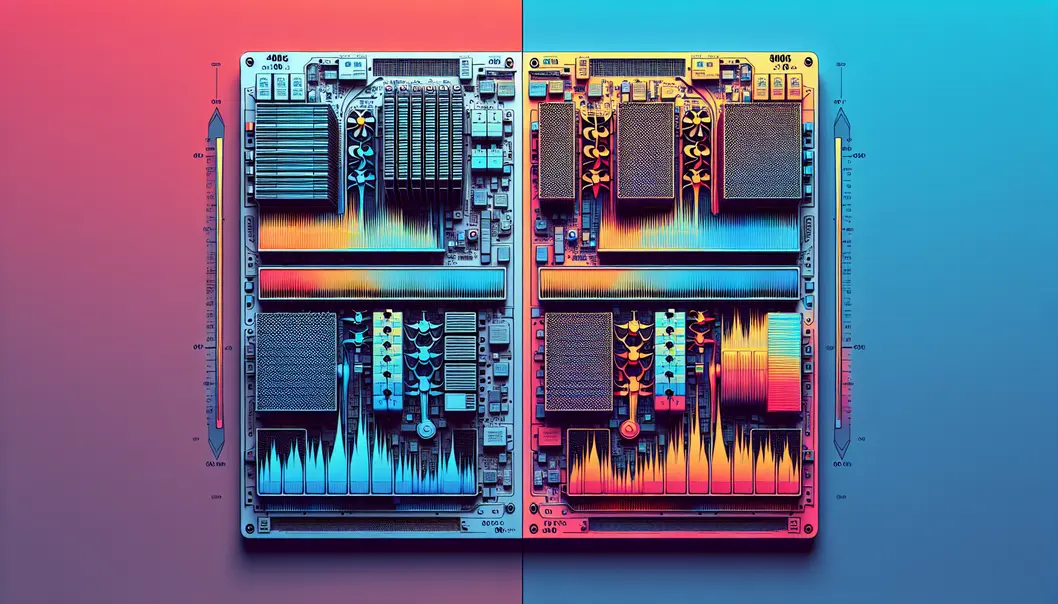

What Really Separates 400G and 800G Transceivers in AI Cluster Networks

Choosing between 400G and 800G transceivers starts with a clear reading of the underlying specifications, because the headline speed alone says very little about fit. In AI cluster networks, the real comparison is built from lane architecture, modulation, reach, connector format, power, thermal density, and breakout flexibility. Those factors determine not only how much bandwidth each link can carry, but also how efficiently the fabric can scale.

At the physical layer, 400G optics are commonly deployed through either 4 x 100G electrical lanes or 8 x 50G lane designs, depending on the module type and media. By contrast, 800G optics usually move to 8 x 100G signaling. That shift matters because it aligns better with newer switch silicon and high-radix AI fabrics. It also changes the cabling and optical engine requirements. In practical terms, 800G is not simply “double 400G.” It often represents a different design generation with tighter signal integrity demands and stricter thermal limits.

Reach options are equally important. For short in-rack or row-based links, multimode variants may still serve 400G deployments well, especially where legacy fiber plants remain in place. But many AI clusters favor single-mode optics for cleaner scaling across leaf, spine, and backbone layers. Here, both 400G and 800G can support short- to medium-reach designs, yet 800G usually makes more sense when link counts are exploding and operators want to reduce the number of physical ports needed for the same aggregate throughput. That is one reason many planners compare not just optics speed, but also cost per delivered bit and cost per front-panel port. A useful framework appears in this guide to calculating the true cost of a data center port.

Form factor also shapes the decision. Higher-speed modules often demand larger thermal envelopes, more careful airflow planning, and tighter host compatibility checks. A 400G transceiver may offer a broader installed base and easier interoperability in mixed environments. An 800G transceiver may deliver stronger long-term density, but only if the switch, cage, power budget, and cooling design were built for it.

Breakout support adds another layer. In some AI networks, 400G is attractive because it can split efficiently into lower-rate server or accelerator links during phased migrations. In others, 800G is preferred because it reduces oversubscription pressure and matches next-generation fabric bandwidth more directly. These specification differences are not isolated details. They shape how the network can be wired, expanded, and balanced, which leads directly into the topology and fabric choices that determine whether 400G or 800G is the better architectural fit.

Choosing 400G vs 800G Transceivers by AI Cluster Topology and Fabric Design

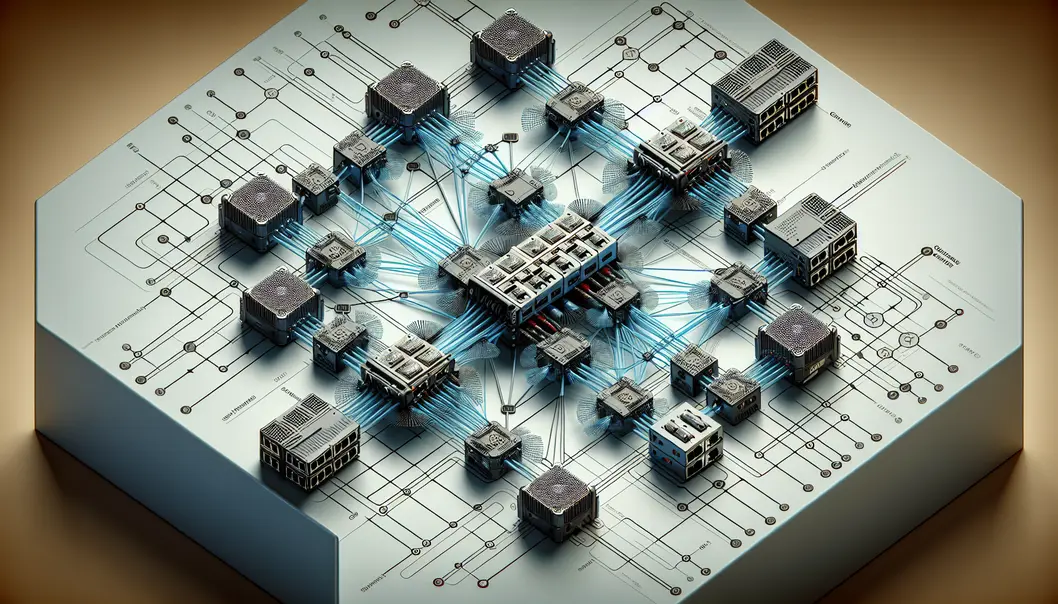

The right transceiver speed is rarely decided by optics alone. In AI cluster networks, it is shaped by the fabric topology, the oversubscription target, and the number of optical lanes your design can absorb without adding operational friction. A small or mid-sized leaf-spine fabric may gain excellent efficiency from 400G links because radix, switch port counts, and cable density stay balanced. Once cluster scale rises, however, 800G often becomes attractive not because it is newer, but because it reduces the number of links required to build the same east-west bandwidth.

That tradeoff matters most in GPU training environments. These networks generate intense all-to-all traffic, frequent synchronization bursts, and strict sensitivity to congestion. In that setting, topology choices can amplify or erase the value of a faster optic. If the fabric already needs many spine ports and parallel trunks, moving from 400G to 800G can simplify the graph. Fewer physical links can mean fewer patching points, fewer optics to manage, and less pressure on front-panel space. If the cluster is still growing in stages, though, 400G may provide more granular scaling. It lets operators add bandwidth in smaller steps and align upgrades with actual rack deployment.

Port breakout strategy also influences the decision. A design that relies on splitting high-speed uplinks into lower-speed server-facing or inter-switch paths may favor one form factor over another depending on how evenly the topology maps to rack pods. The question is not simply whether 800G delivers more throughput per port. The real question is whether your fabric can use that throughput cleanly. If traffic patterns, switch silicon, and rack grouping leave half that capacity stranded, a nominally faster link may lower efficiency.

Cabling architecture should be considered at the same time as topology. Dense AI fabrics often depend on structured fiber systems, MPO-based trunks, and tightly controlled polarity plans. As speed rises, mistakes in lane mapping become more disruptive because each failed link carries more traffic and affects larger training jobs. That is why topology planning should sit alongside a disciplined migration path for parallel optics and patching design, especially when reviewing 400G and 800G MPO/MTP loss budget and polarity guidance.

In practice, 400G fits fabrics that value flexible expansion, lower step size, and broad interoperability across current switching tiers. 800G fits designs where port scarcity, spine scaling, and cabling density have become limiting factors. The better choice is the one that matches the shape of the network, not just the headline speed.

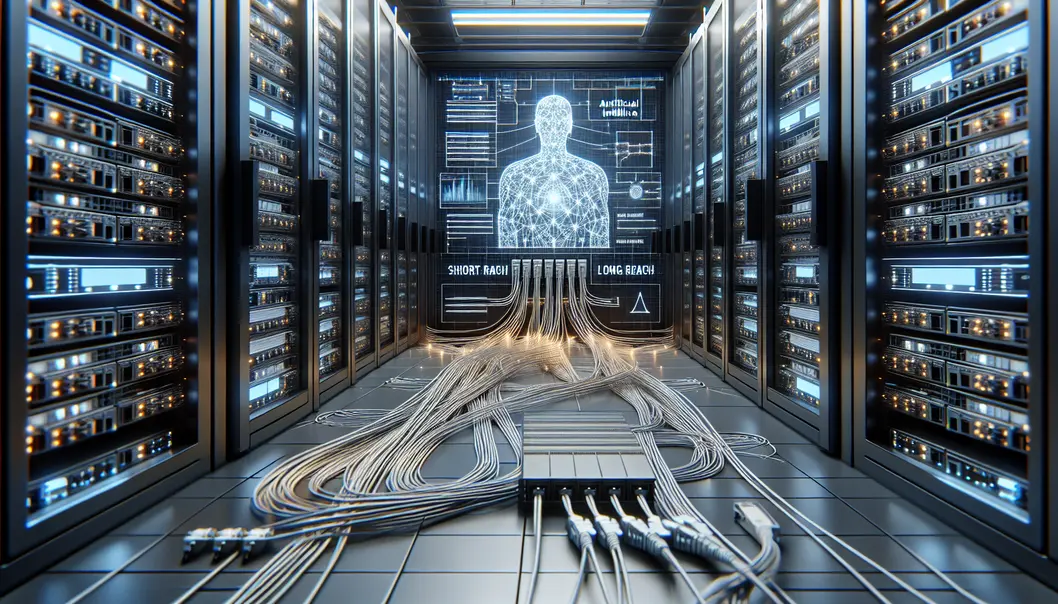

Choosing 400G vs 800G Transceivers for AI Clusters: Reach, Modulation, and Cabling Realities

After the fabric is defined, the next decision is physical: how far the link must run, how the signal is encoded, and what cabling plant can support it without shrinking operational margin. This is where many 400G versus 800G decisions become practical rather than theoretical. In AI clusters, the shortest links usually sit inside a row or between adjacent racks. Those paths often favor simple, high-density optical designs over long-reach flexibility. As distance grows across rows, pods, or halls, optical reach and loss budget start to dominate the choice.

A useful way to think about the trade-off is that higher speed does not remove physical constraints. It often makes them less forgiving. A 400G link may use fewer optical lanes at a higher per-lane rate, or more lanes at a lower one, depending on the interface type. An 800G link pushes that further. The modulation method, lane count, and wavelength plan all affect how sensitive the transceiver is to insertion loss, reflections, and fiber quality. In very short links, parallel multimode optics can look attractive because they align with existing structured cabling and offer straightforward deployment. But they demand disciplined polarity management, trunk planning, and connector cleanliness. For teams evaluating those trade-offs, this guide to 400G and 800G MPO/MTP loss budget and polarity is especially relevant.

Single-mode options become more compelling as AI clusters expand. They support longer reaches and can simplify future upgrades, especially when the network may move from 400G to 800G without a full recabling cycle. That said, single-mode is not automatically the better choice. The right answer depends on whether the current plant can meet connector, splice, and patching tolerances at scale. A design that looks clean on paper can become fragile if the cabling path includes too many connection points.

Modulation also matters because it influences both reach and implementation complexity. Simpler schemes tend to be easier to validate operationally, while denser signaling can improve fiber efficiency but tighten performance margins. In AI environments, where link counts are high and fault isolation must be fast, that operational aspect is easy to underestimate. Choosing 800G for backbone aggregation and 400G for leaf-to-server or shorter fabric links can sometimes produce a cleaner optical strategy than forcing one speed everywhere.

The core principle is straightforward: choose the transceiver only after matching reach target, fiber type, connector ecosystem, and migration horizon. When those four factors align, 400G and 800G decisions become less about headline speed and more about building an optical layer that remains stable as cluster density grows.

Choosing 400G or 800G for AI Clusters: The Real Power, Thermal, Cost, and Latency Trade-offs

As optical reach and cabling choices narrow the field, the final decision between 400G and 800G usually comes down to four operational pressures: power draw, heat density, cost per delivered bit, and latency under AI traffic patterns. These factors are tightly linked. A faster port can reduce the number of optics, fibers, and switch lanes you need, yet it can also raise module power and thermal concentration at the faceplate. That means the best choice is rarely the headline speed alone. It is the system outcome across the cluster.

In many AI fabrics, 800G looks attractive because it increases bandwidth without doubling the number of ports. Fewer optical links can simplify topology design and lower the count of switch-to-switch connections. That can improve rack-level cable management and reduce the operational burden of provisioning and testing. In dense clusters, those savings matter. They may also offset a higher per-module price, especially when the network design would otherwise require many more 400G endpoints. This is why teams often evaluate not just transceiver price, but the broader true cost per data center port.

Power and thermal behavior, however, often decide what is practical. An 800G transceiver can deliver better bandwidth efficiency per unit of rack space, but it also concentrates more heat into each socket. If your switch thermal envelope is already tight, denser optics may trigger derating, airflow redesign, or stricter placement rules. A 400G design spreads capacity across more modules, which can increase total component count, yet each individual port may be easier to cool and operate within conservative margins. For operators scaling rapidly, that difference affects maintenance windows, failure rates, and long-term stability more than a simple watts-per-gigabit calculation suggests.

Latency adds another layer. The raw serialization advantage of 800G can help when east-west traffic is intense and every stage of oversubscription is being minimized. More importantly, 800G can reduce the need for parallel links and link aggregation in some architectures, trimming coordination overhead and simplifying traffic engineering. Still, latency gains are often architectural rather than magical. If the fabric is poorly balanced, faster optics alone will not fix congestion, buffer pressure, or job completion delays.

Cost therefore has to be framed in phases. For near-term deployments with constrained budgets, 400G can offer a safer entry point, broader operational familiarity, and easier incremental scaling. For clusters designed around aggressive GPU growth, 800G often becomes the better long-horizon choice because it preserves front-panel density, reduces link sprawl, and aligns more naturally with next-generation fabric expansion. The right answer is the one that fits your thermal headroom, topology plan, and cost model at full cluster scale, not just on day one.

Choosing 400G or 800G with Confidence: Standards, Compatibility, and Deployment Rules for AI Cluster Optics

After weighing power, thermal, cost, and latency trade-offs, the next filter is simpler but often more decisive: will the optics fit the network you actually need to build? In AI cluster environments, that question reaches beyond raw speed. It includes standards alignment, switch and NIC interoperability, connector choices, breakout strategy, and the operational discipline needed to keep dense optical fabrics stable at scale.

The first best practice is to treat 400G and 800G as ecosystem decisions, not just module decisions. A transceiver must match the host form factor, lane architecture, fiber plant, and reach target at the same time. A 400G link may use 4 or 8 electrical and optical lanes, depending on the interface type. An 800G link usually doubles lane density or per-lane signaling demands. That affects everything from port mapping to test procedures. If the AI cluster relies on spine-leaf fabrics with heavy east-west traffic, standardization across a limited set of link types reduces mistakes during expansion. Mixed optical types can work, but they raise sparing complexity and increase the chance of polarity, patching, or breakout errors.

Compatibility planning should also start at the physical layer. Multi-fiber links demand careful attention to connector count, lane assignment, and polarity method. Single-mode and multimode paths are not interchangeable, and short-reach assumptions often fail once real patch panels and cross-connects are added. That is why teams should validate the full channel, not only the transceiver datasheet. A useful reference for this area is this guide to 400G/800G MPO/MTP loss budget and polarity pitfalls. In AI clusters, minor cabling errors can strand high-value compute resources, so optical standards and cabling practice must be tightly aligned.

Interoperability discipline matters just as much. Even when two modules claim the same standard, host qualification, firmware behavior, digital diagnostics, and FEC expectations can vary. The safer approach is to validate optics against the target switch and adapter platforms before volume deployment. It is also wise to define a small set of approved reaches and media types for each network tier. For example, one standard for in-row links, another for row-to-spine, and another for longer campus or building paths. That keeps operations predictable as the cluster grows.

The strongest deployment rule is consistency with room for migration. Choose 400G when it fits current lane design, rack density, and upgrade timing. Choose 800G when the surrounding switching, cabling, and validation process are already prepared for it. In AI networks, standards compliance is necessary, but deployment success comes from matching that compliance to a repeatable, supportable architecture.

Final thoughts

Selecting between 400G and 800G transceivers for AI cluster networks depends on performance requirements, cost, and scalability. By analyzing technical specs, designing efficient topology fabrics, considering optical reach, evaluating power and cost implications, and adhering to deployment best practices, network engineers and planners can optimize their infrastructure confidently. As AI technology demands continue to grow, making the right transceiver choice ensures your data center remains future-ready.

Discover high-speed optics and solutions tailored to AI infrastructure. Talk to ABPTEL about transceivers, cabling, and deployment strategies.

Learn more: https://abptel.com/contact/

About us

ABPTEL provides high-speed optical transceivers, MTP/MPO cabling systems, DAC and AOC cables, PoE switches, FTTA solutions, and fiber tools for data center, AI, telecom, and network infrastructure projects.